For those interested in GPUs and in speculating on the future of HPC, read on. Pictures of a slide deck presented by NVIDIA’s chief research guru, Bill Dally, have been floating around the interwebs over the past several days. They detail what may become a realistic roadmap for an exascale computing platform by 2017. Given the context, the architecture includes NVIDIA GPU silicon far beyond what we have today [or tomorrow with Fermi].

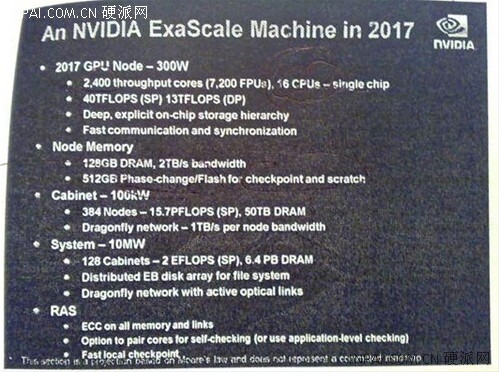

The top level of the architecture is a GPU “Node”, as it’s presented, that chews an estimated 300W and includes 2,400 throughput cores, or 7,200 FPUs. These 2,400 throughput cores are married to 16 host CPUs on what looks to be a single chip. According to Dally, the performance needles will peg around 40TF single precision and 13TF double precision. That double precision number, per node, is over twice what we commonly find in a dense compute rack [~6TF]! He has a bullet point stating, “Deep, explicit on-chip storage hierarchy.” One can only imagine that this means a multi-level cache with additional levels of complexity [embedded DRAM?]. Node memory will include 128GB of DRAM running at 2TB/s and 512GB of phase-change/flash memory for checkpoint and scratch. Given that flash is mentioned in this context, it will likely be connected to the processor more tightly than today’s SATA/SAS offerings allow.
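To put the per-node numbers in perspective, here is a minimal Python sketch that derives a few ratios from the figures quoted above. The variable names are my own, and the ratios are back-of-the-envelope arithmetic on the slide numbers, not anything Dally presented.

```python
# Per-node figures as quoted from the slides in this post.
CORES = 2400          # throughput cores
FPUS = 7200           # floating point units
SP_TFLOPS = 40.0      # single precision
DP_TFLOPS = 13.0      # double precision
WATTS = 300.0         # estimated node power
DRAM_TB_PER_S = 2.0   # DRAM bandwidth

# Derived ratios (illustrative only).
fpus_per_core = FPUS / CORES                     # FPUs behind each throughput core
sp_gflops_per_watt = SP_TFLOPS * 1000 / WATTS    # single precision efficiency
dp_gflops_per_watt = DP_TFLOPS * 1000 / WATTS    # double precision efficiency
bytes_per_sp_flop = DRAM_TB_PER_S / SP_TFLOPS    # DRAM bytes available per SP flop

print(f"FPUs per core:      {fpus_per_core:.1f}")
print(f"SP GFLOPS per watt: {sp_gflops_per_watt:.1f}")
print(f"DP GFLOPS per watt: {dp_gflops_per_watt:.1f}")
print(f"DRAM bytes per SP flop: {bytes_per_sp_flop:.3f}")
```

The low bytes-per-flop figure is one plausible reason for the emphasis on a deep on-chip storage hierarchy, though that is my reading rather than something stated on the slides.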

The proposed cabinet and system design scales up as well. Dally quotes 100kW for a single cabinet. Ouch! This is far beyond the 50kW that DARPA is looking for in UHPC. However, the cabinet does include 384 nodes with 50TB of DRAM to peg out at 15.7PF of single precision goodness [yes, PF]. This is 15X the performance required for the latest DARPA HPC effort, in a single cabinet. Do the math. You get 15X the performance for twice the power. Not a bad tradeoff. Dally lays out a 10MW system requiring 128 cabinets to reach 2EF [exaflops] single precision. The eventual system would include 6.4PB of DRAM and a distributed disk [term used loosely] array totaling several exabytes.
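For anyone who actually wants to do the math, here is a rough Python sketch that scales the quoted node figures up to the cabinet and the full system. The constant names are mine, and the inputs are simply the numbers reported in this post, so treat the results as a sanity check rather than an official breakdown.

```python
# Figures quoted from the slides (as reported in this post).
NODE_SP_TF = 40.0         # single precision TF per node
NODE_WATTS = 300.0        # estimated power per node
NODE_DRAM_GB = 128        # DRAM per node
NODES_PER_CABINET = 384
CABINET_KW = 100.0        # quoted cabinet power
CABINETS = 128
DARPA_CABINET_KW = 50.0   # UHPC cabinet power figure mentioned above

# Cabinet level: multiply node figures by the node count.
cabinet_sp_pf = NODE_SP_TF * NODES_PER_CABINET / 1000       # about 15.4 PF
cabinet_dram_tb = NODE_DRAM_GB * NODES_PER_CABINET / 1000   # about 49 TB
cabinet_node_kw = NODE_WATTS * NODES_PER_CABINET / 1000     # about 115 kW, nodes alone

# System level: multiply cabinet figures by the cabinet count.
system_sp_ef = cabinet_sp_pf * CABINETS / 1000     # about 1.97 EF
system_dram_pb = cabinet_dram_tb * CABINETS / 1000 # about 6.3 PB
system_mw = CABINET_KW * CABINETS / 1000           # 12.8 MW

power_ratio = CABINET_KW / DARPA_CABINET_KW        # 2.0, "twice the power"

print(f"Cabinet: {cabinet_sp_pf:.2f} PF SP, {cabinet_dram_tb:.1f} TB DRAM, "
      f"{cabinet_node_kw:.1f} kW of nodes")
print(f"System:  {system_sp_ef:.2f} EF SP, {system_dram_pb:.2f} PB DRAM, "
      f"{system_mw:.1f} MW at the quoted cabinet power")
print(f"Cabinet power vs. DARPA figure: {power_ratio:.1f}x")
```

The recomputed performance and memory totals land close to the quoted 15.7PF, 50TB, 2EF, and 6.4PB. The power products come out somewhat higher than the quoted 100kW and 10MW, which may just reflect rounding or estimates on the slides that were never meant to multiply out exactly.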

All in all, Dally and NVIDIA have some big dreams for their potential exascale computing platform. However, given NVIDIA’s technology leadership in silicon design, are they so far-fetched? If you take Spring of 2010 as a baseline, with 512 throughput cores on a single GPU socket, and extrapolate out to 2017, they’re essentially following Moore’s law. Watch out for NVIDIA. They are making a serious play to become a ubiquitous high performance computing leader.
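The Moore’s law comparison can be checked with a quick Python sketch. It computes the doubling period implied by going from 512 cores in 2010 to the 2017 node. Which 2017 number to compare against is an assumption on my part: the sketch tries both the 2,400 throughput cores and the 7,200 FPUs, since it is not clear from the slides that a 2010 core and a 2017 throughput core are the same unit.

```python
import math

BASE_YEAR, BASE_CORES = 2010, 512   # Spring 2010 baseline from the post
TARGET_YEAR = 2017
YEARS = TARGET_YEAR - BASE_YEAR

def implied_doubling_years(start: float, end: float, years: float) -> float:
    """Years per doubling needed to grow from start to end over the given span."""
    return years / math.log2(end / start)

# Assumption: compare against both 2017 figures, since the units may differ.
by_cores = implied_doubling_years(BASE_CORES, 2400, YEARS)
by_fpus = implied_doubling_years(BASE_CORES, 7200, YEARS)

# What a strict two-year doubling would predict for 2017.
two_year_projection = BASE_CORES * 2 ** (YEARS / 2)

print(f"Doubling period vs. 2,400 cores: {by_cores:.2f} years")
print(f"Doubling period vs. 7,200 FPUs:  {by_fpus:.2f} years")
print(f"Strict 2-year doubling from 512: {two_year_projection:.0f} units")
```

Counted in FPUs, the implied doubling period is a bit under two years, which fits the Moore’s law framing; counted in throughput cores, it is closer to three years, so the roadmap looks less aggressive under that reading.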

You can read the source article here [Google Translated from German].