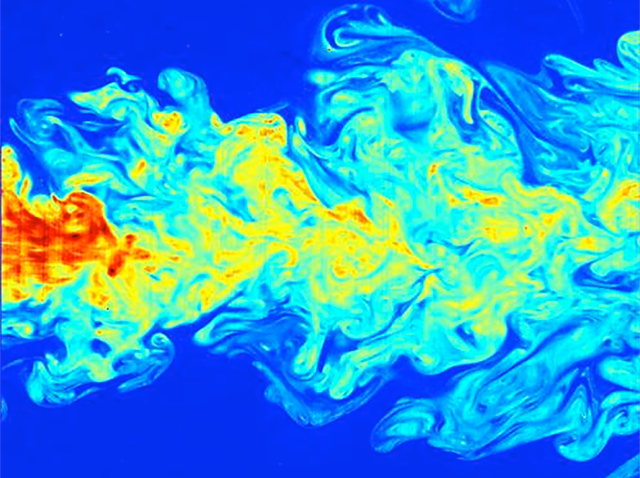

Solving the Navier-Stokes equations is popular because they describe the physics of fluid flow in a number of areas of interest to scientists and engineers. By solving these equations, the flow velocity can be calculated, and then other quantities of interest, such as pressure or temperature, may be determined.

Since solving these equations is computationally expensive (i.e., it takes a lot of CPU time) and is so valuable to many industries, any performance enhancement that can be found is of great value. As an example, Lawrence Berkeley National Lab worked to optimize the application code SMC on Intel Xeon processors and the Intel Xeon Phi coprocessor. The application needed to be optimized and modernized to take advantage of the number of threads available for computation on the Intel Xeon processor and the Intel Xeon Phi coprocessor.

SMC is mainly written in Fortran and uses both OpenMP and MPI. Starting from a baseline run, four optimizations were investigated and implemented in order to speed up SMC: thread usage, stack memory, blocking, and vectorization.

Baseline – The host system used the Intel Xeon Processor E5-2697 v2 with 2 sockets and 12 cores each, so 48 threads were available (using hyperthreading). The Intel Xeon Phi Coprocessor 7120P, with a total of 244 hardware threads, was also used. SMC was run with 1 to 240 threads on the Intel Xeon Phi, showing a speedup of about 38 X. Running on just the Intel Xeon processor showed a speedup of only about 10 X using 48 threads.
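To put the baseline figures in perspective, a speedup can be divided by the thread count to give a parallel efficiency. The following is a minimal sketch, assuming each quoted speedup is measured against a single thread on the same device. The function name `parallel_efficiency` is illustrative and does not come from SMC.

```python
def parallel_efficiency(speedup: float, threads: int) -> float:
    """Return the fraction of ideal linear scaling: speedup divided by thread count."""
    if threads <= 0:
        raise ValueError("threads must be positive")
    return speedup / threads


# Approximate baseline figures quoted in the article (assumed to be
# relative to one thread on the same device).
baseline = {
    "Intel Xeon Phi, 240 threads": (38.0, 240),
    "Intel Xeon, 48 threads": (10.0, 48),
}

for label, (speedup, threads) in baseline.items():
    efficiency = parallel_efficiency(speedup, threads)
    print(f"{label}: {efficiency:.1%} of ideal scaling")
```

Under that assumption, the baseline reaches roughly 16% of ideal scaling on the coprocessor and roughly 21% on the host, which is why the remaining optimizations focus on concurrency.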

Thread Usage – The next optimization was to look at thread use, as the code was using OpenMP. Larger parallel sections were assigned to each thread, and Intel VTune Amplifier XE was used to look at hotspots. Again, performance increased on the main processor, but it degraded a little at high thread counts.

Stack Memory – Local variables were switched from allocatable arrays to automatic arrays, which are typically placed on the stack rather than the heap. This optimization increased performance by up to 70 X compared to the baseline when running on the main processor.

Blocking – Subdomains were then further divided into blocks, and the block size was made a run-time parameter. This can result in better cache performance.
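The loop structure behind blocking can be sketched as follows. This is an illustration only: it applies a generic 5-point stencil to a small 2D grid, not SMC's actual Fortran kernels, and the names `block_ranges` and `stencil_blocked` are hypothetical. Python will not show the cache benefit; the point is that the block size is an ordinary argument that can be tuned at run time without changing the result.

```python
def block_ranges(n, block):
    """Yield (start, stop) pairs that cover range(n) in chunks of at most `block`."""
    if block <= 0:
        raise ValueError("block must be positive")
    for start in range(0, n, block):
        yield start, min(start + block, n)


def stencil_blocked(u, block):
    """Apply a 5-point stencil to the interior of the 2D grid `u`, block by block."""
    ny, nx = len(u), len(u[0])
    out = [[0.0] * nx for _ in range(ny)]
    # The outer two loops walk over blocks of the interior points;
    # the inner two loops stay inside one block so its data is reused
    # while it is still likely to be in cache (in a compiled language).
    for j0, j1 in block_ranges(ny - 2, block):
        for i0, i1 in block_ranges(nx - 2, block):
            for j in range(j0 + 1, j1 + 1):
                for i in range(i0 + 1, i1 + 1):
                    out[j][i] = (u[j - 1][i] + u[j + 1][i]
                                 + u[j][i - 1] + u[j][i + 1]
                                 - 4.0 * u[j][i])
    return out


# A small test grid; the block size is chosen at run time.
grid = [[float(i * i + j) for i in range(7)] for j in range(6)]
small_blocks = stencil_blocked(grid, 2)
one_big_block = stencil_blocked(grid, 100)
print(small_blocks == one_big_block)
```

Because every interior point is visited exactly once regardless of the block size, the two calls produce identical grids. Only the traversal order, and therefore the memory access pattern, differs.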

Vectorization – The application was analyzed to see where it could take advantage of SIMD instructions so that the code would run well on the Intel Xeon Phi. The chemical reaction rate calculations were rewritten to make better use of the SIMD instructions on the Intel Xeon Phi.

Overall, these techniques improved the concurrency of the application and its use of the Intel Xeon Phi coprocessor. The application now achieves a speedup of up to 100 X with 240 threads, and runs up to 16 X faster than with one thread on the host processors.

Source: Intel, USA and Lawrence Berkeley National Laboratory, USA
