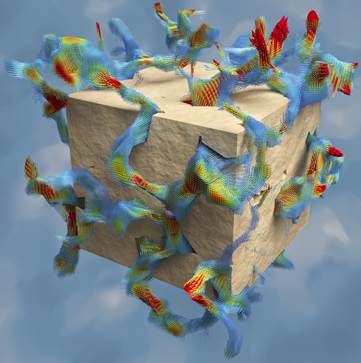

Simulated fluid flow in a small sub-volume. Red and blue arrows indicate fast and slow flow velocities, while yellow and green arrows represent intermediate velocities.

An interdisciplinary research team from the University of Jyväskylä (JYU) in Finland has set a new world record in the field of fluid flow simulations through porous materials. The team, coordinated by Dr. Keijo Mattila of JYU, used the world’s largest 3D images of a porous material, synthetic X-ray tomography images of the microstructure of Fontainebleau sandstone, and successfully simulated fluid flow through a sample 1.5 cubic centimeters in size at submicron resolution.

This is an unprecedented resolution for the given sample size and corresponds to 16,384³ image voxels (a voxel is the 3D analogue of a pixel). To grasp the proportions, imagine that an ordinary die is cut into over 16 thousand slices and each slice is then photographed with a resolution exceeding the best available in cinemas today.
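For readers who want the number spelled out, the voxel count follows directly from the image dimensions. The snippet below is purely illustrative arithmetic; the variable names are ours and are not taken from the team's software.

```python
# Voxels along one edge of the cubic image, as stated in the text.
side_voxels = 16_384

# A cubic image has side^3 voxels in total.
total_voxels = side_voxels ** 3

print(f"{total_voxels:,} voxels (about {total_voxels / 1e12:.1f} trillion)")
```

In other words, the image holds roughly 4.4 trillion voxels.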

In addition to JYU, the research team included scientists from the Tampere University of Technology (Finland), the Natural Resources Institute of Finland (Luke), Åbo Akademi University (Finland), and the Petrobras Research Center (CENPES, Brazil). The main challenge faced by the team was managing the vast computer memory requirement, almost 137 terabytes, which equals the combined memory of over 17 thousand current laptops, while also orchestrating a swift interplay between the numerous computing units operating in tandem. After these technical difficulties were solved, the simulation was executed on the Archer supercomputer hosted by the Edinburgh Parallel Computing Centre, UK. As much as 96% of the whole computer was used for 10 hours straight, and the simulation consumed a total of 7,500 kWh of energy. The volume of the simulated sample was roughly 1,000 times larger than what has been achieved in state-of-the-art pore-scale flow simulations at the same resolution. The full steady-state flow simulation demonstrated current computing capabilities and exposed new opportunities in the research of porous materials.
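The figures quoted above can be cross-checked with a few lines of arithmetic. This is a rough sketch only: it assumes decimal units (1 TB = 10^12 bytes), treats "almost 137 terabytes" and "over 17 thousand laptops" as exact values, and averages the memory over all voxels of the 16,384³ image, including solid ones. The variable names are ours.

```python
memory_bytes = 137e12        # "almost 137 terabytes", decimal TB assumed
laptops = 17_000             # "over 17 thousand current laptops"
total_voxels = 16_384 ** 3   # size of the simulated image

# Memory implied per laptop, in gigabytes.
gb_per_laptop = memory_bytes / laptops / 1e9

# Average memory per image voxel, in bytes.
bytes_per_voxel = memory_bytes / total_voxels

# Average power draw: 7,500 kWh spent over a 10-hour run.
avg_power_kw = 7_500 / 10

print(f"about {gb_per_laptop:.1f} GB per laptop")
print(f"about {bytes_per_voxel:.0f} bytes per voxel")
print(f"about {avg_power_kw:.0f} kW average power")
```

Under these assumptions the comparison implies laptops with about 8 GB of memory each, an average budget of roughly 31 bytes per voxel, and an average power draw of about 750 kW during the run.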

Porous materials are abundant in nature and industry and have a broad influence on societies, as their properties affect, for example, oil recovery, erosion and the propagation of pollutants. Permeability, an indicator of how easily a porous material allows fluids to pass through it, is one example of their transport properties. Often the internal structure of a porous material encompasses relevant features at multiple length scales. This hinders research on the relation between structure and transport properties: laboratory experiments typically cannot distinguish contributions from individual scales, while computer simulations cannot capture multiple scales because of limited computing capabilities. The reported extreme simulation shows that direct fluid flow simulations at the pore scale are possible with system sizes far beyond what has been previously reported. This advancement is important for, among other fields, soil and petroleum rock research, where the achieved system size allows the material properties to be captured more accurately.

“Current computing resources permit simulations of this caliber to be used as a high-end tool, not as part of a common workflow. Nevertheless, such a tool may be instrumental for fundamental research when trying to understand the properties of heterogeneous porous materials,” Dr. Mattila explains.

The simulation software was based on the lattice Boltzmann method and, in the case of the CPU-based Archer computing system, the parallel processing was executed using a hybrid MPI/OpenMP implementation of the software. To further demonstrate the capabilities of current computing resources, the team executed benchmark simulations on the Titan supercomputer using a combined MPI/CUDA version of the simulator. Titan is a GPU-based system located at the Oak Ridge National Laboratory, US, and is currently ranked 2nd among supercomputers. The largest benchmark simulation, covering half of the 16,384³ image, was executed using 16,384 GPUs for computing, 88% of the GPUs available, and achieved a sustained computational performance of 1.77 PFLOPS.
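For readers unfamiliar with the lattice Boltzmann method, the toy example below sketches its basic collide-and-stream cycle. It is not the team's solver: it assumes a two-dimensional D2Q9 lattice, a single-relaxation-time (BGK) collision, fully periodic boundaries with no solid pore walls, and plain Python instead of MPI, OpenMP or CUDA. All names and parameter values (`TAU`, `step`, the 8×8 grid) are illustrative choices of ours.

```python
# D2Q9 lattice: nine discrete velocities and their standard weights.
C = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1),
     (1, 1), (-1, 1), (-1, -1), (1, -1)]
W = [4 / 9] + [1 / 9] * 4 + [1 / 36] * 4
TAU = 0.8  # relaxation time (assumed value; it sets the fluid viscosity)


def equilibrium(rho, ux, uy):
    """Second-order equilibrium distributions for one lattice node."""
    usq = ux * ux + uy * uy
    feq = []
    for (cx, cy), w in zip(C, W):
        cu = cx * ux + cy * uy
        feq.append(w * rho * (1 + 3 * cu + 4.5 * cu * cu - 1.5 * usq))
    return feq


def step(f, nx, ny):
    """One BGK collision followed by streaming on a periodic grid."""
    new = [[[0.0] * 9 for _ in range(ny)] for _ in range(nx)]
    for x in range(nx):
        for y in range(ny):
            node = f[x][y]
            rho = sum(node)  # local density
            ux = sum(fi * c[0] for fi, c in zip(node, C)) / rho
            uy = sum(fi * c[1] for fi, c in zip(node, C)) / rho
            feq = equilibrium(rho, ux, uy)
            for i, (cx, cy) in enumerate(C):
                # Relax towards equilibrium, then move to the neighbour node.
                post = node[i] - (node[i] - feq[i]) / TAU
                new[(x + cx) % nx][(y + cy) % ny][i] = post
    return new


def total_mass(f):
    """Sum of all distribution values, i.e. the total fluid mass."""
    return sum(sum(node) for column in f for node in column)


NX, NY = 8, 8
# Fluid at rest with a small density bump in the middle of the grid.
f = [[equilibrium(1.1 if (x, y) == (4, 4) else 1.0, 0.0, 0.0)
      for y in range(NY)] for x in range(NX)]

mass_before = total_mass(f)
for _ in range(20):
    f = step(f, NX, NY)
mass_after = total_mass(f)

print("mass drift:", abs(mass_after - mass_before))
```

Each node only needs data from its nearest neighbours during streaming, which is one reason the method is commonly regarded as well suited to large parallel machines. A production pore-scale solver would additionally handle solid walls, drive the flow with a pressure gradient or body force, and work in three dimensions.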

“These extreme simulations are the culmination of more than a decade of continuous research on the topic. In fact, from my perspective, the research already began around the mid-1990s in the Soft Condensed Matter and Statistical Physics research group led by Professor Jussi Timonen. The latest boost for the research came with our participation in two EU projects: CRESTA focused on the challenge of exaflop computing in applications, while SimPhoNy considers multiscale materials modeling. The computing services provided by CSC – IT Center for Science Ltd, Finland, have also been pivotal in the development of high-performance simulation software,” Dr. Mattila concludes.