In this video from the 2016 Stanford HPC Conference, Michael Jennings from LBNL presents: Node Health Check (NHC) Project Update.

In this video from the 2016 Stanford HPC Conference, Michael Jennings from LBNL presents: Node Health Check (NHC) Project Update.

“In this follow-up to his 2014 presentation at the Stanford HPCAC Conference, Michael will provide an update on the latest happenings with the LBNL NHC project, new features in the latest release, and a brief overview of the roadmap for future development.”

TORQUE, SLURM, and other schedulers/resource managers provide for a periodic “node health check” to be performed on each compute node to verify that the node is working properly. Nodes which are determined to be “unhealthy” can be marked as down or offline so as to prevent jobs from being scheduled or run on them. This helps increase the reliability and throughput of a cluster by reducing preventable job failures due to misconfiguration, hardware failure, etc.

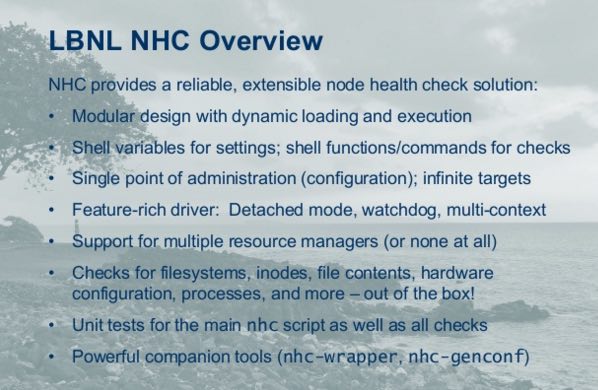

Though many sites have created their own scripts to serve this function, the vast majority are one-off efforts with little attention paid to extensibility, flexibility, reliability, speed, or reuse. Developers at Lawrence Berkeley National Laboratory created this project in an effort to change that. LBNL Node Health Check (NHC) has several design features that set it apart from most home-grown solutions:

- Reliable – To prevent single-threaded script execution from causing hangs, execution of subcommands is kept to an absolute minimum, and a watchdog timer is used to terminate the check if it runs for too long.

- Fast – Implemented almost entirely in native

bash(2.x or greater). Reducing pipes and subcommands also cuts down on execution delays and related overhead. - Flexible – Anything which can be described in a shell function can be a check. Modules can also populate cache data and reuse it for multiple checks.

- Extensible – Its modular functional interface makes writing new checks easy. Just drop modules into the scripts directory, then add your checks to the config file!

- Reusable – Written to be ultra-portable and can be used directly from a resource manager or scheduler, run via cron, or even spawned centrally (e.g., via

pdsh). The configuration file syntax allows for all compute nodes to share a single configuration.

Michael Jennings has been a UNIX/Linux Systems Administrator and a C/Perl developer for 20 years and has been author of or contributor to numerous open source software projects including Eterm, Mezzanine, RPM, Warewulf, and TORQUE. Additionally, he co-founded the Caos Foundation, creators of CentOS, and has been lead developer on 3 separate Linux distributions. He currently works as a Senior HPC Systems Engineer for Lawrence Berkeley National Laboratory and is the primary author/maintainer for the LBNL Node Health Check (NHC) project. He has also served for 2 years as President of SPXXL, the extreme-scale HPC users group.