In this special guest feature, Jan Rowell writes that an eye-popping visualization of two black holes colliding demonstrates 3D Adaptive Mesh Refinement volume rendering on next-generation Intel® Xeon Phi™ processors.

When Intel took the covers off its new Intel® Xeon Phi™ processor at the International Supercomputing Conference (ISC) in Frankfurt, HPC leaders were quick to identify the potential impact of the new processors.

Case in point: The Stephen Hawking Center for Theoretical Cosmology (CTC) at Cambridge University, in collaboration with Queen Mary University of London, where scientists say the Intel Xeon Phi processor, paired with advances in Intel-supported Software Defined Visualization (SDVis), will give them a faster and easier path to critical insights. Those insights may one day deepen understanding of the origins of the universe and the mysteries of gravity. At ISC, CTC researchers showed off a demo that highlights the performance of the next-generation Intel Xeon Phi processor, previously code-named Knights Landing, and the capabilities enabled by Intel-supported SDVis.

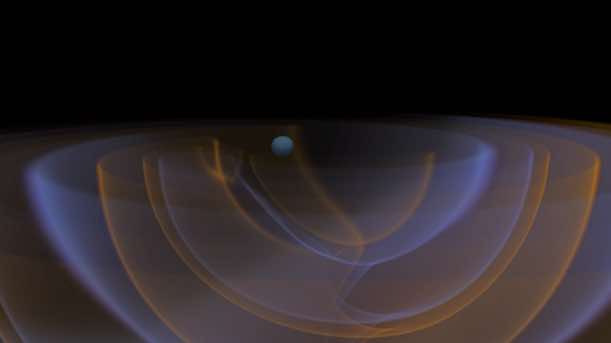

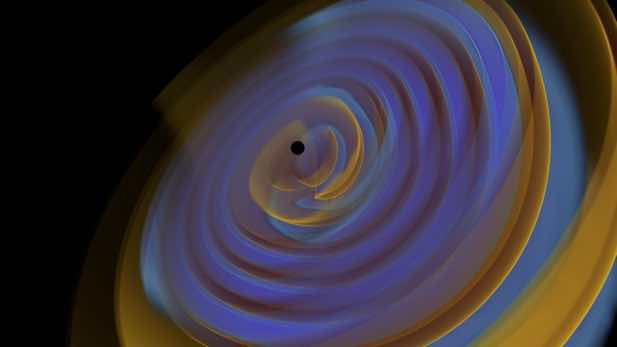

The series of visualizations, showing two black holes circling each other, colliding, and producing gravitational waves, was produced by directly volume rendering the AMR data, with no need to resample it to a regular grid. (Images courtesy Intel and the Stephen Hawking Center for Theoretical Cosmology.)

Access to Intuition

CTC is dedicated to research in cosmology, general relativity, and particle physics. The center houses the 38 teraflops COSMOS supercomputer, an SGI system that was the first very large, single-image, shared-memory computer to incorporate the Intel Xeon Phi coprocessor. CTC furthered its leadership in scientific supercomputing in 2014 when it became an Intel® Parallel Computing Center (Intel® PCC). Intel PCCs are pathfinders in modernizing applications to deliver the benefits of computing advances to their user communities.

For ISC, CTC worked with Intel to create a demo spotlighting the newest member of the Intel Xeon Phi product family, the Intel Xeon Phi processor. In contrast to the earlier coprocessors in the family, the new chip is a general-purpose processor, able to boot a standard operating system and directly access large amounts (up to 384 GB) of system memory. The demo team also wanted to reflect visualization’s role in delivering scientific insights from data-rich simulations.

“Visualization is a scientific tool for us: not just a way to communicate, but an important part of our research,” says Markus Kunesch, a doctoral student in the Cambridge University Department of Applied Mathematics and Theoretical Physics (DAMTP). “As a scientist, you need to develop intuition for the physical system you are studying. What happens if I change this? How will it react if I change that? If you have quick, easy access to visualization, that’s access to intuition. The shorter the time from calculation to visualization, the faster we can develop intuition and understanding.”

The demo focuses on CTC’s numerical simulation of the generation of gravitational waves from the collision of two black holes. Like scientists the world over, CTC experts were excited by the National Science Foundation’s announcement in February 2016 that the Laser Interferometer Gravitational-wave Observatory (LIGO) detectors had recorded the first-ever direct observation of gravitational waves. This observation has opened the door to the new field of gravitational wave astronomy and triggered widespread efforts to advance the fledgling field by digging deeper into the data. The LIGO experiment offers an opportunity to see how Einstein’s theoretical predictions align with the observed gravitational wave data.

Replacing 2D Slices with 3D Adaptive Mesh Refinement Volume Rendering

The CTC demo shows the visualized results of a simulation of two black holes colliding. The simulation produces Adaptive Mesh Refinement (AMR) data, which is typically resampled onto a regular grid for volume rendering, negating the memory and time advantages of the underlying AMR structure. The demo instead volume renders the AMR data directly, without resampling, inside the popular scientific visualization tool ParaView.
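
To make the memory argument concrete, here is a rough back-of-the-envelope sketch in Python. It is not taken from the CTC demo: the function names, the refinement ratio of 2, and the assumption that each level refines a fixed fraction of the level below it are all illustrative. It compares the number of cells an AMR hierarchy stores with the number needed to resample the whole domain at the finest resolution.

```python
def uniform_cells(base, levels, ratio=2, dim=3):
    """Cells needed to resample the whole domain at the finest AMR resolution."""
    finest_per_axis = base * ratio ** (levels - 1)
    return finest_per_axis ** dim


def amr_cells(base, levels, refined_fraction, ratio=2, dim=3):
    """Cells stored by a hypothetical AMR hierarchy.

    Assumption for illustration: every level refines the same fraction
    (by volume) of the level below it.
    """
    total = 0
    covered = 1.0  # fraction of the domain volume covered by the current level
    for level in range(levels):
        per_axis = base * ratio ** level
        total += int(covered * per_axis ** dim)
        covered *= refined_fraction
    return total


# Hypothetical numbers: 64^3 base grid, 4 levels, 10% of each level refined.
full = uniform_cells(base=64, levels=4)
sparse = amr_cells(base=64, levels=4, refined_fraction=0.1)
print(f"uniform grid: {full} cells, AMR hierarchy: {sparse} cells")
```

Under these assumed numbers the uniform grid needs 512³ cells, more than a hundred times what the hierarchy stores. Real savings depend on how much of the domain the simulation actually refines.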

Intel’s Jim Jeffers, a project lead on several of Intel’s visualization initiatives who helped develop the demo, believes the collision visualization is the first time the CTC group has been able to volume render the AMR structure directly at interactive rendering rates. With the large memory space on the system and the smaller memory footprint of the AMR data, scientists can not only interactively explore their data by manipulating the camera and transfer functions, but also interactively render large time series of the data.

“We’ve had AMR, and we’ve had volume rendering, but we’ve had to concentrate on one aspect or another,” explains Dr. Pau Figueras from Queen Mary University of London. “To have them together is going to be very helpful in giving scientists the full picture.”

Architectural Advantages of the Intel Xeon Phi Processor

The demo platform shows off Intel® Scalable System Framework (Intel® SSF), the holistic, system-focused design philosophy guiding Intel’s roadmap to exascale supercomputing and beyond. The Intel SSF platform used for the demo includes a dual-socket Intel® Xeon® processor E5 v4 plus four Intel Xeon Phi processors connected via Intel® Omni-Path Architecture fabric. The configuration also includes Intel® Solid-State Drives.

The demo takes advantage of the 384 GB Intel Xeon processor E5 platform memory and the 192 GB of system memory in each of the four Intel Xeon Phi processors. “Because of the massive memory capacity of the Intel Xeon Phi processor compared to GPUs, the demo is able to do in-memory visualization on the processor,” says Jeffers, who is also coauthor of Intel Xeon Phi Processor High Performance Programming: Knights Landing Edition. “We don’t have the bottlenecks in I/O, memory size, and power that you do when you’re moving massive data sets between processors and GPUs. We can do high fidelity rendering of larger data sets with no loss of data resolution, and provide excellent performance.”

That’s highly significant, according to Saran Tunyasuvunakool, another doctoral student at DAMTP. “Solving the memory bandwidth problem is huge,” he says. “Along with high core counts, the Intel Xeon Phi processor lets us have a massive number of threads without having to worry about memory bandwidth. It lets us try new approaches. When you can deploy the system in a way that lets you use the same resources as for compute, and lets you avoid moving data around, you can speed things up tremendously.”

The software used to build the demo includes a modified version of Kitware ParaView* Visualization Toolkit* (VTK*) 5.1, along with Intel-supported SDVis enhancements delivered through open source solutions, including the OSPRay and Embree* ray-tracing software and the OpenSWR* software rasterizer within Mesa. Kitware has collaborated with Intel to incorporate the OSPRay enhancements into the newest release of its Visualization Toolkit (VTK).

On-the-Fly Visualization and New Ways to Work

The architecture of the new Intel Xeon Phi processor, along with Intel-supported SDVis, offers scientists like those at the CTC more powerful and flexible ways to work. If organizations no longer need to perform simulation and rendering on separate clusters, users can render while the simulation runs rather than as a separate job after the simulation completes.

“It simplifies things when you can run on a single processor and not have to offload the visualization work,” says Juha Jäykkä, system manager of the COSMOS supercomputer. Dr. Jäykkä holds a doctorate in theoretical physics and also serves as a scientific consultant to the system’s users. “Programming is easier. The Intel Xeon Phi processor architecture is the next step for getting more performance and more power efficiency, and it is refreshingly convenient to use.”

The Intel Xeon Phi processor’s ability to perform both simulation and visualization can provide quicker results and faster insights. It can also improve resource utilization and make it easier to debug large, complex simulations. “We have simulations that run for weeks,” says Dr. Jäykkä. “Right now, we might write to disk and produce a visualization every 12 hours. But if we’re set up to run simulation and visualization on the same system, we’ll be able to look at the simulation more often. We’ll write to disk less, and make better use of system resources. If we can do a quick visualization on the fly, or every 15 minutes or every hour, we’ll know what’s going on with the simulation. We can sort it better if anything goes wrong. And we get faster insights into the science. That’s the bottom line.”
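
The workflow Dr. Jäykkä describes can be pictured with a toy loop like the one below. It is only a sketch: the function name, the placeholder "simulation step," and the mapping of one step to one minute are assumptions for illustration, and nothing here reflects CTC's actual code or the ParaView or OSPRay APIs. It simply counts how often a run would render from memory versus write to disk when the two cadences are decoupled.

```python
def run_with_in_situ_rendering(total_steps, render_every, checkpoint_every):
    """Advance a toy simulation, rendering in memory often and writing to disk rarely.

    Returns the step numbers at which a render and a checkpoint occurred.
    """
    state = 0.0
    renders, checkpoints = [], []
    for step in range(1, total_steps + 1):
        state += 1.0  # placeholder for one real simulation step
        if step % render_every == 0:
            renders.append(step)      # placeholder: render `state` straight from memory
        if step % checkpoint_every == 0:
            checkpoints.append(step)  # placeholder: write `state` to disk
    return renders, checkpoints


# Assumption for illustration: one step stands in for one minute of wall-clock time.
renders, checkpoints = run_with_in_situ_rendering(
    total_steps=24 * 60,        # a 24-hour run
    render_every=15,            # quick look every 15 minutes
    checkpoint_every=12 * 60,   # disk write every 12 hours
)
print(len(renders), len(checkpoints))  # prints: 96 2
```

In this toy setup, a day-long run yields 96 in-memory looks at the simulation against 2 disk writes, which is the kind of trade the quote points to.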

It’s How the Field Works

For now, CTC is looking to capitalize on the Intel Xeon Phi processor and Intel-supported SDVis. It is also looking further—to ongoing platform innovation as Intel drives toward exascale computing. “HPC is how the field works,” says Dr. Figueras. “Our problems and methods are only becoming more computationally intensive, so we depend on continued technology innovation to advance our science. We have an insatiable appetite. We will always need more, and the sooner we get it, the better. We will never run out of scientific problems to solve.”