In this special guest feature from Scientific Computing World, Wolfgang Gentzsch explains the role of HPC container technology in providing ubiquitous access to HPC.

Wolfgang Gentzsch, President, The UberCloud

The importance and success of high-performance computing (HPC) for engineering and scientific insight, product design, development, and innovation – and for market competitiveness – have been demonstrated in countless case studies. But until recently, HPC remained in the hands of a relatively small group of experts and was not easily accessible to the majority.

In this article, however, I argue – despite the ever-increasing complexity of HPC hardware and system components – that engineers and scientists today have never been closer to ubiquitous HPC: HPC as a common tool for everyone.

The main reason for this advance is the continuous progress of HPC software tools, which greatly assist in the design, development, and optimization of engineering and scientific applications for powerful HPC systems. Here we show how the next chasm on the way to ubiquitous HPC will be crossed by new HPC software container technology, which will dramatically improve software packageability and portability, ease access and use, simplify software maintenance and support, and finally put HPC into the hands of every engineer and scientist.

Ultimately, UberCloud Software Containers are a perfect technology for users who do not have in-house HPC resources, or for companies that need to quickly scale out and increase their capacity for a short period.

First, a little container history

“In April 1956, a refitted oil tanker carried fifty-eight shipping containers from Newark to Houston. From that modest beginning, container shipping developed into a huge industry that made the boom in global trade possible. The Box tells the dramatic story of the container’s creation, the decade of struggle before it was widely adopted, and the sweeping economic consequences of the sharp fall in transportation costs that containerization brought about.” In The Box, economist Marc Levinson explains how the container transformed the world’s economy and opened up new possibilities for trade: “By making shipping so cheap that industry could locate factories far from its customers, the container paved the way for Asia to become the world’s workshop and brought consumers a previously unimaginable variety of low-cost products from around the globe.”

40 years of HPC

The last 40 years have seen our engineering and scientific community struggle continuously with HPC. In 1976 I started as a computer scientist at the Max Planck Institute for Plasma Physics in Munich, developing my first FORTRAN program for solving the magnetohydrodynamic plasma equations on a 3-MFLOPS IBM 360/91. Three years later, at the German Aerospace Center (DLR) in Göttingen, we got DLR’s first Cray-1S, which marked my entry into vector computing and the vectorization of numerical algorithms. In 1980, our team broke the 50-MFLOPS barrier, a speedup of 20 over DLR’s IBM 3081 mainframe, with fluid dynamics simulations of a nonlinear convective flow and a direct Monte Carlo simulation of the von Kármán vortex street. To reach that level of performance, however, we had to modify several numerical algorithms and hand-vectorize and optimize compute-intensive subroutines, which took us several troublesome months. Back then, HPC was for experts only.

Ubiquitous computing – Xerox PARC’s Mark Weiser

In what follows, ‘ubiquitous’ means everywhere, omnipresent, pervasive, universal, and all-over. Here I’d like to quote the great Mark Weiser from Xerox PARC, who wrote in 1988:

“Ubiquitous computing names the third wave in computing, just now beginning. First were mainframes, each shared by lots of people. Now we are in the personal computing era, person and machine staring uneasily at each other across the desktop. Next comes ubiquitous computing, or the age of calm technology, when technology recedes into the background of our lives.” — Mark Weiser, 1988.

Weiser clearly looks at ‘ubiquitous computing’ through the eyes of the end users, engineers, and scientists. According to Weiser, users shouldn’t need to care about the ‘engine’ under the hood; all they care about is ‘driving’ safely, reliably, and easily from A to B: getting in the car, starting the engine, pulling out into traffic. Anybody should be able to do that, anywhere, anytime.

Towards ubiquitous high-performance computing

HPC hardware and software today are immensely complex, and their interaction is highly sophisticated. For (high-performance) computing to become ubiquitous, Weiser suggested, it has to disappear into the background of our (business) lives, at least from the end user’s point of view. In the last decade, we took a big step towards this goal: through server virtualization, we abstracted the application layer from the physical architecture underneath. This achievement brought great benefits for IT and for end users alike: faster server provisioning, better security, less hardware vendor lock-in, higher uptime, improved disaster recovery, isolation of applications, a longer life for older applications, and an easier path to the cloud. With server virtualization, we already came quite close to ubiquitous computing.

Ubiquitous HPC – with HPC software containers

However, server virtualization never gained a significant foothold in HPC, particularly for highly parallel applications that require low-latency, high-bandwidth inter-process communication. Additionally, on multi-tenant HPC servers, several VMs competing with each other for hardware resources such as I/O, memory, and network can further slow down application performance.

Because VMs failed to gain a strong presence in HPC, the challenges of software distribution, administration, and maintenance kept HPC systems locked away in closets, available only to a select few HPC experts.

That changed in 2013, when Docker Linux containers were first introduced. The key practical difference between Docker and VMs is that Docker is a Linux-based system that uses a userspace interface to the Linux kernel’s containment features. Another difference is that, rather than being a self-contained system in its own right (like a VM), a Docker container shares the Linux kernel with the host machine’s operating system, and with all other containers running on that host. This makes Docker containers extremely lightweight and, in principle, well suited for HPC.
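The kernel sharing described above is easy to observe. A minimal sketch, saved under a hypothetical name such as `kernel_check.py`, prints the kernel release the running process sees. Run once directly on a Linux host and once inside a Docker container on that same host, it should report the same release string, because the container boots no kernel of its own; a VM would instead report its guest kernel. This assumes a native Linux Docker host: Docker Desktop on macOS or Windows runs containers inside a Linux VM, so the release reported there belongs to that VM.

```python
import platform


def kernel_release() -> str:
    """Return the release string of the kernel this process is running on.

    Inside a container this is the host's kernel, because containers
    share the host kernel rather than booting their own.
    """
    return platform.release()


if __name__ == "__main__":
    # Compare this output on the host and inside a container on the same host.
    print(f"kernel release: {kernel_release()}")
```

For example, compare `python3 kernel_check.py` on the host with `docker run --rm -v "$PWD":/work python:3 python /work/kernel_check.py`; the image tag here is just one convenient choice.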

Still, it took us at UberCloud about a year to develop our first production-ready, macro-service counterpart for HPC based on micro-service Docker container technology: building it layer by layer, then enhancing and testing it with dozens of CAE applications and complex engineering workflows on about a dozen different single- and multi-node HPC cloud resources.

These high-performance interactive software containers, whether on-premise or on public or private clouds, bring a number of key benefits to otherwise traditional HPC environments, with the goal of making HPC widely available, that is, ubiquitous:

Packageability: bundle applications together with libraries and configuration files

A container image bundles libraries and tools as well as the application code and data, and the configuration these components need to work together seamlessly. There is no need to install software or tools on the host compute environment, since the ready-to-run container image contains all the required components. Library dependencies, version conflicts, and configuration problems all disappear, as does much of the massively duplicated effort across our community to deploy HPC software. Reducing that duplication is one of the major goals of the OpenHPC initiative, and it is something HPC containers can handle.
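Because everything the application needs ships inside the image, a container can verify its own completeness at start-up, independently of which host it lands on. A minimal sketch of such a check, assuming Python is present in the image; the function name and the dependency list are hypothetical, and `ctypes.util.find_library` relies on `ldconfig` or a compiler toolchain being available, so it is a rough check rather than a guarantee:

```python
from ctypes.util import find_library


def missing_libraries(names: list[str]) -> list[str]:
    """Return the shared libraries from `names` that cannot be located.

    `names` are short library names as accepted by ctypes.util.find_library,
    e.g. "m" for libm or "z" for libz.
    """
    return [name for name in names if find_library(name) is None]


if __name__ == "__main__":
    # Hypothetical dependency list for a solver bundled in the image.
    required = ["m", "z", "gfortran"]
    missing = missing_libraries(required)
    if missing:
        raise SystemExit(f"image is incomplete, missing: {', '.join(missing)}")
    print("all bundled libraries found")
```

Failing fast with a clear message keeps a broken image from reaching the cluster, instead of surfacing as an obscure loader error halfway through a job.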

Portability: build container images once, deploy them rapidly in various infrastructures

Having a single container image makes it easy for the HPC workload to be rapidly deployed and easily moved from host to host, between development and production environments, and to other computing facilities. The container allows the end user to select the appropriate environment such as a public cloud, a private cloud, or an on-premise HPC cluster. There is no need to install new components or perform setup steps when using another host.

Accessibility: bundle tools such as SSH into the container for easy access

The container is set up to provide easy access via tools such as VNC for remote desktop sharing. In addition, containers running on compute nodes give end users and administrators a consistent implementation regardless of the underlying compute environment.

Usability: provide familiar user interfaces and user tools with the application

The container holds only the components required to run the application. By eliminating other tools and middleware, the work environment is simplified and usability is improved. The ability to provide a full-featured desktop increases usability (especially for pre- and post-processing steps) and reduces the need for training. Further, HPC containers can be used together with a resource manager such as Slurm or Grid Engine, increasing usability even further by eliminating many administration tasks. Ease of use can be increased further still with tools such as DCV from NICE Software, which provides and accelerates remote visualization.

In addition, the lightweight nature of the HPC container suggests low performance overhead. Our own performance tests with different real applications on several multi-host, multi-container HPC systems demonstrate that there is no significant overhead in running high-performance workloads in an HPC container.
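A statement like "no significant overhead" rests on comparing wall-clock times for the same case run on bare metal and in a container. A minimal sketch of that comparison, assuming several repeated timings per configuration; the function name and the example timings are illustrative, not UberCloud's measurements. Medians are used to damp run-to-run noise:

```python
from statistics import median


def relative_overhead(bare_metal_s: list[float], container_s: list[float]) -> float:
    """Percent slowdown of container runs relative to bare-metal runs.

    Medians are used so that a single outlier run (e.g. a noisy neighbour
    or a cold file cache) does not dominate the comparison.
    """
    if not bare_metal_s or not container_s:
        raise ValueError("need at least one timing per configuration")
    baseline = median(bare_metal_s)
    return (median(container_s) - baseline) * 100.0 / baseline


if __name__ == "__main__":
    # Illustrative wall-clock times in seconds, not measured data.
    bare = [100.0, 99.0, 101.0]
    cont = [102.0, 101.0, 103.0]
    print(f"overhead: {relative_overhead(bare, cont):.1f}%")  # overhead: 2.0%
```

A negative result means the containerized runs were faster; whether a given percentage counts as "significant" depends on the spread between repeated runs of the same configuration.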

HPC container case studies

To illustrate UberCloud’s success, I would like to present two recent HPC cloud case studies that used software containers for engineering and scientific simulations. Both can be found in the September UberCloud Voice newsletter.

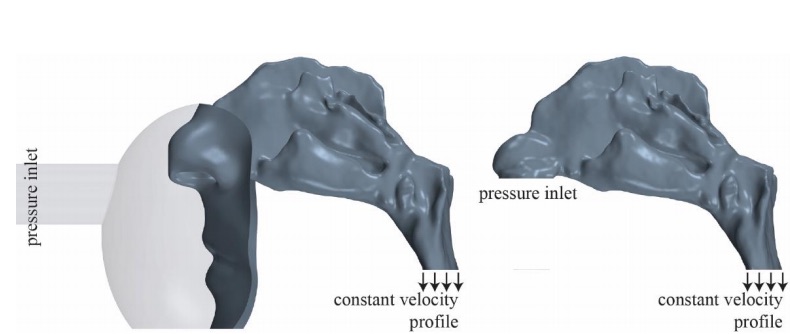

In case study 190 of the UberCloud Experiment, sponsored by CD-adapco, Microsoft Azure, Hewlett-Packard Enterprise, and Intel, Jan Brüning and colleagues from Charité Universitätsmedizin Berlin simulated the airflow within the nasal cavity of a patient without impaired nasal breathing, using CD-adapco’s STAR-CCM+ software container on a dedicated compute cluster in the Microsoft Azure cloud. Because the mesh resolutions reported as necessary in the literature vary significantly (1 to 8 million cells), the researchers decided to perform a mesh independence study. Additionally, two different inflow models were tested. However, the main focus of this study was the usability of cloud-based HPC for the numerical assessment of nasal breathing.

Figure 1: Case study 190 – computational model (left) with part of the face and a simplified mask attached to the nasal cavity, and wall shear stress distribution (right) at the nasal cavity’s wall.

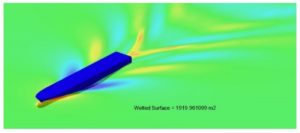

In case study 191 of the UberCloud Experiment, sponsored by CPU 24/7, Hewlett-Packard Enterprise, Intel, and NUMECA, Vassilios Zagkas and Ioannis Andreou from SimFWD Engineering Services in Athens, Greece, calculated the bare-hull resistance of a modern Ropax hull form. The main goal was to assess the potential for reducing the hull’s resistance through minor alterations. The project also aimed to let an entry-level engineer become familiar with the mechanics of running a NUMECA FINE/Marine simulation in an UberCloud software container, and to assess the performance of the cloud compared to the resources the end user currently works with. The benchmark was analyzed on local hardware, on virtual instances in the cloud, and on the bare-metal container cloud solution offered by cloud provider CPU 24/7 and UberCloud.

Figure 2: Case study 191 – wave elevation comparison at 22 knots, view from below.

During the past three years, UberCloud has successfully built HPC containers for ANSYS, CD-adapco, COMSOL, NICE, NUMECA, OpenFOAM, PSPP, Red Cedar, Scilab, Gromacs, and others. These application containers now run on cloud resources from Advania, Amazon AWS, CPU 24/7, Microsoft Azure, Nephoscale, OzenCloud, and other cloud and in-house resources.

The advent of lightweight, pervasive, packageable, portable, scalable, and interactive HPC application containers that are easy to access and use, based on Docker technology and running seamlessly on workstations, servers, and clouds, is bringing us ever closer to the ‘democratization of HPC.’ Together with recent advances and trends in application software and high-performance hardware technologies, we are approaching the age of Ubiquitous High-Performance Computing, where HPC ‘recedes into the background of our lives.’

Wolfgang Gentzsch is President and Co-Founder of UberCloud and a consultant for high-performance, technical, and cloud computing. He was the Chairman of the Intl. ISC Cloud Conference Series, an Advisor to the EU projects DEISA and EUDAT, directed the German D-Grid Initiative, was a Director of the Open Grid Forum, Managing Director of the North Carolina Supercomputer Center (MCNC), and a member of the US President’s Council on Science & Technology (PCAST). He was also a co-founder and president of Gridware, which was acquired by Sun Microsystems in 2000 for its Sun Grid Engine HPC cluster workload management system.

This story appears here as part of a cross-publishing agreement with Scientific Computing World.