“The world’s first GPU design for inferencing.” – Jen-Hsun introduces Tesla P40 for deep learning at GTC China.

NVIDIA’s GPU platforms have long been used on the training side of the deep learning equation. Today the company announced two new Pascal-based GPUs, the Tesla P4 and Tesla P40, tailor-made for the inferencing side of deep learning workloads.

“With the Tesla P100 and now Tesla P4 and P40, NVIDIA offers the only end-to-end deep learning platform for the data center, unlocking the enormous power of AI for a broad range of industries,” said Ian Buck, general manager of accelerated computing at NVIDIA. “They slash training time from days to hours. They enable insight to be extracted instantly. And they produce real-time responses for consumers from AI-powered services.”

Modern AI services such as voice-activated assistance, email spam filters, and movie and product recommendation engines are rapidly growing in complexity, requiring up to 10x more compute than neural networks from a year ago. Current CPU-based technology cannot deliver the real-time responsiveness these services require, which leads to a poor user experience.

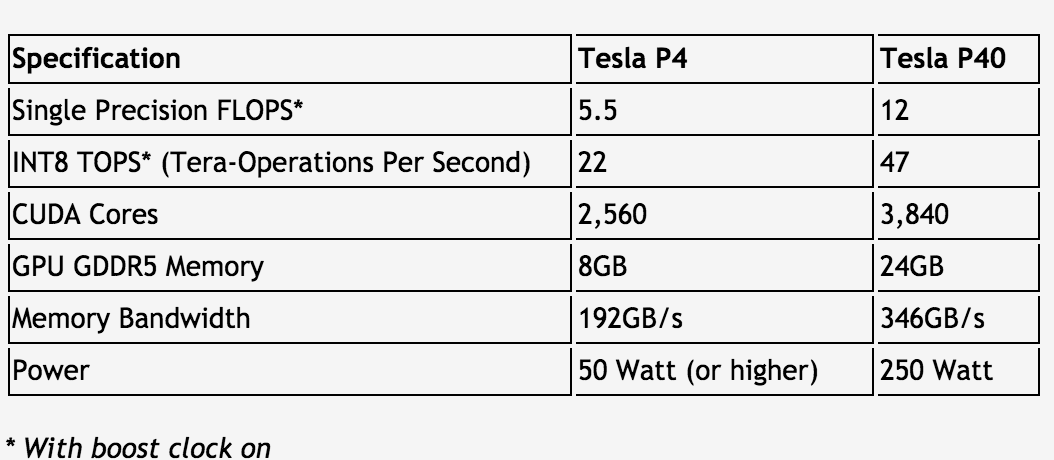

The Tesla P4 and P40 are specifically designed for inferencing, which uses trained deep neural networks to recognize speech, images or text in response to queries from users and devices. Based on the Pascal architecture, these GPUs feature specialized inference instructions based on 8-bit (INT8) operations, delivering 45x faster response than CPUs and a 4x improvement over GPU solutions launched less than a year ago.

The Tesla P4 delivers the highest energy efficiency for data centers. It fits in any server with its small form-factor and low-power design, which starts at 50 watts, helping make it 40x more energy efficient than CPUs for inferencing in production workloads. A single server with a single Tesla P4 replaces 13 CPU-only servers for video inferencing workloads, delivering over 8x savings in total cost of ownership, including server and power costs.

The Tesla P40 delivers maximum throughput for deep learning workloads. With 47 tera-operations per second (TOPS) of inference performance with INT8 instructions, a server with eight Tesla P40 accelerators can replace the performance of more than 140 CPU servers. At approximately $5,000 per CPU server, this results in savings of more than $650,000 in server acquisition cost.
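The server-cost arithmetic above can be checked with a short sketch. The server count, per-server price, and savings figure are the ones quoted in this article. The article does not state the price of the eight-GPU server, so the code only derives the rough gap between the CPU fleet cost and the quoted savings; reading that gap as the room left for the GPU server is an assumption, and all names are illustrative.

```python
# Figures quoted in the article (both "more than" values are lower bounds).
CPU_SERVERS_REPLACED = 140    # "more than 140 CPU servers"
CPU_SERVER_PRICE_USD = 5_000  # "approximately $5,000 per CPU server"
QUOTED_SAVINGS_USD = 650_000  # "more than $650,000"


def cpu_fleet_cost(servers: int, price_usd: int) -> int:
    """Acquisition cost of the CPU-only fleet being replaced."""
    return servers * price_usd


def implied_gap(fleet_cost_usd: int, savings_usd: int) -> int:
    """Difference between fleet cost and quoted savings.

    Assumption: this gap is roughly what remains to pay for the
    GPU-accelerated server. The article does not say so explicitly.
    """
    return fleet_cost_usd - savings_usd


fleet = cpu_fleet_cost(CPU_SERVERS_REPLACED, CPU_SERVER_PRICE_USD)
gap = implied_gap(fleet, QUOTED_SAVINGS_USD)
print(f"CPU fleet cost: ${fleet:,}")
print(f"Gap to quoted savings: ${gap:,}")
```

Because both quoted figures are approximate bounds, the result is an order-of-magnitude check and not a price for any particular server.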

Software Tools for Faster Inferencing

Complementing the Tesla P4 and P40 are two software innovations to accelerate AI inferencing: NVIDIA TensorRT and the NVIDIA DeepStream SDK.

TensorRT is a library created for optimizing deep learning models for production deployment that delivers instant responsiveness for the most complex networks. It maximizes throughput and efficiency of deep learning applications by taking trained neural nets — defined with 32-bit or 16-bit operations — and optimizing them for reduced precision INT8 operations.
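To make the idea of reduced-precision INT8 operations concrete, here is a minimal sketch of symmetric linear quantization in plain Python. It is a generic textbook technique and not TensorRT's API or its calibration method, which this article does not describe. The function names and sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale.

    Generic symmetric quantization; NOT how TensorRT necessarily does it.
    """
    max_abs = max(abs(v) for v in values)
    # Avoid division by zero when every value is 0.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the INT8 representation."""
    return [q * scale for q in quantized]


# Hypothetical 32-bit weights from a trained layer.
weights = [0.5, -1.27, 0.02, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print("int8 values:", q)
print("scale:", scale)
print("max round-trip error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each value is stored in 8 bits instead of 32, at the cost of a rounding error of at most half the scale per value. Production tools choose the scale more carefully to limit the accuracy loss.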

NVIDIA DeepStream SDK taps into the power of a Pascal server to simultaneously decode and analyze up to 93 HD video streams in real time, compared with seven streams with dual CPUs. This addresses one of the grand challenges of AI: understanding video content at scale for applications such as self-driving cars, interactive robots, filtering and ad placement. Integrating deep learning into video applications allows companies to offer smart, innovative video services that were previously impossible to deliver.

NVIDIA customers are delivering increasingly innovative AI services that require the highest compute performance.

“Delivering simple and responsive experiences to each of our users is very important to us,” said Greg Diamos, senior researcher at Baidu. “We have deployed NVIDIA GPUs in production to provide AI-powered services such as our Deep Speech 2 system and the use of GPUs enables a level of responsiveness that would not be possible on un-accelerated servers. Pascal with its INT8 capabilities will provide an even bigger leap forward and we look forward to delivering even better experiences to our users.”

Availability

The NVIDIA Tesla P4 and P40 are planned to be available in November and October, respectively, in qualified servers offered by ODM, OEM and channel partners.

More details on performance claims are available in the release footnotes.