Researchers at the Department of Energy’s SLAC National Accelerator Laboratory are playing key roles in two recently funded computing projects with the goal of developing cutting-edge scientific applications for future exascale supercomputers that can perform at least a billion billion computing operations per second – 50 to 100 times more than the most powerful supercomputers in the world today.

The first project, led by SLAC, will develop computational tools to quickly sift through enormous piles of data produced by powerful X-ray lasers. The second project, led by DOE’s Lawrence Berkeley National Laboratory (Berkeley Lab), will reengineer simulation software for a potentially transformational new particle accelerator technology, called plasma wakefield acceleration.

The projects, which will each receive $10 million over four years, are among 15 fully funded application development proposals and seven proposals selected for seed funding by the DOE’s Exascale Computing Project (ECP). The ECP is part of President Obama’s National Strategic Computing Initiative and intends to maximize the benefits of high-performance computing for U.S. economic competitiveness, national security and scientific discovery.

“Many of our modern experiments generate enormous quantities of data,” says Alex Aiken, professor of computer science at Stanford University and director of the newly formed SLAC Computer Science division, who is involved in the X-ray laser project. “Exascale computing will create the capabilities to handle unprecedented data volumes and, at the same time, will allow us to solve new, more complex simulation problems.”

Analyzing ‘Big Data’ from X-ray Lasers in Real Time

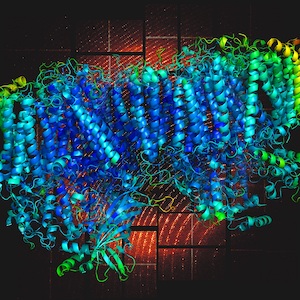

X-ray lasers, such as SLAC’s Linac Coherent Light Source (LCLS), have proven to be extremely powerful “microscopes” capable of glimpsing some of nature’s fastest and most fundamental processes on the atomic level. Researchers use LCLS, a DOE Office of Science User Facility, to create molecular movies, watch chemical bonds form and break, follow the path of electrons in materials and take 3-D snapshots of biological molecules that support the development of new drugs.

At the same time, X-ray lasers also generate enormous amounts of data. A typical experiment at LCLS, which fires 120 flashes per second, fills up hundreds of thousands of gigabytes of disk space. Analyzing such a data volume in a short amount of time is already very challenging. And this situation is set to become dramatically harder: The next-generation LCLS-II X-ray laser will deliver 8,000 times more X-ray pulses per second, resulting in a similar increase in data volumes and data rates. Estimates are that the data flow will greatly exceed a trillion data ‘bits’ per second and require hundreds of petabytes of online disk storage.
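As a rough back-of-the-envelope check on these figures, the following Python sketch works out the implied LCLS-II pulse rate and what a sustained flow of one trillion bits per second would add up to in a day. The function and constant names are purely illustrative, the one-trillion-bits figure is only the lower bound quoted above rather than a measured rate, and decimal units (1 petabyte = 10^15 bytes) are assumed.

```python
# Back-of-the-envelope arithmetic only; all names here are hypothetical.
# Decimal (SI) units are assumed: 1 petabyte = 1e15 bytes.
LCLS_PULSES_PER_SECOND = 120      # "fires 120 flashes per second"
LCLS_II_RATE_FACTOR = 8_000       # "8,000 times more X-ray pulses per second"
DATA_RATE_BITS_PER_SECOND = 1e12  # lower bound: "a trillion data bits per second"

SECONDS_PER_DAY = 24 * 60 * 60
BYTES_PER_PETABYTE = 1e15


def lcls_ii_pulses_per_second() -> int:
    """Pulse rate implied by scaling today's LCLS rate by the quoted factor."""
    return LCLS_PULSES_PER_SECOND * LCLS_II_RATE_FACTOR


def petabytes_per_day(bits_per_second: float) -> float:
    """Data volume accumulated in one day at a sustained bit rate."""
    bytes_per_second = bits_per_second / 8
    return bytes_per_second * SECONDS_PER_DAY / BYTES_PER_PETABYTE


print(lcls_ii_pulses_per_second())                   # 960000
print(petabytes_per_day(DATA_RATE_BITS_PER_SECOND))  # 10.8
```

Under these assumptions, LCLS-II would deliver close to a million pulses per second, and even the lower-bound data rate would produce roughly 10.8 petabytes per day of continuous running, so a few weeks at that rate would already reach the hundreds-of-petabytes scale mentioned above.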

As a result of the data flood even at today’s levels, researchers collecting data at X-ray lasers such as LCLS presently receive only very limited feedback regarding the quality of their data.

“This is a real problem because you might only find out days or weeks after your experiment that you should have made certain changes,” says Berkeley Lab’s Peter Zwart, one of the collaborators on the exascale project, who will develop computer algorithms for X-ray imaging of single particles. “If we were able to look at our data on the fly, we could often do much better experiments.”

Amedeo Perazzo, director of the LCLS Controls & Data Systems Division and principal investigator for this “ExaFEL” project, says, “We want to provide our users at LCLS, and in the future LCLS-II, with very fast feedback on their data so that they can make important experimental decisions in almost real time. The idea is to send the data from LCLS via DOE’s broadband science network ESnet to NERSC, the National Energy Research Scientific Computing Center, where supercomputers will analyze the data and send the results back to us – all of that within just a few minutes.” NERSC and ESnet are DOE Office of Science User Facilities at Berkeley Lab.

X-ray data processing and analysis is quite an unusual task for supercomputers. “Traditionally these high-performance machines have mostly been used for complex simulations, such as climate modeling, rather than processing real-time data,” Perazzo says. “So we’re breaking completely new ground with our project, and foresee a number of important future applications of the data processing techniques being developed.”

This project is enabled by the investments underway at SLAC to prepare for LCLS-II, with the installation of new infrastructure capable of handling these enormous amounts of data.

A number of partners will make additional crucial contributions.

“At Berkeley Lab, we’ll be heavily involved in developing algorithms for specific use cases,” says James Sethian, a professor of mathematics at the University of California, Berkeley, and head of Berkeley Lab’s Mathematics Group and the Center for Advanced Mathematics for Energy Research Applications (CAMERA). “This includes work on two different sets of algorithms. The first set, developed by a team led by Nick Sauter, consists of well-established analysis programs that we’ll reconfigure for exascale computer architectures, whose larger computer power will allow us to do better, more complex physics. The other set is brand new software for emerging technologies such as single-particle imaging, which is being designed to allow scientists to study the atomic structure of single bacteria or viruses in their living state.”

Data taken with the LCLS X-ray laser (pattern in background) provide unprecedented views of atomic structures and motions in complex molecules. (N. Sauter/Berkeley Lab)

The “ExaFEL” project led by Perazzo will take advantage of Aiken’s newly formed Stanford/SLAC team and will collaborate with researchers at Los Alamos National Laboratory to develop systems software that makes optimal use of the architecture of the new exascale computers.

“Supercomputers are very complicated, with millions of processors running in parallel,” Aiken says. “It’s a real computer science challenge to figure out how to use these new architectures most efficiently.”

Finally, ESnet will provide the necessary networking capabilities to transfer data between the LCLS and supercomputing resources. Until exascale systems become available in the mid-2020s, the project will use NERSC’s Cori supercomputer for its developments and tests.

Source: NERSC

They should use FPGAs to do their real-time calculations. FPGAs are being used at CERN and the SKA to do the initial processing on the raw data as it’s delivered. All the data gathered is integer and needs to be processed in a pipeline as it’s produced. FPGAs are perfect for that. Once the data is reduced and the information extracted, they can use traditional processing systems to do the science.