In this video from the DDN User Group at SC16, Pamela Hill, Computational & Information Systems Laboratory, NCAR/UCAR, presents: NCAR’s Evolving Infrastructure for Weather and Climate Research.

In this video from the DDN User Group at SC16, Pamela Hill, Computational & Information Systems Laboratory, NCAR/UCAR, presents: NCAR’s Evolving Infrastructure for Weather and Climate Research.

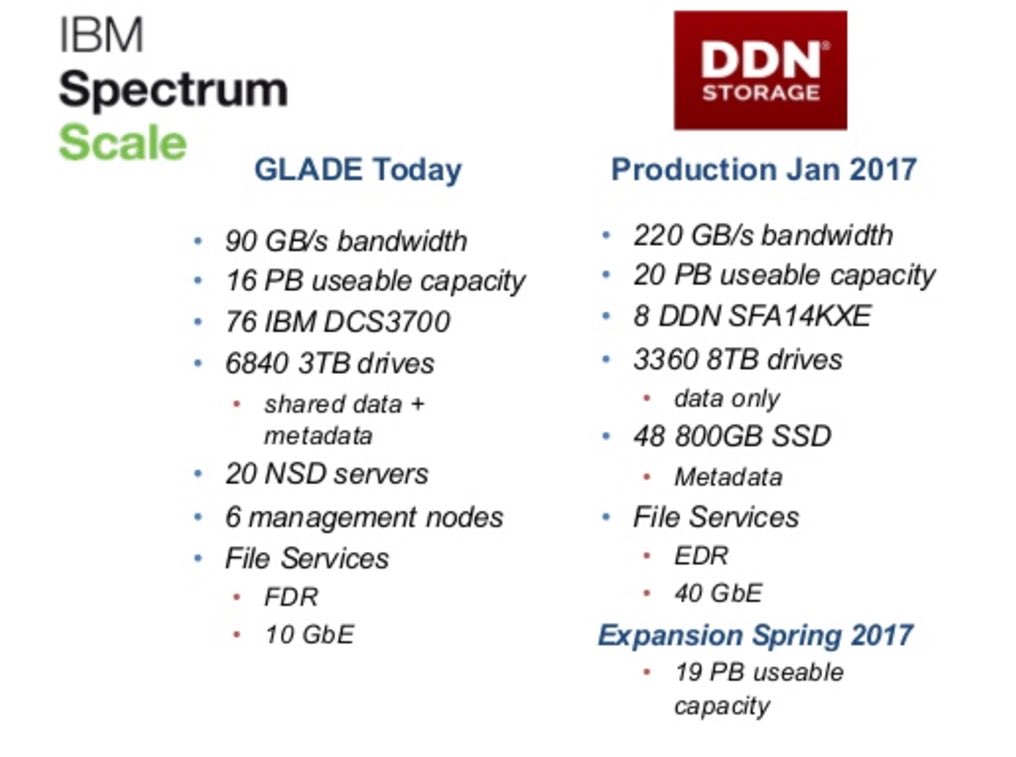

In January 2016, DDN announced that the National Center for Atmospheric Research (NCAR) has selected DDN’s new SFA14K high-performance hyper-converged storage platform to drive the performance and deliver the capacity needed for scientific breakthroughs in climate, weather and atmospheric-related science to power its “Cheyenne” supercomputer.

At NCAR/UCAR, the Globally Accessible Data Environment—a centralized file service known as GLADE—uses high-performance GPFS shared file system technology to give users a common view of their data across the HPC, analysis, and visualization resources that CISL manages. GLADE file spaces are intended as work areas for day-to-day tasks and are well suited for managing software projects, scripts, code, and data sets. They are available by default except for project spaces.

“Having a centralized, large-scale storage resource delivers a real benefit to our scientists,” said Anke Kamrath, director of the operations and services division at NCAR’s computing lab. “With the new system, we have a balance between capacity and performance so that researchers will be able to start looking at the model output immediately without having to move data around. Now, they’ll be able to get right down to the work of analyzing the results and figuring out what the models reveal.”

“Having a centralized, large-scale storage resource delivers a real benefit to our scientists,” said Anke Kamrath, director of the operations and services division at NCAR’s computing lab. “With the new system, we have a balance between capacity and performance so that researchers will be able to start looking at the model output immediately without having to move data around. Now, they’ll be able to get right down to the work of analyzing the results and figuring out what the models reveal.”

Whether it’s protecting aircraft from wind shear, investigating changes in the earth’s ozone layer, determining sunspot behavior or linking weather to factors that shape epidemics, NCAR is on the forefront of major climate and weather research. Simulations from the organization’s most advanced models are used to study past, present and future changes in weather patterns in richer detail and with greater accuracy. With each simulation generating hundreds of terabytes of output, data management challenges scale up rapidly.

With the game-changing SFA14K, NCAR now has the storage capacity and sustained compute performance to perform sophisticated modeling while substantially reducing workflow bottlenecks. As a result, the organization will be able to quickly process mixed I/O workloads while sharing up to 40 PBs of vital research data with a growing scientific community around the world.