This is the third entry in our series of features covering high performance system interconnect technology, HPC networking, and computing. This entry, like the rest of the series compiled in a complete Guide available here, focuses on HPC networking trends. The series also covers how to select an HPC technology and solution partner.

HPC Networking Trends in the TOP500

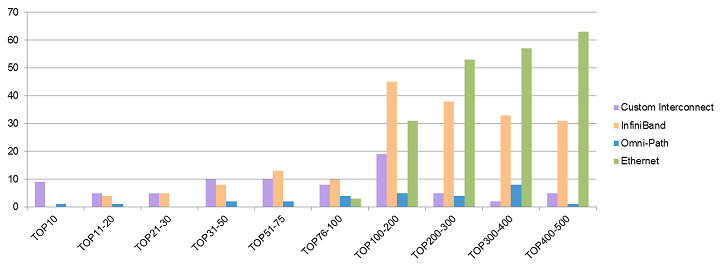

The TOP500 list is a good proxy for how different interconnect technologies are being adopted for the most demanding workloads, which makes it a useful leading indicator for enterprise adoption. Vendor-specific technologies have an exclusive presence in the world’s current TOP10 systems and share the TOP 11-50 with InfiniBand and Omni-Path (custom 50%, InfiniBand 43%, and Omni-Path 8%). InfiniBand and Ethernet are currently the most commonly deployed interconnects in the remaining TOP 51-500. Ethernet is still the most widely deployed network technology in the TOP500 overall, with 207 systems.

The world’s leading and most esoteric systems are currently dominated by vendor-specific technologies, which include Intel Omni-Path, the new pretender to the throne. InfiniBand and Ethernet currently account for 79% of the world’s fastest and most demanding systems, but Intel Omni-Path is likely to change that market dynamic.

TOP500 HPI Distribution (Nov 2016)

OrionX analysis of TOP500 data from Nov 2016

The essential takeaway is that the world’s leading and most esoteric systems are currently dominated by vendor-specific technologies. Beyond the sparsely populated domain of the TOP10, the standardized high performance interconnect (HPI) technologies of InfiniBand and Ethernet currently account for 79% of the world’s fastest and most demanding systems. InfiniBand accounts for 37% of the current TOP500 list, and those systems are fairly evenly distributed across it. While Ethernet systems account for 41% of the TOP500, they are concentrated in the less demanding environments found below the TOP100, primarily in the industry segment.
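As a quick sanity check on the shares quoted above, the arithmetic can be sketched in a few lines of Python. This is only an illustration: the function name `share_percent` is hypothetical, the Ethernet count of 207 and the list size of 500 come from the article, and the InfiniBand figure is used as the rounded 37% because the article does not give a system count for it.

```python
def share_percent(count: int, total: int = 500) -> float:
    """Return `count` as a percentage of `total` (the TOP500 has 500 entries)."""
    return 100.0 * count / total


# Ethernet: the article cites 207 systems in the Nov 2016 list.
ethernet_share = share_percent(207)

# InfiniBand: only a rounded percentage is given in the article,
# so it is used as-is rather than derived from a system count.
infiniband_share = 37.0

# Combined share of the two standardized interconnects.
combined = ethernet_share + infiniband_share

print(f"Ethernet share: {ethernet_share:.1f}%")
print(f"InfiniBand + Ethernet: roughly {combined:.0f}%")
```

Because one of the two inputs is already rounded, the combined figure should be read as approximate; a difference of about one percentage point from the 79% quoted above is what rounding alone would be expected to produce.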

Although Intel Omni-Path is primarily derived from several established technologies, it is not delivered to the market as a formal standard. Intel’s route to market is clearly through an extensive partner distribution model, which gives it the opportunity to establish Omni-Path as a significant de facto standard and to redefine market adoption trends through end-to-end architectural integration. In that sense, Intel Omni-Path, InfiniBand, and Ethernet can be expected to become the primary and most widely adopted HPI technologies for the foreseeable future.

Integration through Standards and the OpenFabrics Alliance

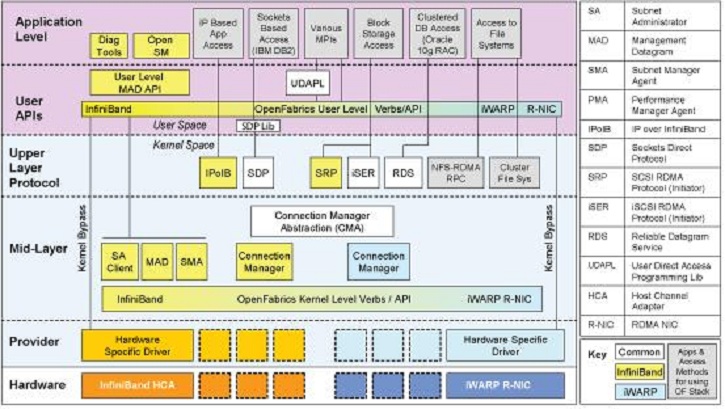

The OpenFabrics Alliance (OFA) will be increasingly important in the coming years as a forum that brings together the leading high performance interconnect vendors and technologies. Founded in 2004 as the OpenIB Alliance, it was originally focused on developing a vendor-independent, Linux-based InfiniBand software stack.

Since then the organization has expanded its charter to include support for iWARP and RoCE, along with the OpenFabrics Interfaces working group, which investigates and incorporates support for other high performance networks. Today, the vision of the OpenFabrics Alliance is to deliver a unified, cross-platform, transport-independent software stack for RDMA and kernel bypass. The goal is to let users run their applications agnostically over InfiniBand, iWARP, RoCE, or other fabrics, including Intel’s Omni-Path Architecture (OPA), through the OpenFabrics Software (OFS) open source offering.

The OpenFabrics Enterprise Distribution (OFED™)/OpenFabrics Software

Over the next few weeks we will dive into the following additional topics on HPC Networking:

- Special Report on Top Trends in HPC Networking

- High Performance System Interconnect Technology

- Selecting HPC Network Technology

- The Dell EMC HPC Innovation Lab

If you prefer, you can download the complete report, A Trusted Approach for High Performance Networking, courtesy of Dell EMC and Intel.