Gary Grider, LANL

In this video from the Storage Developer Conference, Gary Grider from LANL presents: MarFS – A Scalable Near-POSIX File System over Cloud Objects for HPC Cool Storage.

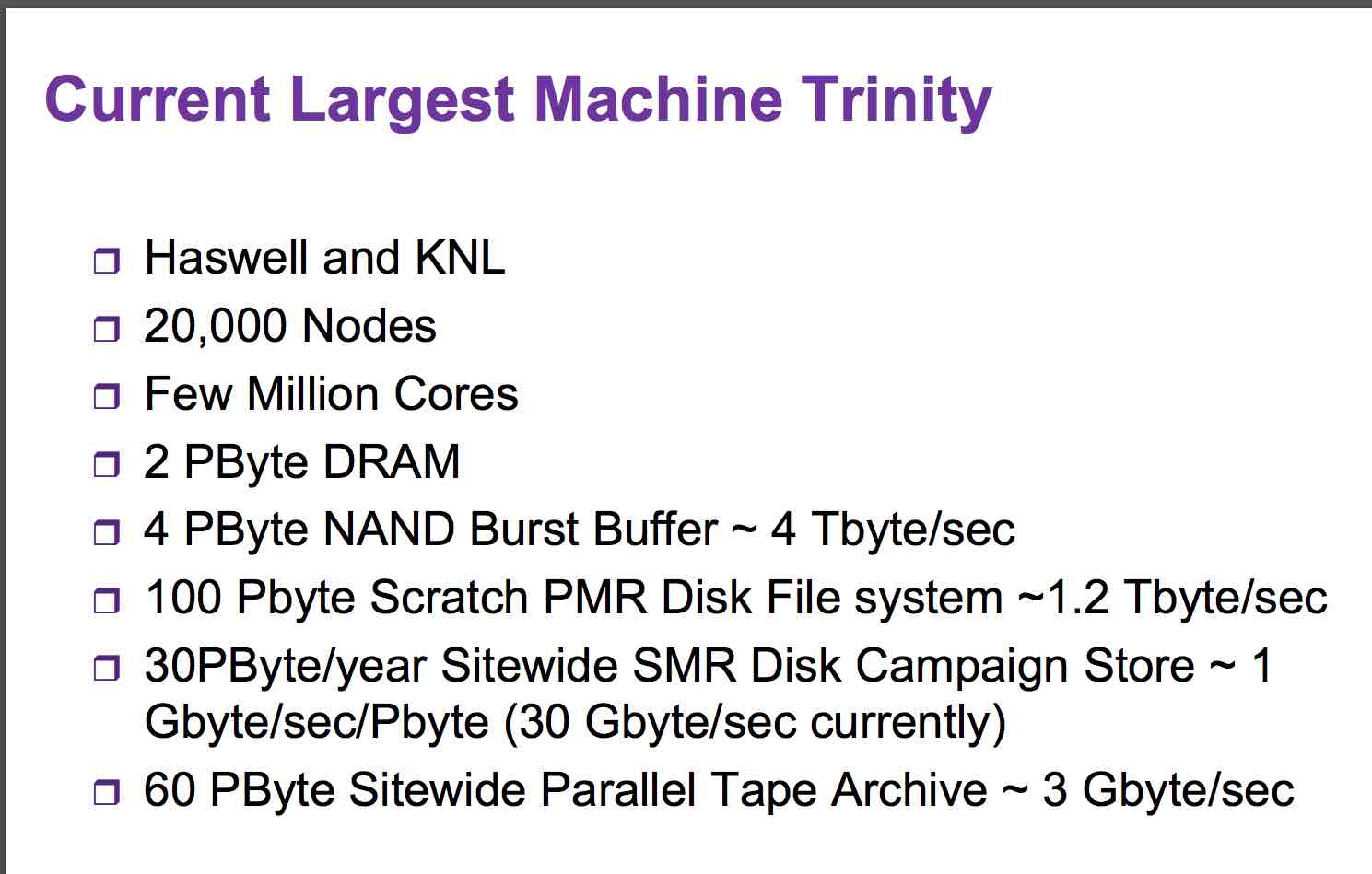

“Many computing sites need long-term retention of mostly cold data, often ‘data lakes’. The main function of this storage tier is capacity, but non-trivial bandwidth/access requirements exist. For many years, tape was the best economic solution. Data sets have grown larger more quickly than tape bandwidth improvements, and access demands have increased in the HPC environment. Disk can be more economical for this storage tier. The Cloud Community has moved towards erasure-based object stores to gain scalability and durability using commodity hardware. The Object Interface works for new applications, but legacy applications utilize POSIX for their interface. MarFS is a Near-POSIX File System using cloud storage for data and many POSIX file systems for metadata. Extreme HPC environments require that MarFS scale POSIX namespace metadata to trillions of files and billions of files in a single directory while storing the data in efficient, massively parallel ways in industry-standard, erasure-protected, cloud-style object stores.”

Gary Grider is the Deputy Division Leader of the High Performance Computing (HPC) Division at Los Alamos National Laboratory, where he is responsible for managing the personnel and processes required to stand up and operate major supercomputing systems, networks, and storage systems for the Laboratory for both the DOE/NNSA Advanced Simulation and Computing (ASC) program and LANL institutional HPC environments. One of his main tasks is conducting and sponsoring R&D to keep the new technology pipeline full and provide solutions to problems in the Lab’s HPC environment. Gary is also the LANL lead in coordinating DOE/NNSA alliances with universities in the HPC I/O and file systems area. He is one of the principal leaders of a small group of multi-agency HPC I/O experts that guide the government in its I/O-related computer science R&D investments through the High End Computing Interagency Working Group (HECIWG), and is the Director of the Los Alamos/UCSC Institute for Scientific Scalable Data Management and the Los Alamos/CMU Institute for Reliable High Performance Information Technology. He is also the LANL PI for the Petascale Data Storage Institute, a SciDAC2 Institute award-winning project. Before working for Los Alamos, Gary spent 10 years with IBM at Los Alamos working on advanced product development and test, and 5 years with Sandia National Laboratories working on HPC storage systems.

In related news, Gary Grider will present at the StartupHPC Conference on Nov. 13 in Salt Lake City.