Today the good folks at the Google Cloud Platform announced the availability of NVIDIA GPUs for multiple geographies. Cloud GPUs can accelerate workloads such as machine learning training and inference, geophysical data processing, simulation, seismic analysis, molecular modeling, genomics and many more high performance compute use cases.

Today, we’re happy to make some massively parallel announcements for Cloud GPUs. First, Google Cloud Platform (GCP) gets another performance boost with the public launch of NVIDIA P100 GPUs in beta. Second, NVIDIA K80 GPUs are now generally available on Google Compute Engine. Third, we’re happy to announce the introduction of sustained use discounts on both the K80 and P100 GPUs.

The NVIDIA Tesla P100 is the state of the art in GPU technology. Because it is based on the Pascal GPU architecture, you can increase throughput with fewer instances while saving money. P100 GPUs can accelerate your workloads by up to 10x compared to K80.

Compared to traditional solutions, Cloud GPUs provide an unparalleled combination of flexibility, performance and cost-savings:

- Flexibility: Google’s custom VM shapes and incremental Cloud GPUs provide the ultimate amount of flexibility. Customize the CPU, memory, disk and GPU configuration to best match your needs.

- Fast performance: Cloud GPUs are offered in passthrough mode to provide bare-metal performance. Attach up to 4 P100 or 8 K80 GPUs per VM (Google offers up to 4 K80 boards, each with 2 GPUs). For those looking for higher disk performance, optionally attach up to 3 TB of Local SSD to any GPU VM.

- Low cost: With Cloud GPUs you get the same per-minute billing and Sustained Use Discounts that you do for the rest of GCP’s resources. Pay only for what you need.

- Cloud integration: Cloud GPUs are available at all levels of the stack. For infrastructure, Compute Engine and Google Container Engine allow you to run your GPU workloads with VMs or containers. For machine learning, Cloud Machine Learning can optionally be configured to use GPUs to reduce the time it takes to train your models at scale with TensorFlow.
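
To make the per-VM limits above concrete, here is a minimal sketch of a pre-flight check for a planned GPU VM. The limits (4 P100, 8 K80, 3 TB of Local SSD) come from this post; the function name `check_gpu_vm` and its parameters are hypothetical and are not part of any Google Cloud API.

```python
# Per-VM limits quoted in this post. Everything else is illustrative.
MAX_GPUS_PER_VM = {"P100": 4, "K80": 8}
MAX_LOCAL_SSD_TB = 3


def check_gpu_vm(gpu_type, gpu_count, local_ssd_tb=0.0):
    """Return a list of problems with a planned GPU VM (empty if none).

    Hypothetical helper: it only checks the limits stated in the post
    and does not talk to any cloud service.
    """
    problems = []

    limit = MAX_GPUS_PER_VM.get(gpu_type)
    if limit is None:
        problems.append(f"unknown GPU type: {gpu_type}")
    elif not 1 <= gpu_count <= limit:
        problems.append(f"{gpu_type} count must be between 1 and {limit}")

    if not 0 <= local_ssd_tb <= MAX_LOCAL_SSD_TB:
        problems.append(f"Local SSD must be between 0 and {MAX_LOCAL_SSD_TB} TB")

    return problems


if __name__ == "__main__":
    print(check_gpu_vm("P100", 4, local_ssd_tb=3))  # within the stated limits
    print(check_gpu_vm("K80", 9))                   # one GPU too many
```

A check like this is only a convenience for catching obvious mistakes early; the platform itself remains the authority on what a given zone and machine type allow.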

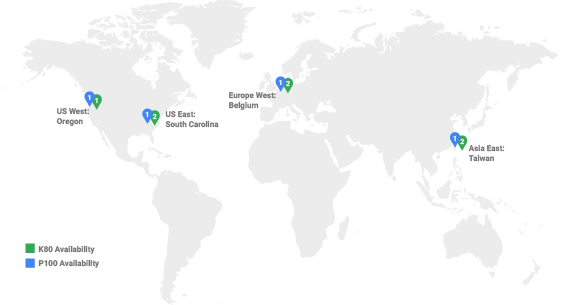

With today’s announcement, you can now deploy both the NVIDIA Tesla P100 and K80 GPUs in four regions worldwide. All of our GPUs can now take advantage of sustained use discounts, which automatically lower the price of your virtual machines (by up to 30%) when you use them to run sustained workloads. No lock-in or upfront minimum fee commitments are needed to take advantage of these discounts.
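
As a rough illustration of how an "up to 30%" discount can arise, the sketch below assumes a tiered schedule in which each successive quarter of the month is billed at 100%, 80%, 60% and 40% of the base rate. That schedule is an assumption for illustration and is not stated in this post; check the current pricing documentation before relying on it. The names `TIER_MULTIPLIERS`, `billed_fraction` and `discount` are hypothetical.

```python
# Assumed schedule (not stated in the post): each quarter of the month
# is billed at a decreasing fraction of the base rate.
TIER_MULTIPLIERS = (1.0, 0.8, 0.6, 0.4)
TIER_WIDTH = 0.25  # each tier covers a quarter of the month


def billed_fraction(usage_fraction):
    """Cost of running for `usage_fraction` of a month, expressed as a
    fraction of the undiscounted full-month price."""
    if not 0.0 <= usage_fraction <= 1.0:
        raise ValueError("usage_fraction must be between 0 and 1")
    billed = 0.0
    for index, multiplier in enumerate(TIER_MULTIPLIERS):
        tier_start = index * TIER_WIDTH
        # Portion of the usage that falls inside this tier.
        used_in_tier = min(max(usage_fraction - tier_start, 0.0), TIER_WIDTH)
        billed += used_in_tier * multiplier
    return billed


def discount(usage_fraction):
    """Effective discount relative to paying the base rate for the same usage."""
    if usage_fraction == 0:
        return 0.0
    return 1.0 - billed_fraction(usage_fraction) / usage_fraction


if __name__ == "__main__":
    for fraction in (0.25, 0.5, 0.75, 1.0):
        print(f"{fraction:.0%} of the month -> {discount(fraction):.0%} discount")
```

Under this assumed schedule, a VM that runs for the whole month is billed 0.25 × (1.0 + 0.8 + 0.6 + 0.4) = 0.7 of the base price, which matches the 30% maximum quoted above, while a VM that runs for a quarter of the month or less gets no discount.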

Since launching GPUs, Google has seen customers benefit from the extra computation they provide to accelerate workloads ranging from genomics and computational finance to training and inference on machine learning models.

“For certain tasks, NVIDIA GPUs are a cost-effective and high-performance alternative to traditional CPUs,” said Ben Belchak from Shazam, an early adopter of GPUs on GCP to power their music recognition service. “They work great with Shazam’s core music recognition workload, in which we match snippets of user-recorded audio fingerprints against our catalog of over 40 million songs. We do that by taking the audio signatures of each and every song, compiling them into a custom database format and loading them into GPU memory. Whenever a user Shazams a song, our algorithm uses GPUs to search that database until it finds a match. This happens successfully over 20 million times per day.”