Fans of open source Machine Learning got a boost today with the launch of an Exascale Visual AI initiative to sequence Visual DNA from images using Volume Learning to enable new applications.

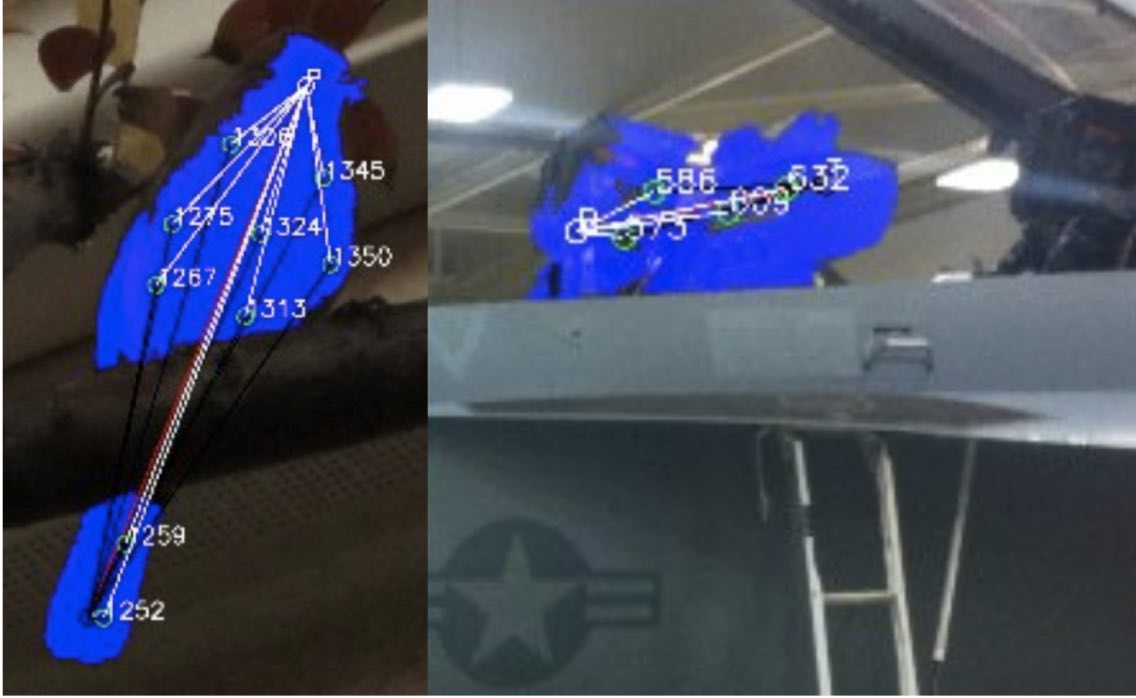

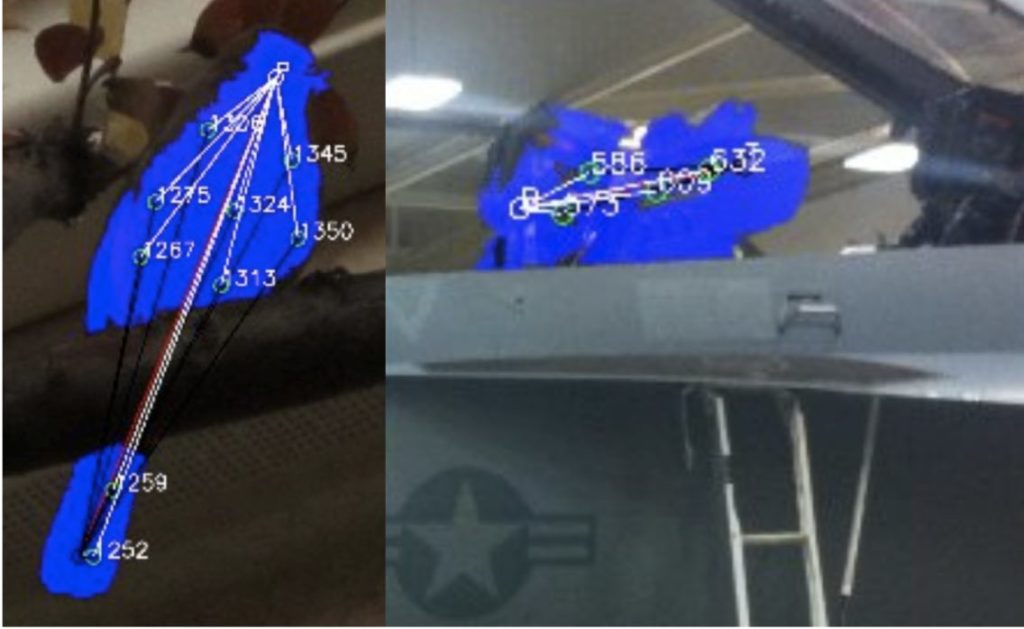

The OpenVDNA project provides public resources for visual AI solutions, including a visual DNA catalog, a visual AI search engine, and a visual AI toolkit. Visual DNA are taken from the thousands of small pieces that make up the fabric of an image. Each visual DNA puzzle piece is analyzed and compared using over 16,000 feature metrics in four primary bases: Color, Shape, Texture and Glyphs. Visual DNA are learned and stored in the OpenVDNA catalog using Learning Agents, which create structures representing higher-level objects. The stored Visual DNA are then accessed via the OpenVDNA search engine and the OpenVDNA software toolkit.
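To make the "puzzle piece" idea concrete, here is a minimal sketch of splitting an image into small tiles and comparing them by a handful of metrics. It is an illustration only and does not use the OpenVDNA toolkit or its API. The function names (`split_into_tiles`, `toy_descriptor`, `distance`), the 2x2 tile size, and the two stand-in metrics (mean intensity and variance) are all assumptions chosen for brevity. The real system is described as using over 16,000 metrics across four bases.

```python
from math import sqrt
from statistics import mean, pvariance


def split_into_tiles(image, tile):
    """Yield (row, col, pixels) for each full tile-by-tile block of a 2-D grayscale image."""
    rows, cols = len(image), len(image[0])
    for r in range(0, rows - tile + 1, tile):
        for c in range(0, cols - tile + 1, tile):
            pixels = [image[r + dr][c + dc] for dr in range(tile) for dc in range(tile)]
            yield r, c, pixels


def toy_descriptor(pixels):
    """Hypothetical two-metric descriptor: mean intensity and intensity variance."""
    return (mean(pixels), pvariance(pixels))


def distance(a, b):
    """Euclidean distance between two descriptors of equal length."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# A tiny 4x4 grayscale image used purely as example data.
image = [
    [0, 0, 10, 10],
    [0, 0, 10, 10],
    [0, 0, 0, 10],
    [0, 0, 10, 0],
]

# Map each tile's top-left corner to its descriptor.
descriptors = {(r, c): toy_descriptor(p) for r, c, p in split_into_tiles(image, 2)}

# Tiles with identical content have distance 0; dissimilar tiles are further apart.
same = distance(descriptors[(0, 0)], descriptors[(2, 0)])
different = distance(descriptors[(0, 0)], descriptors[(0, 2)])
```

In this toy setup, a smaller distance means two pieces look more alike under the chosen metrics. A catalog search would, in principle, rank stored pieces by such a distance.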

The mission of the Visual Genomes Foundation (VGF) is “OpenVDNA for everybody.” “Visual DNA are part of the future of AI, and a standard part of the infrastructure of modern life,” says Scott Krig, founder of the VGF. “A global backbone of supercomputers will learn, record and serve visual DNA to innumerable connected applications, providing basic visual AI services for industrial inspection, inventory, commerce and daily life. Volume learning and visual DNA will become ubiquitous, popular, simple and free.”

The OpenVDNA project provides an open ecosystem of resources to enable everyone to take advantage of Visual DNA, from everyday users of images to researchers and engineers opening new commercial markets. The Visual Genomes Foundation is funding the work, which is intended for the public as well as for commercial and academic groups.

The goal of the OpenVDNA catalog is to contain the world’s largest public collection of Visual DNA and Visual Learning Agents, including labeled strands and bundles of VDNA that represent higher-level objects. Analogous to human DNA, collections of VDNA can represent a visual gene or trait, as well as complete objects. Labels are provided for known objects, and unknown objects are cataloged as well, with machine-generated labels.

The OpenVDNA catalog is a large photographic memory that learns all the separate features in every image it has seen, and can recall those features on demand to search for similarities and differences. The OpenVDNA catalog enables LCI applications to grow and learn over time. VGF sponsors may license source code to learn proprietary OpenVDNA catalogs containing Visual DNA for specific applications.

The OpenVDNA search engine allows anyone to present an image to the OpenVDNA catalog for analysis and discover the Visual DNA in the image. The search engine returns lists of similar Visual DNA and images. Image geo-tagging and wiki annotations will make the OpenVDNA search engine valuable for a worldwide visual AI infrastructure.

The OpenVDNA devkit will allow VGF members to programmatically access the OpenVDNA catalog, to create commercial or research applications. VGF sponsors can extend the synthetic vision model via open source code licensing, and create private OpenVDNA catalogs for specific commercial or research applications.

The Visual Genomes Foundation (VGF) is similar in scope to the U.S. government's Human Genome Project. The VGF mission is to catalog visual intelligence as Visual DNA, Visual Genomes, and Visual Learning Agents, using a synthetic visual pathway model of the human brain. The aim is to advance artificial visual intelligence and to enable sponsors and the public to reap the benefits and freely create commercial spin-offs.

“The initial Visual DNA catalog will be massive in terms of today’s computing capabilities, pushing the boundaries of computing systems, and challenging the fastest supercomputer systems,” says Krig.

VGF uses the Synthetic Vision model of the human visual pathway, discussed in the De Gruyter book “Synthetic Vision using Volume Learning and Visual DNA”. Synthetic vision is a dynamic, living, growing model for continual learning that mimics the human visual system. By contrast, deep learning models learn a static model of monovariate gradient edge features one label at a time, during a compute-intensive, tedious, back-and-forth training process. Synthetic vision uses Volume Learning for continual learning of multiple visual DNA features simultaneously.

VGF is actively looking for sponsors and partners now to widen participation in the OpenVDNA project. VGF sponsors and partners will have a front-row seat from which to direct the VGF work and reap the rewards. VGF enables collaboration, commercial spin-offs, and public research.