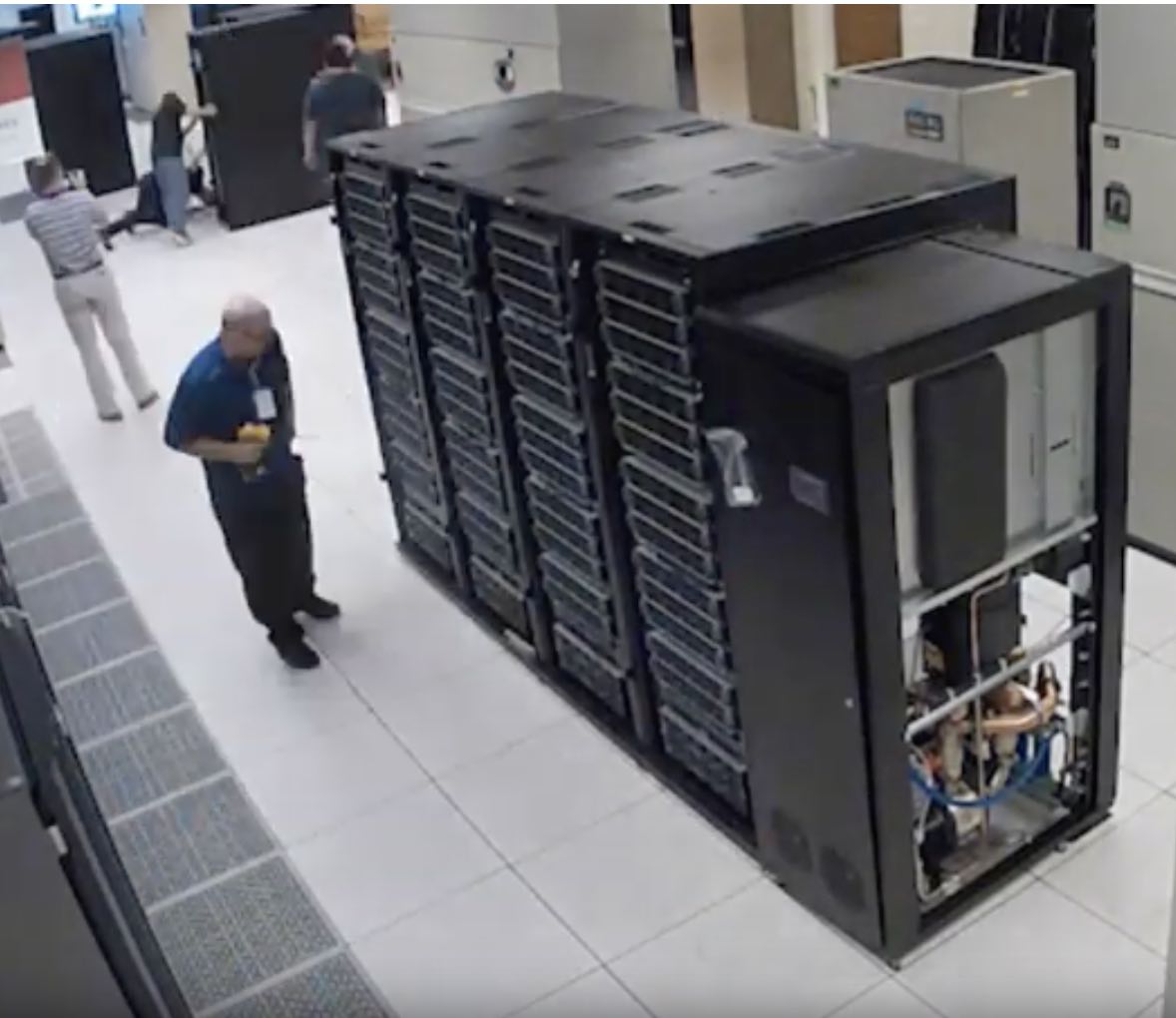

In this video, Dell EMC specialists and CoolIT technicians build the Ohio Supercomputer Center’s newest, most efficient supercomputer system, the Pitzer Cluster. Named for Russell M. Pitzer, a co-founder of the center and emeritus professor of chemistry at The Ohio State University, the Pitzer Cluster is expected to be at full production status and available to clients in November. The new system will power a wide range of research, from understanding the human genome to mapping the global spread of viruses.

“The Pitzer Cluster follows the long-running HPC trend of higher performance in a smaller footprint, offering clients nearly as much performance as the center’s most powerful cluster, but in less than half the space and with less power,” said David Hudak, executive director of OSC. “This valuable new addition to our data center allows OSC to continue addressing the growing computational, storage and analysis needs of our client communities in academia, science and industry.”

The theoretical peak performance of the new Dell EMC-built cluster is about 1.3 petaflops, meaning it is capable of performing 1.3 quadrillion calculations per second. In other words, to match the potential of what the Pitzer Cluster could do in just one second, a single person would have to perform one calculation every second for 41,195,394.5 years. The cluster also can achieve seven petaflops of theoretical peak performance for mixed-precision artificial intelligence workloads.
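The person-years comparison above is plain division, and a short sketch can reproduce it to within rounding. The year length used here (365.25 days) is an assumption; the article does not say which definition it used, so the last few digits of its figure may differ from this result.

```python
# Rough check of the "one calculation per second" comparison.
PEAK_CALCULATIONS_PER_SECOND = 1.3e15  # 1.3 petaflops, from the article

DAYS_PER_YEAR = 365.25  # assumption: the article does not state its year length
SECONDS_PER_YEAR = DAYS_PER_YEAR * 24 * 60 * 60


def years_at_one_per_second(calculations: float) -> float:
    """Years needed to perform `calculations` at one calculation per second."""
    return calculations / SECONDS_PER_YEAR


years = years_at_one_per_second(PEAK_CALCULATIONS_PER_SECOND)
print(f"about {years / 1e6:.1f} million years")
```

Under this assumption the result lands at roughly 41.2 million years, in line with the figure quoted above.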

The Pitzer Cluster will feature 260 nodes, including Dell EMC PowerEdge C6420 servers with CoolIT Systems’ Direct Contact Liquid Cooling (DCLC) coupled with PowerEdge R740 servers. In total, the cluster will include 528 Intel Xeon Gold 6148 processors and 64 NVIDIA Tesla V100 Tensor Core GPUs, all connected by an EDR InfiniBand network.

“We worked with Dell EMC to create a highly efficient, dense and flexible petaflop-class system,” said Douglas Johnson, chief systems architect at OSC. “We have designed the Pitzer Cluster with some unique components to complement our existing systems and boost our total center performance to more than 2.8 petaflops.”

The Pitzer Cluster will join existing systems on the OSC data center floor at the State of Ohio Computer Center: the Dell EMC/Intel Owens Cluster (March 2017) and the HP/Intel Ruby Cluster (April 2015). The new system will replace the HP/Intel Oakley Cluster (March 2012).

“Dell EMC is thrilled to continue our great collaboration with OSC with this new dense, efficient and liquid-cooled system,” said Thierry Pellegrino, vice president, Dell EMC High Performance Computing. “The Pitzer Cluster brings to bear a multitude of new technologies to help OSC and its researchers more quickly and efficiently tackle immense challenges, using artificial intelligence and deep learning to ultimately drive human progress.”

The Pitzer Cluster will use CoolIT Systems’ DCLC, a modular, low-pressure, rack-based cooling solution that enables a dramatic increase in rack density, component performance and power efficiency. To support the high-performance requirements of the system, CoolIT’s Passive Coldplate Loop for the PowerEdge C6420 servers delivers dedicated liquid cooling to the Intel processors in each of the 256 CPU nodes, managed by a stand-alone, central pumping CHx650 Coolant Distribution Unit.

To speed up data flow within the Pitzer Cluster, Dell EMC recommended components that improve memory bandwidth on each CPU node and increase network capacity between them. The Intel processors feature 6-channel integrated memory controllers, improving bandwidth by 50 percent compared with the processors in the Owens Cluster. Mellanox EDR InfiniBand, at 100 gigabits per second, provides high data throughput, low latency and a high message rate of 200 million messages per second. Additionally, the smart In-Network Computing acceleration engine provides higher application performance and improved overall efficiency.

The Pitzer Cluster will provide clients with access to four Large Memory nodes (Dell EMC PowerEdge R940), each with up to three terabytes of memory, which is especially helpful for data-intensive operations such as DNA sequencing. In addition, the cluster’s GPU nodes (Dell EMC PowerEdge R740) feature NVIDIA® Tesla® V100 Tensor Core GPUs, which are 50 percent more energy efficient than previous-generation GPUs. These GPUs offer large increases in speed and are especially useful for deep learning algorithms and artificial intelligence projects.

System specifications:

- 260 Dell nodes
- Dense Compute
  - 224 compute nodes (Dell PowerEdge C6420 two-socket servers with Intel Xeon 6148 (Skylake, 20 cores, 2.40GHz) processors, 192GB memory)
- GPU Compute
  - 32 GPU compute nodes (Dell PowerEdge R740 two-socket servers with Intel Xeon 6148 (Skylake, 20 cores, 2.40GHz) processors, 384GB memory)
  - 2 NVIDIA Volta V100 GPUs, 16GB memory
- Analytics
  - 4 huge memory nodes (Dell PowerEdge R940 four-socket servers with Intel Xeon 6148 (Skylake, 20 cores, 2.40GHz) processors, 3TB memory, 2 x 1TB drives mirrored, 1TB usable)
- 10,560 total cores
  - 40 cores/node and 192GB of memory/node
- Mellanox EDR (100Gbps) InfiniBand networking
- Theoretical system peak performance
  - 720 TFLOPS (CPU only)
- 4 login nodes:
  - Intel Xeon 6148 (Skylake) CPUs
  - 40 cores/node and 384GB of memory/node
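
The headline totals quoted in this article (260 nodes, 528 processors, 10,560 cores, 64 GPUs) can be cross-checked against the per-group specifications with a small tally. This is only an illustrative sketch: the `NodeGroup` class and its field names are hypothetical, it assumes the "2 NVIDIA Volta V100 GPUs" entry means two GPUs in each GPU node, and it assumes the four login nodes are not counted in the 260-node total.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeGroup:
    """One class of node from the specification list (hypothetical helper)."""
    name: str
    nodes: int
    sockets_per_node: int
    gpus_per_node: int = 0


CORES_PER_SOCKET = 20  # Intel Xeon Gold 6148, per the specification list

# Login nodes are left out: assumed not to be part of the 260-node total.
GROUPS = [
    NodeGroup("dense compute (PowerEdge C6420)", nodes=224, sockets_per_node=2),
    # Assumption: the "2 NVIDIA Volta V100 GPUs" entry is per GPU node.
    NodeGroup("GPU compute (PowerEdge R740)", nodes=32, sockets_per_node=2, gpus_per_node=2),
    NodeGroup("huge memory (PowerEdge R940)", nodes=4, sockets_per_node=4),
]

total_nodes = sum(g.nodes for g in GROUPS)
total_processors = sum(g.nodes * g.sockets_per_node for g in GROUPS)
total_cores = total_processors * CORES_PER_SOCKET
total_gpus = sum(g.nodes * g.gpus_per_node for g in GROUPS)

print(total_nodes, total_processors, total_cores, total_gpus)
# prints: 260 528 10560 64
```

Under these assumptions the per-group figures add up to the totals given in the text.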