This sponsored post from Intel makes the case for HPC and AI to share a common platform.

Finding the best solution to meet the requirements for intertwined HPC and AI workloads requires us to look at the overall platform benefits versus the benefits of individual technologies. With exascale on the horizon, the blending of HPC and AI algorithms, and ever-increasing data sets, having an overall robust platform is more important than ever.

A future where HPC and AI are converged will place significant demands on a platform. Addressing these holistically is far from simple – processor, memory subsystem (memory, persistence, capacity, bandwidth, cost), interconnect (bandwidth, cost, reliability), software tools, and applications (frameworks, eco-system, optimization/tuning) are all important.

Two recent cases illustrate why you would want HPC and AI to share a common platform. In one, research at CERN has shown that AI-based models may be able to significantly boost simulation performance. In another, deep neural networks (DNNs) for medical imaging, which place high demands on memory capacity and performance, have been shown to benefit from running on processors. In both cases, vector processing (Intel AVX-512, including Intel DL Boost) and the memory subsystem (capacity, bandwidth) are critical to the high performance of HPC and AI workloads.

The opportunity to address the world’s biggest problems has never been greater. High performance processors remain the critical element in building a platform to meet the diverse needs of the HPC and AI convergence.

Intel recently introduced the 2nd Generation Intel Xeon Scalable processor along with a compelling platform story that addresses HPC and AI demands. Benchmark results are showing the benefits of coupling outstanding processing performance with outstanding memory bandwidth per core, per node and at scale to serve a broad set of HPC, data analytics, and AI applications. For details, see the new technical brief 2nd Generation Intel® Xeon Scalable Processor Ushers in a New Era of Performance for HPC Platforms.

Six Key Platform Attributes to Consider

The 2nd Gen Intel Xeon Scalable processor features up to 56 cores, 12 memory channels (at 2933MT/s), and high-speed interconnect capabilities via 80 PCIe Gen3 lanes per node.

These differentiating characteristics make 2nd Gen Intel Xeon Scalable processors ideal for the challenges of AI/HPC convergence:

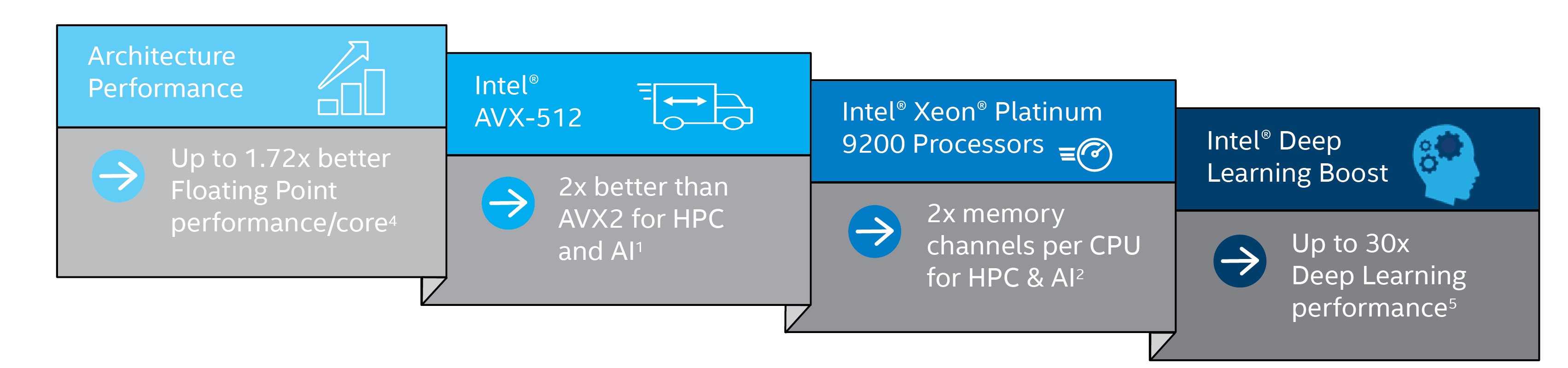

- Per-Core Computational Ability: Core for core, Intel offers up to 1.72x the FP performance of competing processors [4].

- Memory Bandwidth: High bandwidth to memory (up to 2933MT/s) – a boost for many workloads

- Scalability: High degrees of scaling (up to 56 cores) – reflected in LINPACK numbers up to 5.8X higher [3]

- HPC Acceleration: High performance computing for HPC workloads (Intel® Advanced Vector Extensions 512 (Intel® AVX-512) – an advantage over AVX2 of up to 2X FLOPS) [1]

- AI Acceleration: High performance computing for analytics and AI workloads (Intel AVX-512 and Intel® Deep Learning Boost (Intel® DL Boost))

- Platform Strength: Integration with the larger platform support portfolio, including Intel Optane Persistent Memory, Intel Optane SSDs, Intel Interconnect products, Intel FPGA solutions, Software Defined Visualization (SDVis), the Intel Parallel Studio XE 2019 software developer toolkit, and ecosystem support and optimizations

One particular 2nd Gen Intel Xeon Scalable processor, the Intel® Xeon® Platinum 9200 processor, brings all of these together in a package that is optimized for both density and performance. The processors feature up to 56 cores, 12 memory channels (at 2933 MT/s), and high-speed interconnect capabilities via 80 PCIe Gen3 lanes per node. LINPACK benchmarking shows up to a 5.8X advantage for the Intel Xeon Platinum 9282 processor over the AMD EPYC 7601 [3].
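To put the memory figures in perspective, the theoretical peak bandwidth of one processor can be estimated from its channel count and transfer rate. The sketch below is illustrative only: it assumes the standard 64-bit (8-byte) DDR4 data bus per channel, and the function name `peak_memory_bandwidth_gbs` is hypothetical. Sustained bandwidth measured by benchmarks such as STREAM is lower than this peak.

```python
def peak_memory_bandwidth_gbs(channels: int,
                              mega_transfers_per_s: float,
                              bytes_per_transfer: int = 8) -> float:
    """Theoretical peak memory bandwidth in GB/s (10^9 bytes/s).

    Assumption: each DDR4 channel moves 8 bytes (64 bits) per transfer.
    """
    bytes_per_s = channels * mega_transfers_per_s * 1e6 * bytes_per_transfer
    return bytes_per_s / 1e9


# 12 channels at 2933 MT/s, as quoted for the Platinum 9200 family.
platinum_9200 = peak_memory_bandwidth_gbs(channels=12, mega_transfers_per_s=2933)
print(f"{platinum_9200:.1f} GB/s theoretical peak per processor")
```

Doubling the channel count at a fixed transfer rate doubles this peak, which is why channel count matters for bandwidth-bound workloads.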

Scaling Platforms Up for Better Results

The first supercomputer featuring Intel Xeon Platinum 9200 processors will be HLRN-IV at the North-German Supercomputing Alliance. Prof. Dr. Ramin Yahyapour of Göttingen University echoed what all HPC centers see: “Science in general is getting more compute and data intensive. This means that having larger systems available translates into an ability for the scientists to do better work.”

Intel AVX-512 magnified by Intel DL Boost and high memory bandwidth

Intel’s investment in high-performance vector support, including the widest format, AVX-512, with dual fused multiply-add (FMA) units, pays off not only in outstanding FP throughput (e.g., LINPACK) but also in workloads accelerated by Intel DL Boost. Intel DL Boost harnesses the same AVX-512 wide vector format to achieve high throughput, so a wide variety of analytics, AI, and HPC algorithms can take advantage of the processors’ throughput. This makes Intel DL Boost especially useful for deep learning when computational capacity is an issue, a win for converged HPC/AI applications where large models benefit from direct access to the large memory capacity of the processor.
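The "up to 2X" FLOPS advantage of AVX-512 over AVX2 follows from simple per-core arithmetic: two FMA units, each operating on a full vector, with every FMA counting as two floating-point operations. The sketch below assumes double-precision (64-bit) elements and uses a hypothetical helper name; it gives a theoretical per-cycle figure only and ignores clock-frequency differences between the two instruction sets.

```python
def dp_flops_per_cycle(vector_bits: int, fma_units: int,
                       element_bits: int = 64) -> int:
    """Theoretical double-precision FLOPs per cycle per core."""
    lanes = vector_bits // element_bits  # elements processed per vector
    return fma_units * lanes * 2         # one FMA = one multiply + one add


avx512 = dp_flops_per_cycle(vector_bits=512, fma_units=2)
avx2 = dp_flops_per_cycle(vector_bits=256, fma_units=2)
print(avx512, avx2, avx512 / avx2)
```

Because the ratio depends only on vector width when the FMA count is equal, the same 2x holds for single-precision elements.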

Read the technical brief, 2nd Generation Intel® Xeon® Scalable Processor Ushers in a New Era of Performance for HPC Platforms, to see why systems built with the latest Intel processors offer performance for a broad range of HPC workloads and a compelling solution for integrating HPC, data analytics, and AI in a single system.

Performance Test Details

1 2x better performance with AVX-512 vs. AVX2: Theoretical FLOPS when comparing Cascade Lake with AVX-512 and two 512-bit FMAs to older-generation Broadwell with AVX2 and two 256-bit FMAs.

2 2x memory channels: Comparing 2nd Gen Intel Xeon Platinum 9200 processors with 12 memory channels per socket vs. Intel Xeon Scalable processors (Skylake) with 6 memory channels per socket.

3 5.8x LINPACK performance vs. AMD EPYC 7601; tested by Intel on 7/31/2018: Supermicro AS-2023US-TR4 with 2 AMD EPYC 7601 (2.2 GHz, 32 core) processors, SMT OFF, Turbo ON, BIOS ver 1.1a, 4/26/2018, microcode: 0x8001227, 16x32GB DDR4-2666, 1 SSD, Ubuntu 18.04.1 LTS (4.17.0-041700-generic Retpoline), High Performance Linpack v2.2, compiled with Intel® Parallel Studio XE 2018 for Linux, Intel MPI version 18.0.0.128, AMD BLIS ver 0.4.0, Benchmark Config: Nb=232, N=168960, P=4, Q=4, Score = 1095 GFs, vs. test by Intel 2/16/2019: 1-node, 2x Intel Xeon Platinum 9282 processor (56 core, 2.6 GHz) on Walker Pass with 768 GB (24x 32GB 2933) total memory, ucode 0x400000A on RHEL 7.6, 3.10.0-957.el7.x86_64, IC19u1, AVX512, HT on all (off Stream, Linpack), Turbo on all (off Stream, Linpack), Linpack=6411.

4 1.72x 1-copy SPECrate2017_fp_base* 2 socket Intel 8280 vs 2 socket AMD* EPYC* 7601; config details: tested by Intel on 2/6/2019 with security mitigations for variants 1,2,3,3a and L1TF, Xeon-SP 8280, Intel Xeon-based Reference Platform with 2 Intel Xeon 8280 processors (2.7GHz, 28 core), BIOS vSE5C620.86B.0D.01.0348.011820191451, 01/18/2019, microcode: 0x5000017, HT OFF, Turbo ON, 12x32GB DDR4-2933, 1 SSD, Red Hat EL 7.6 (3.10.0-957.1.3.el7.x86_64), 1-copy SPECrate2017_fp_rate base benchmark compiled with Intel Compiler 19.0.1.144, -xCORE-AVX512 -ipo -O, executed on 1 core using taskset and numactl on core 0. Estimated score = 9.6, vs. tested by Intel 2/8/2019: AMD EPYC 7601, Supermicro AS-2023US-TR4 with 2S AMD EPYC 7601 with 2 AMD EPYC 7601 (2.2GHz, 32 core) processors, BIOS v1.1c, 10/4/2018, SMT OFF, Turbo ON, 16x32GB DDR4-2666, 1 SSD, Red Hat EL 7.6 (3.10.0-957.5.1.el7.x86_64), 1-copy SPECrate2017_fp_rate base benchmark compiled with AOCC v1.0 -Ofast, -march=znver1, executed on 1 core using taskset and numactl on core 0. Estimated score = 5.56.

5 30x inference throughput improvement on Intel Xeon Platinum 9282 processor with Intel DL Boost: Tested by Intel as of 2/26/2019. Platform: Dragon rock 2 socket Intel Xeon Platinum 9282 (56 cores per socket), HT ON, turbo ON, Total Memory 768 GB (24 slots/ 32GB/ 2933 MHz), BIOS: SE5C620.86B.0D.01.0241.112020180249, CentOS 7 Kernel 3.10.0-957.5.1.el7.x86_64, Deep Learning Framework: Intel® Optimization for Caffe version: https://github.com/intel/caffe d554cbf1, ICC 2019.2.187, MKL DNN v0.17 (commit hash: 830a10059a018cd2634d94195140cf2d8790a75a), model: BS=64, No datalayer syntheticData: 3x224x224, 48 instance/2 socket, Datatype: INT8 vs. tested by Intel as of July 11th 2017: 2S Intel® Xeon® Platinum 8180 CPU @ 2.50GHz (28 cores), HT disabled, turbo disabled, scaling governor set to “performance” via intel_pstate driver, 384GB DDR4-2666 ECC RAM. CentOS Linux release 7.3.1611 (Core), Linux kernel 3.10.0-514.10.2.el7.x86_64. SSD: Intel® SSD DC S3700 Series (800GB, 2.5in SATA 6Gb/s, 25nm, MLC). Performance measured with: Environment variables: KMP_AFFINITY='granularity=fine, compact', OMP_NUM_THREADS=56, CPU Freq set with cpupower frequency-set -d 2.5G -u 3.8G -g performance. Caffe: revision f96b759f71b2281835f690af267158b82b150b5c. Inference measured with “caffe time --forward_only” command, training measured with “caffe time” command. For “ConvNet” topologies, synthetic dataset was used. For other topologies, data was stored on local storage and cached in memory before training. Topology specs from GitHub (ResNet-50). Intel C++ compiler v17.0.2 20170213, Intel MKL small libraries v2018.0.20170425. Caffe run with “numactl -l”.
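The headline ratios in notes 3 and 4 can be recomputed from the scores reported there. The short sketch below does so; it assumes the published figures are truncated rather than rounded, which is an inference from the numbers and not something the notes state, and the helper name `truncate` is hypothetical.

```python
import math


def truncate(value: float, decimals: int) -> float:
    """Drop digits beyond `decimals` places without rounding up."""
    factor = 10 ** decimals
    return math.floor(value * factor) / factor


# Note 3: LINPACK scores, Xeon Platinum 9282 vs. EPYC 7601.
linpack_ratio = 6411 / 1095

# Note 4: estimated 1-copy SPECrate2017 FP scores, Xeon 8280 vs. EPYC 7601.
spec_fp_ratio = 9.6 / 5.56

print(f"LINPACK: {linpack_ratio:.3f} -> {truncate(linpack_ratio, 1)}x")
print(f"SPEC FP: {spec_fp_ratio:.3f} -> {truncate(spec_fp_ratio, 2)}x")
```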