This sponsored post from Intel explores running AI and HPC workloads together on existing infrastructure, and how organizations can gain rapid insights and experience faster time-to-market with advanced architecture technologies.

Because HPC technologies today offer substantially more power and speed than their legacy predecessors, enterprises and research institutions benefit from combining AI and HPC workloads on a single system. This approach increases the cost-efficiency of existing infrastructure investments by accelerating breakthrough scientific discoveries, shortening the time needed to bring new products to market, and offering real-time business intelligence.

For institutions that have not yet made the leap to HPC and AI, there is good news. If they have invested in Intel HPC solutions, they already have at their fingertips the tools they need to introduce AI into their enterprise or research data center environments. However, optimizing a system for an organization’s unique needs is not always easy, since HPC and AI use cases vary widely. Systems integrators and developers therefore face challenges such as combining CPUs and accelerators for the highest performance, extending memory capacity for greater application design flexibility, and avoiding the need to rewrite applications to accommodate hardware changes.

Six platform investments from Intel will help reduce these obstacles and make HPC and AI deployment even more accessible and practical.

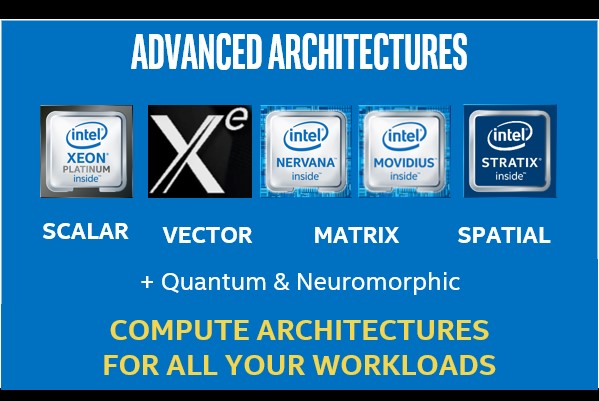

1. Advanced architectures for HPC and AI

While Intel Xeon Scalable processors serve a wide range of HPC use cases, some workloads benefit from hardware acceleration. For scenarios like three-dimensional modeling, AI, certain modeling workloads, and more, accelerators can employ vector, matrix, or spatially-based computational methods which result in a targeted improvement in system performance. Purpose-optimized technologies that supplement 2nd Generation Intel Xeon Scalable processors include:

- Intel Xe compute architecture, which, when available, will augment Intel’s solutions portfolio to address the unique characteristics of vector-intense HPC workloads

- Intel Nervana Neural Network processors designed to speed deep neural network-based workloads

- Intel Movidius technology which empowers intelligent connected devices at the edge, plus virtual and augmented reality scenarios

- Intel Stratix 10 SoC Field Programmable Gate Arrays (FPGAs) which deliver high performance, power efficiency, greater density, and improved systems integration.

2. Simplified programming with Intel One API

Enterprises and research institutions regularly choose accelerators to complement system CPUs for faster HPC performance. Developers, therefore, spend significant time ensuring their code is optimized to make the most of that single compute type. However, if a different kind of compute device drops into that mix, a developer would need to re-write the application to accommodate the new device and maximize its potential. When released, One API will eliminate this challenge.

With One API, developers have tools that will enable a unified programming model, simplifying development for workloads across multiple architectures. Intel One API, based on open standards, will give developers the freedom to create applications for one type of compute device, then run the same code on a different compute device or accelerator (for example CPU to GPU).

While supporting both direct programming and API programming, Intel One API also enables custom library functions which optimize native code performance across a variety of hardware. Developers also benefit from Intel’s enhanced debug and analysis tools that support SVMS architectures and DPC++.
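The single-source idea described above can be illustrated with a toy dispatch pattern. This is only a sketch of the concept in plain Python, not One API or DPC++ itself: the `submit` function, the `BACKENDS` table, and the device names `"cpu"` and `"accelerator"` are hypothetical names invented for this example, and both backends run on the host.

```python
from typing import Callable, Dict, List

# A "kernel" here is just a per-element function. All names in this
# sketch are hypothetical and do not come from One API.
Kernel = Callable[[float, float], float]


def run_serial(kernel: Kernel, a: List[float], b: List[float]) -> List[float]:
    """Stand-in for a CPU backend: apply the kernel one element at a time."""
    return [kernel(x, y) for x, y in zip(a, b)]


def run_chunked(kernel: Kernel, a: List[float], b: List[float],
                chunk: int = 4) -> List[float]:
    """Stand-in for an accelerator backend: process the data in fixed-size chunks."""
    out: List[float] = []
    for start in range(0, len(a), chunk):
        stop = start + chunk
        out.extend(kernel(x, y) for x, y in zip(a[start:stop], b[start:stop]))
    return out


# The application picks a device by name; the kernel code does not change.
BACKENDS: Dict[str, Callable[[Kernel, List[float], List[float]], List[float]]] = {
    "cpu": run_serial,
    "accelerator": run_chunked,
}


def submit(device: str, kernel: Kernel, a: List[float], b: List[float]) -> List[float]:
    """Run the same kernel on whichever backend is selected."""
    return BACKENDS[device](kernel, a, b)


def scale_and_add(x: float, y: float) -> float:
    """The application kernel, written once."""
    return 2.0 * x + y


a = [float(i) for i in range(10)]
b = [1.0] * 10
cpu_result = submit("cpu", scale_and_add, a, b)
acc_result = submit("accelerator", scale_and_add, a, b)
```

The point of the pattern is that `scale_and_add` is written once and only the device argument changes, which is the portability the unified programming model is meant to provide across real hardware.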

3. Transforming memory and storage with DAOS

When creating applications for HPC, developers must work around memory bottlenecks. Because server boards support a maximum of 128 gigabytes of memory per module, developers must fit their applications within those capacity limits and optimize memory-intensive HPC and AI applications to run efficiently on the memory available.

Innovations like Intel Optane technologies and the recently announced Distributed Asynchronous Object Storage (DAOS) address these barriers by offering developers ways to expand and speed up HPC memory pools. Intel Optane DC persistent memory expands memory capacity to accommodate memory-intensive workloads.

DAOS supplements these technologies by offering a software-defined object store built for large-scale, distributed Non-Volatile Memory (NVM). Unlike HPC workloads focused on mathematical calculations and storage, AI scenarios on HPC require non-linear movement of data blocks of various sizes. DAOS accelerates AI workloads by directing smaller, “hot” data blocks onto memory DIMMs and writing larger, “warm” data blocks to SSD storage. This intelligent data placement makes the most of memory and storage resources. With features like advanced data protection, transactional non-blocking I/O, and more, DAOS offers organizations a performant, flexible storage option for today’s data centers.
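The placement idea described above (small blocks to memory, large blocks to SSD) can be sketched as a simple size-based policy. This is a minimal illustration under stated assumptions, not the DAOS API or its actual placement logic: the `TieredStore` class, its method names, and the 4096-byte threshold are all hypothetical, and both tiers are modeled as in-process dictionaries.

```python
from dataclasses import dataclass, field
from typing import Dict

# Hypothetical cutoff chosen for illustration only; blocks at or below
# this size are treated as "small".
SMALL_BLOCK_BYTES = 4096


@dataclass
class TieredStore:
    """Toy two-tier store: a memory tier for small blocks, an SSD tier for large ones."""
    threshold: int = SMALL_BLOCK_BYTES
    memory_tier: Dict[str, bytes] = field(default_factory=dict)
    ssd_tier: Dict[str, bytes] = field(default_factory=dict)

    def put(self, key: str, block: bytes) -> str:
        """Store a block and return the name of the tier it was placed on."""
        # Drop any older copy so a key never lives on both tiers.
        self.memory_tier.pop(key, None)
        self.ssd_tier.pop(key, None)
        if len(block) <= self.threshold:
            self.memory_tier[key] = block
            return "memory"
        self.ssd_tier[key] = block
        return "ssd"

    def get(self, key: str) -> bytes:
        """Look in the memory tier first, then fall back to the SSD tier."""
        if key in self.memory_tier:
            return self.memory_tier[key]
        return self.ssd_tier[key]


store = TieredStore()
small_tier = store.put("weights-index", b"x" * 512)
large_tier = store.put("training-shard", b"y" * 1_000_000)
```

In this sketch the caller never chooses a tier; placement follows from block size alone, which mirrors the article's description of data being directed to memory or SSD automatically.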

4. A more intelligent interconnect, CXL

In the constant pursuit of more exceptional HPC system performance for AI applications, customers need ways to optimize the joint capabilities of CPUs and accelerators.

Intel’s new intelligent interconnect, Intel Compute Express Link (Intel CXL), addresses this need by enabling faster communication between the CPU and accelerator. Intel CXL offers a standards-based solution that increases data transfer rates for AI applications and reduces software stack complexity.

5. Support for manufacturing excellence

Increasingly, manufacturers tap the power of AI-based workloads to bring products to market faster, maximize their supply chains, avoid unexpected downtime of manufacturing equipment, and much more. Given the compute-intensive nature of AI, customers benefit from faster processor performance, which leads to faster business outcomes.

Intel is committed to manufacturing excellence by delivering advanced processors of ever-increasing sophistication. For example, Intel FPGAs and 10th Generation Intel Core processors are shipping this year on 10nm technology, with a clear path to 7nm and beyond. Foveros 3D packaging technology supplements these processor enhancements with the ability to combine processors, DRAM layers, and accelerators into a single, vertical “stack” that enables higher density per CPU socket and server board.

6. Built-in security

With new security threats occurring daily, data center IT experts need a platform they can count on for end-to-end security. Intel’s HPC-ready platform implements security technologies at all levels, including processors, boards, software, and more.

Find out more

There is no longer a doubt about the critical role AI can fill, or the value it adds. Instead, the debate is when and how to implement it to benefit your organization. The Intel HPC platform executes and accelerates AI-based workloads. Organizations that already adopted Intel technologies are closer to experiencing AI’s benefits than they may realize.

For more information about the benefits Intel’s HPC and AI platform can offer your organization, read the “Bringing AI into Your Existing HPC Environment” white paper.