Today NVIDIA posted the fastest results on new benchmarks measuring the performance of AI inference workloads in data centers and at the edge — building on the company’s equally strong position in recent benchmarks measuring AI training.

As the industry’s first independent suite of AI benchmarks for inference, MLPerf Inference 0.5 demonstrates the performance of NVIDIA Turing GPUs for data centers and NVIDIA Xavier system-on-a-chip for edge computing. MLPerf’s five inference benchmarks — applied across a range of form factors and four inferencing scenarios — cover such established AI applications as image classification, object detection and translation.

NVIDIA topped all five benchmarks for both data center-focused scenarios (server and offline), with Turing GPUs providing the highest performance per processor among commercially available entries. Xavier provided the highest performance among commercially available edge and mobile SoCs under both edge-focused scenarios (single stream and multi stream).

“AI is at a tipping point as it moves swiftly from research to large-scale deployment for real applications,” said Ian Buck, general manager and vice president of accelerated computing at NVIDIA. “AI inference is a tremendous computational challenge. Combining the industry’s most advanced programmable accelerator, the CUDA-X suite of AI algorithms and our deep expertise in AI computing, NVIDIA can help data centers deploy their large and growing body of complex AI models.”

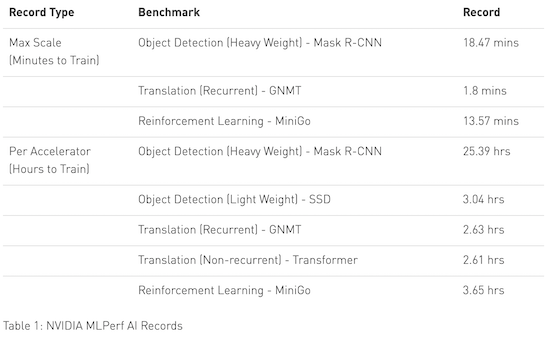

Simply put, our AI platform now takes less than two minutes to train models that once took a whole workday.

Highlighting the programmability and performance of its computing platform across diverse AI workloads, NVIDIA was the only AI platform company to submit results across all five MLPerf benchmarks. In July, NVIDIA topped multiple MLPerf 0.6 benchmarks for AI training, setting eight records in training performance.

NVIDIA GPUs accelerate large-scale inference workloads in the world’s largest cloud infrastructures, including Alibaba Cloud, AWS, Google Cloud Platform, Microsoft Azure and Tencent. AI is now moving to the edge at the point of action and data creation. World-leading businesses and organizations, including Walmart and Procter & Gamble, are using NVIDIA’s EGX edge computing platform and AI inference capabilities to run sophisticated AI workloads at the edge.

All of NVIDIA’s MLPerf results were achieved using NVIDIA TensorRT 6 high-performance deep learning inference software that optimizes and deploys AI applications easily in production from the data center to the edge. New TensorRT optimizations are also available as open source in the GitHub repository.

In related news, NVIDIA expanded its line of inference platforms today with Jetson Xavier NX. Billed as “the world’s smallest, most powerful AI supercomputer for robotic and embedded computing devices at the edge,” Jetson Xavier NX is built around a low-power version of the Xavier SoC used in the MLPerf Inference 0.5 benchmarks.