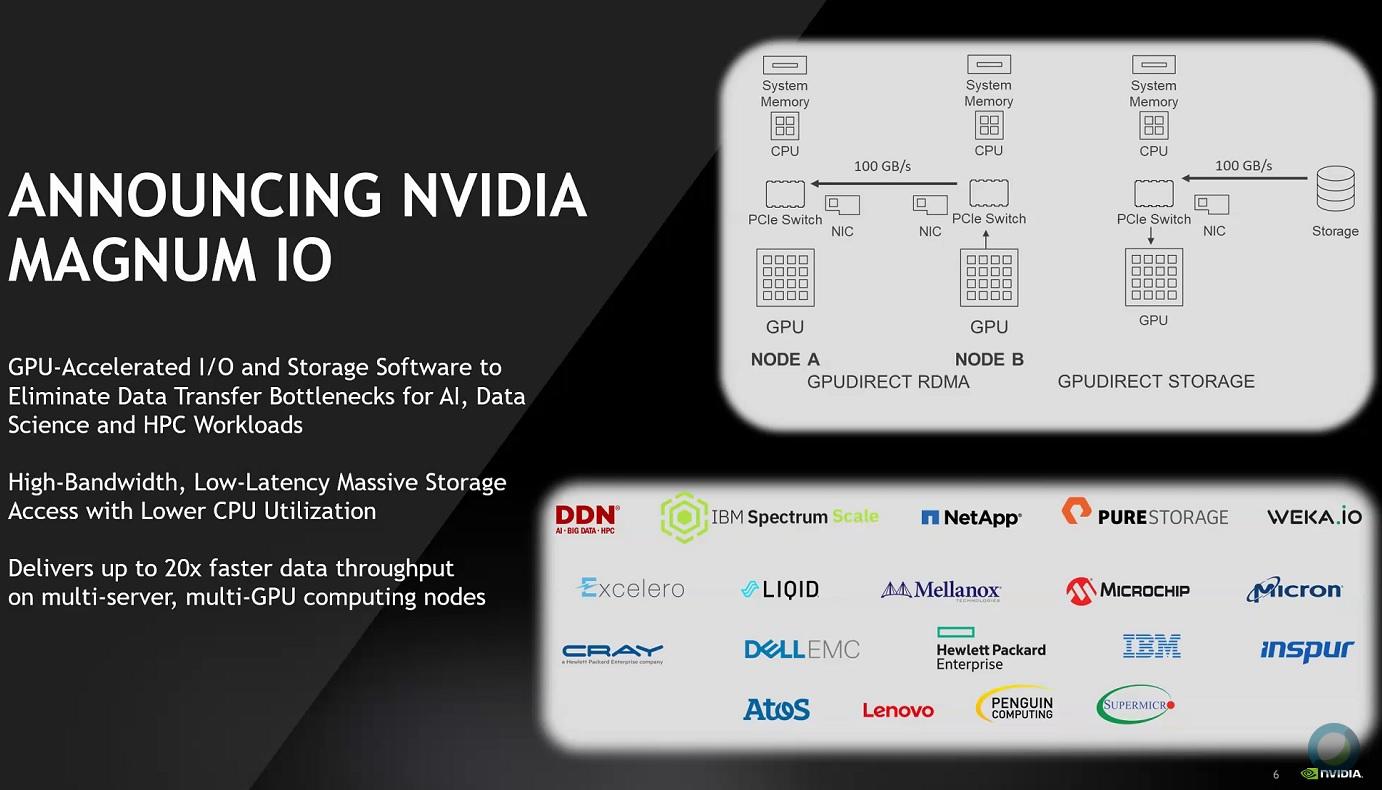

Today NVIDIA introduced NVIDIA Magnum IO, a suite of software to help data scientists and AI and high-performance computing researchers process massive amounts of data in minutes, rather than hours.

“Processing large amounts of collected or simulated data is at the heart of data-driven sciences like AI,” said Jensen Huang, founder and CEO of NVIDIA. “As the scale and velocity of data grow exponentially, processing it has become one of data centers’ great challenges and costs.”

Optimized to eliminate storage and input/output bottlenecks, Magnum IO delivers up to 20x faster data processing for multi-server, multi-GPU computing nodes when working with massive datasets to carry out complex financial analysis, climate modeling and other HPC workloads.

NVIDIA has developed Magnum IO in close collaboration with industry leaders in networking and storage, including DDN, Excelero, IBM, Mellanox and WekaIO.

“Extreme compute needs extreme I/O. Magnum IO delivers this by bringing NVIDIA GPU acceleration, which has revolutionized computing, to I/O and storage. Now, AI researchers and data scientists can stop waiting on data and focus on doing their life’s work,” he said.

At the heart of Magnum IO is GPUDirect, which provides a path for data to bypass CPUs and travel on “open highways” offered by GPUs, storage and networking devices. Compatible with a wide range of communications interconnects and APIs — including NVIDIA NVLink™ and NCCL, as well as OpenMPI and UCX — GPUDirect is composed of peer-to-peer and RDMA elements.

Its newest element is GPUDirect Storage, which enables researchers to bypass CPUs when accessing storage and quickly access data files for simulation, analysis or visualization.

NVIDIA Magnum IO software is available now, with the exception of GPUDirect Storage, which is currently available to select early-access customers. Broader release of GPUDirect Storage is planned for the first half of 2020.

“Modern HPC and AI research relies upon an incredible amount of data, often more than a petabyte in scale, which requires a new level of technology leadership to best handle the challenge,” said Sven Oehme, chief research officer, DDN. “DDN, by taking advantage of NVIDIA’s Magnum IO suite of software along with our parallel EXA5-enabled storage architecture, is paving the way to a new direct data path which makes petabyte-scale data stores directly accessible to the GPU at high bandwidth, an approach that was not previously possible.”