The pinnacle of our current architectural landscape is within sight, with exascale systems starting to come to fruition. But as has been true of supercomputing since it first ‘became a thing,’ the target keeps moving: constant growth in data forces the issue as system architects work to optimize hardware and workflows and speed time-to-results.

Earlier this month, Ayar Labs hosted a webinar on the topic of “Disaggregated System Architectures for Next Generation HPC and AI Workloads,” discussing the need for new architectures and the approaches being taken to bring new levels of power, efficiency, and composability to building supercomputers and, eventually, all computing systems of the future. Here are the top five trends discussed during the webinar that are driving the need for new HPC architectures:

- The AI Explosion – There is a considerable surge in compute demand due to rapidly growing AI and scientific models. This trend is only highlighted further by recent announcements during the SC20 virtual conference taking place at this time as hardware vendors, from chip makers to storage and beyond, unveil new architectures, technologies and strategies to address the growth of AI computing.

- The Need for Flexibility – At the moment, AI is dominated by GPU computing. However, scientists need to be able to run their workflows on a variety of processing units. With CPUs, GPUs, DPUs, XPUs, FPGAs, AI accelerators, and other emerging processing options, there is growing diversity in processing. Not all workloads are the same, and there is a need for the kind of flexibility that allows different computing for different workloads – and across workloads.

- Reducing Costs – Supercomputing is expensive. For all kinds of reasons, there is a need to improve the compute-per-watt and capacity-per-dollar equations that suck up budgets and make it cost-prohibitive to scale up to new heights of computing (and to reap the benefits that come with those new heights). The need to reduce costs is driving the growth of heterogeneous architectures and opening up new avenues for HPC architects to explore.

- The Memory Conundrum – During the webinar, the moderator, Timothy Prickett Morgan, explained that we have either low-latency, high-bandwidth memory with small capacity (HBM) or higher-latency access to high-capacity memory through a CPU. Too often researchers are left to pick a processor to suit the memory that they have, rather than what the workload needs. There is a need for new ways to hook memory into the system that provide low enough latency and high enough bandwidth to do the job. “This is where I think silicon photonics is going to really be a game changer for new architectures,” said Prickett Morgan.

- Throughput – There has been an ever-increasing need for I/O throughput since the dawn of supercomputing. However, the problem has accelerated in recent years, with I/O improvements trickling in at only 5-10% per year. While we’re now seeing PCI Express double every two years, and Ethernet and InfiniBand making steady progress, it’s clear that electrical signaling is set to hit the wall of physics, and photonics appears to be the best path forward.

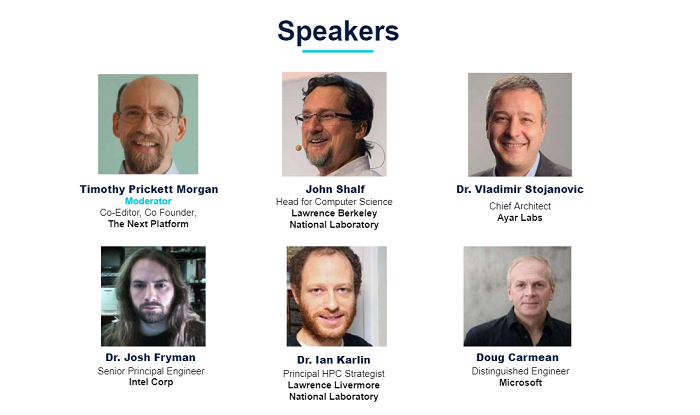

These five trends were identified as the top drivers of the need for new architectures. Ayar Labs brought together a blockbuster panel of industry leaders to discuss these challenges and more. Moderated by Timothy Prickett Morgan, the panel included:

- Ian Karlin, Principal HPC Strategist at Lawrence Livermore National Laboratory

- John Shalf, Head for Computer Science at Lawrence Berkeley National Laboratory

- Vladimir Stojanovic, Chief Architect at Ayar Labs and a Professor of EECS at UC Berkeley

- Josh Fryman, Senior Principal Engineer at Intel Corp

- Doug Carmean, Architect at Microsoft

In a wide-ranging discussion, it was clear that I/O remains a key impediment to overall system performance in exascale systems and beyond, and that industry leaders are exploring ways to solve these problems and bring us into a future where next-generation disaggregated architectures are possible.

If you missed it, now is your chance to catch up on one of the most important conversations happening during SC20. Click here to watch the webinar and learn about how HPC and AI leaders are thinking about the next wave of architectures as they unfold in real time.