Our friends at the UberCloud have published the 2018 edition of their Compendium of Cloud HPC Case Studies. “The goal of the UberCloud Experiment is to perform engineering simulation experiments in the HPC cloud with real engineering applications in order to understand the roadblocks to success and how to overcome them. The Compendium is a way of sharing these results with the broader HPC community.”

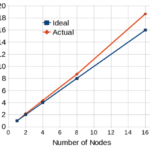

Maximizing Performance of HiFUN* CFD Solver on Intel® Xeon® Scalable Processor With Intel MPI Library

The HiFUN CFD solver shows that the latest-generation Intel Xeon Scalable processor enhances single-node performance thanks to its large cache, higher core density per CPU, higher memory speed, and larger memory bandwidth. The higher core density improves intra-node parallel performance, which lets users build more compact clusters for a given number of processor cores. Better cache utilization, combined with a high-performance interconnect between nodes and the highly optimized Intel® MPI Library, contributes to the solver's super-linear performance.
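For readers unfamiliar with the term, "super-linear" means the measured speedup exceeds the increase in core count, so parallel efficiency rises above 1. The sketch below shows that arithmetic only. The timings are hypothetical placeholders, not HiFUN results, and the function names are invented for illustration.

```python
def speedup(t_base, t_scaled):
    """Ratio of the baseline wall-clock time to the scaled-run time."""
    return t_base / t_scaled


def parallel_efficiency(t_base, cores_base, t_scaled, cores_scaled):
    """Measured speedup divided by the ideal (linear) speedup."""
    ideal = cores_scaled / cores_base
    return speedup(t_base, t_scaled) / ideal


def is_super_linear(t_base, cores_base, t_scaled, cores_scaled):
    """True when the run scales better than linearly (efficiency > 1)."""
    return parallel_efficiency(t_base, cores_base, t_scaled, cores_scaled) > 1.0


if __name__ == "__main__":
    # Hypothetical timings in seconds, keyed by core count (assumed values).
    timings = {64: 1000.0, 128: 480.0, 256: 260.0}
    base_cores = 64
    for cores, seconds in timings.items():
        eff = parallel_efficiency(timings[base_cores], base_cores, seconds, cores)
        flag = is_super_linear(timings[base_cores], base_cores, seconds, cores)
        print(f"{cores} cores: efficiency {eff:.2f}, super-linear: {flag}")
```

Super-linear scaling of this kind is usually attributed to cache effects: as the problem is split across more cores, each core's share of the data fits better in cache, which matches the cache-utilization explanation given above.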

NVIDIA Simplifies Building Containers for HPC Applications

In this video, CJ Newburn from NVIDIA describes how users can benefit from running their workloads in the NVIDIA GPU Cloud. “A container essentially creates a self contained environment. Your application lives in that container along with everything the application depends on, so the whole bundle is self contained. NVIDIA is now offering a script as part of an open source project called HPC Container Maker, or HPCCM that makes it easy for developers to select the ingredients they want to go into a container, to provide those ingredients in an optimized way using best-known recipes.”

Why UIUC Built HPC Application Containers for NVIDIA GPU Cloud

In this video from the GPU Technology Conference, John Stone from the University of Illinois describes how container technology in the NVIDIA GPU Cloud helps the University distribute accelerated applications for science and engineering. “Containers are a way of packaging up an application and all of its dependencies in such a way that you can install them collectively on a cloud instance or a workstation or a compute node. And it doesn’t require the typical amount of system administration skills and involvement to put one of these containers on a machine.”

Video: VMware powers HPC Virtualization at NVIDIA GPU Technology Conference

In this video from the 2018 GPU Technology Conference, Ziv Kalmanovich from VMware and Fred Devoir from NVIDIA describe how they are working together to bring the benefits of virtualization to GPU workloads. “For cloud environments based on vSphere, you can deploy a machine learning workload yourself using GPUs via the VMware DirectPath I/O or vGPU technology.”

Singularity: The Inner Workings of Securely Running User Containers on HPC Systems

Michael Bauer from Sylabs gave this talk at FOSDEM’17. “This presentation will provide an in-depth look at how Singularity is able to securely run user containers on HPC systems. After a brief introduction to Singularity and its relationship to other container solutions, the details of Singularity’s runtime will be explored. The way that Singularity leverages Linux features such as namespaces, bind mounts, and SUID binaries will be discussed in further detail as well.”

Alces Flight: On Demand HPC now Available in the Azure Marketplace

Microsoft Azure customers worldwide now gain access to Alces Flight to take advantage of the scalability, reliability and agility of Azure. With Alces Flight, it is possible for researchers to spin up any size of High-Performance Computing cluster in minutes, providing users with a fully-featured HPC environment that includes thousands of open source applications.

Video: The Marriage of Cloud, HPC and Containers

Adam Huffman from the Francis Crick Institute gave this talk at FOSDEM’17. “We will present experiences of supporting HPC/HTC workloads on private cloud resources, with ideas for how to do this better and description of trends for non-traditional HPC resource provision. I will discuss my work as part of the Operations Team for the eMedLab private cloud, which is a large-scale (6000-core, 5PB) biomedical research cloud using HPC hardware, aiming to support HPC workloads.”

Sharing High-Performance Interconnects Across Multiple Virtual Machines

Mohan Potheri from VMware gave this talk at the Stanford HPC Conference. “Virtualized devices offer maximum flexibility. This session introduces SR-IOV, explains how it is enabled in VMware vSphere, and provides details of specific use cases that are important for machine learning and high-performance computing. It includes performance comparisons that demonstrate the benefits of SR-IOV and information on how to configure and tune these configurations.”