By Jorge Salazar, Science Writer, Texas Advanced Computing Center

Viruses lurk in the grey area between the living and the nonliving, according to scientists. Like living things, they replicate but they don’t do it on their own. The HIV-1 virus, like all viruses, needs to hijack a host cell through infection in order to make copies […]

PSC’s Big Data and AI Supercomputer Replaced by New Bridges-2 Platform

February 15, 2021 — From the vastness of neutron-star collisions to the raw power of incoming tsunamis to the tiny, life-and-death details of how COVID-19 progresses, the Bridges platform at the Pittsburgh Supercomputing Center (PSC) has seen it all. Now Bridges has taken its final bow, ceding the title of PSC’s flagship high-performance computing (HPC) […]

MIT Researchers Develop Neural Networks for Computational Chemistry Using SDSC, PSC Supercomputers

Even though computational chemistry represents a challenging arena for machine learning, a team of researchers from the Massachusetts Institute of Technology (MIT) may have made it easier. Using Comet at the San Diego Supercomputer Center at UC San Diego and Bridges at the Pittsburgh Supercomputing Center, they succeeded in developing an artificial intelligence (AI) approach to detect electron correlation – the interaction between a system’s electrons – which is vital but expensive to calculate in quantum chemistry.

Video: Evolving Cyberinfrastructure, Democratizing Data, and Scaling AI to Catalyze Research Breakthroughs

Nick Nystrom from the Pittsburgh Supercomputing Center gave this talk at the Stanford HPC Conference. “The Artificial Intelligence and Big Data group at Pittsburgh Supercomputing Center converges Artificial Intelligence and high performance computing capabilities, empowering research to grow beyond prevailing constraints. The Bridges supercomputer is a uniquely capable resource for empowering research by bringing together HPC, AI and Big Data.”

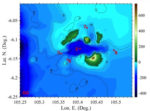

XSEDE Supercomputers Simulate Tsunamis from Volcanic Events

Researchers at the University of Rhode Island are using XSEDE supercomputers to show that high-performance computer modeling can accurately simulate tsunamis from volcanic events. Such models could lead to early-warning systems that could save lives and help minimize catastrophic property damage. “As our understanding of the complex physics related to tsunamis grows, access to XSEDE supercomputers such as Comet allows us to improve our models to reflect that, whereas if we did not have access, the amount of time it would take to run such simulations would be prohibitive.”

Supercomputing Ocean Wave Energy

Researchers are using XSEDE supercomputers to help develop ocean waves into a sustainable energy source. “We primarily used our simulation techniques to investigate inertial sea wave energy converters, which are renewable energy devices developed by our collaborators at the Polytechnic University of Turin that convert wave energy from large bodies of water into electrical energy,” explained study co-author Amneet Pal Bhalla from SDSU.

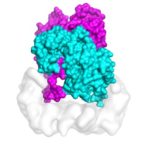

XSEDE Supercomputers Advance Skin Cancer Research

In this TACC podcast, UC Berkeley scientists describe how they are using powerful supercomputers to uncover the mechanism that activates cell mutations found in about 50 percent of melanomas. “The study’s computational challenges involved molecular dynamics simulations that modeled the protein at the atomic level, determining the forces of every atom on every other atom for a system of about 200,000 atoms at time steps of two femtoseconds.”

Pitt Researchers Using HPC to Turn CO2 into Useful Products

Researchers at the University of Pittsburgh are using XSEDE supercomputing resources to develop new materials that can capture carbon dioxide and turn it into commercially useful substances. With global climate change resulting from increasing levels of carbon dioxide in the Earth’s atmosphere, the work could lead to a lasting impact on our environment. “The basic idea here is that we are looking to improve the overall energetics of CO2 capture and conversion to some useful material, as opposed to putting it in the ground and just storing it someplace,” said Karl Johnson from the University of Pittsburgh. “But capture and conversion are typically different processes.”

Pioneering and Democratizing Scalable HPC+AI at the Pittsburgh Supercomputing Center

Nick Nystrom from the Pittsburgh Supercomputing Center gave this talk at the Stanford HPC Conference. “To address the demand for scalable AI, PSC recently introduced Bridges-AI, which adds transformative new AI capability. In this presentation, we share our vision in designing HPC+AI systems at PSC and highlight some of the exciting research breakthroughs they are enabling.”

AI Breakthroughs and Initiatives at the Pittsburgh Supercomputing Center

Nick Nystrom and Paola Buitrago from PSC gave this talk at the HPC User Forum in Milwaukee. “The Bridges supercomputer at PSC offers the possibility for experts in fields that never before used supercomputers to tackle problems in Big Data and answer questions based on information that no human would live long enough to study by reading it directly.”