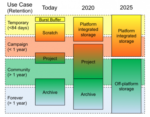

NERSC recently unveiled its new Community File System (CFS), a long-term data storage tier developed in collaboration with IBM that is optimized for capacity and manageability. “In the next few years, the explosive growth in data coming from exascale simulations and next-generation experimental detectors will enable new data-driven science across virtually every domain. At the same time, new nonvolatile storage technologies are entering the market in volume and upending long-held principles used to design the storage hierarchy.”

Deep Learning at scale for the construction of galaxy catalogs

A team of scientists is now applying the power of artificial intelligence (AI) and high-performance supercomputers to accelerate efforts to analyze the increasingly massive datasets produced by ongoing and future cosmological surveys. “Deep learning research has rapidly become a booming enterprise across multiple disciplines. Our findings show that the convergence of deep learning and HPC can address big-data challenges of large-scale electromagnetic surveys.”

Video: Managing large-scale cosmology simulations with Parsl and Singularity

Rick Wagner from Globus gave this talk at the Singularity User Group. “We package the imSim software inside a Singularity container so that it can be developed independently, packaged to include all dependencies, trivially scaled across thousands of computing nodes, and seamlessly moved between computing systems. To date, the simulation workflow has consumed more than 30M core hours using 4K nodes (256K cores) on Argonne’s Theta supercomputer and 2K nodes (128K cores) on NERSC’s Cori supercomputer.”

ExaLearn Project to bring Machine Learning to Exascale

As supercomputers become ever more capable in their march toward exascale levels of performance, scientists can run increasingly detailed and accurate simulations to study problems ranging from cleaner combustion to the nature of the universe. Enter ExaLearn, a new machine learning project supported by DOE’s Exascale Computing Project (ECP). It aims to develop new tools to help scientists overcome this challenge by applying machine learning to very large experimental datasets and simulations.