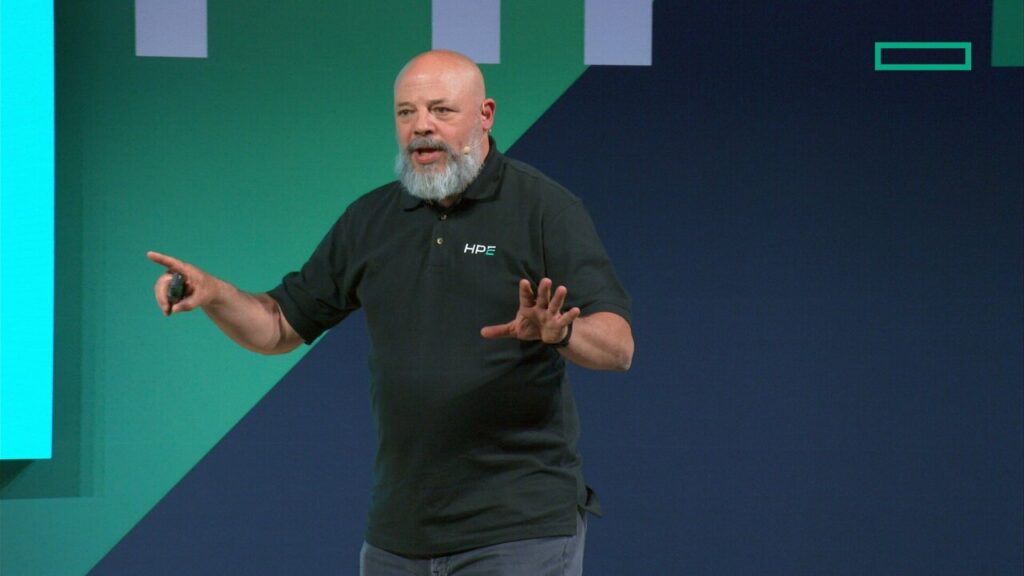

Kirk Bresniker

Last year at SC24, when we first heard about HPE’s quantum strategy – which could be summed up as “when the quantum community delivers something useful, we’ll be there with a supercomputer-qubits plug-in” – we thought it very clever.

For one, it supports the notion that quantum won’t so much supplant HPC as work alongside it, handling workloads for which it is best suited, complementing and augmenting HPC.

For another, the strategy seemed like a low-risk, no-lose proposition. Let the quantum people beat their brains out trying to get quantum to work; all HPE has to do is hook it up to an HPE-Cray EX supercomputer.

But at HPE’s recent Discover 2025 conference in Las Vegas we learned more about HPE’s quantum project, and it turns out our initial impression did not, in every respect, grasp the scope and complexity of HPE’s undertaking.

In fact, some of the smartest people at HPE are beating their brains out preparing HPE supercomputers to work in tandem with quantum, in whatever modality or modalities emerge from the quantum community.

The goal is to develop a heterogeneous HPC-quantum infrastructure that works in an integrated, harmonious fashion, assigning workloads to appropriate architectures and modalities, exchanging massive data sets across HPC and quantum environments, and delivering a powerhouse computing capability that takes on problems beyond anything imaginable today. This is no easy feat.

HPE’s quantum strategy is the result of a reappraisal, a change in stance from non-participant (from about 2011 to 2021) to active participant since then. It’s due in part to the company’s acquisitions of SGI in 2016 and Cray in 2019, which thrust HPE into the upper reaches of the supercomputing market and put HPE among customers that not only wanted things like exascale-class HPC but also were interested in the next big things in HPC – including quantum.

Between the frequency of quantum questions raised at customer meetings and the growing quantum R&D investments in venture-backed quantum start-ups and established technology companies, HPE came to embrace the notion that quantum is probably an inevitability, and beginning about four years ago, the company was back in the quantum arena.

“You think about HPE at that time (before 2016), we weren’t talking to the top of the supercomputing industry,” Kirk Bresniker, HPE Labs chief architect, an HPE Fellow and vice president, told us last month at Discover.

“We weren’t in the position to compete for those premier HPC solutions. Then after Cray and SGI, we formed a community (with those companies and their customers), and really, it’s a testament to how that community jelled and then delivered those exascale systems on time and were accepted,” he said. “That also gave us a new perspective. It was time to come back to this (quantum) conversation, they’ve been asking questions for 10 years, and they deserved a better answer than we were giving them.”

Bresniker appears to have the right disposition for this project. He exudes not just smarts but also energetic optimism and good humor, valuable traits for anyone working in the head-splitting counter-reality of quantum, where things can be two different things at once and nothing happens in normal ways (see Scientific American, “Quantum Physics Isn’t as Weird as You Think. It’s Weirder”).

The strategy he and his colleagues are pursuing parallels an effort begun in 2024 by the U.S. Defense Advanced Research Projects Agency (DARPA), the Quantum Benchmarking Initiative (QBI). HPE is one of nearly 20 quantum computing companies* chosen to enter the program’s stage in which they will characterize their unique concepts for creating a useful, fault-tolerant quantum computer within a decade.

QBI, which kicked off in July 2024, aims to determine whether it’s possible to build a quantum computer faster than has generally been predicted, and intends to validate whether any quantum approach can achieve utility-scale operation (i.e., its computational value exceeds its cost) by the year 2033.

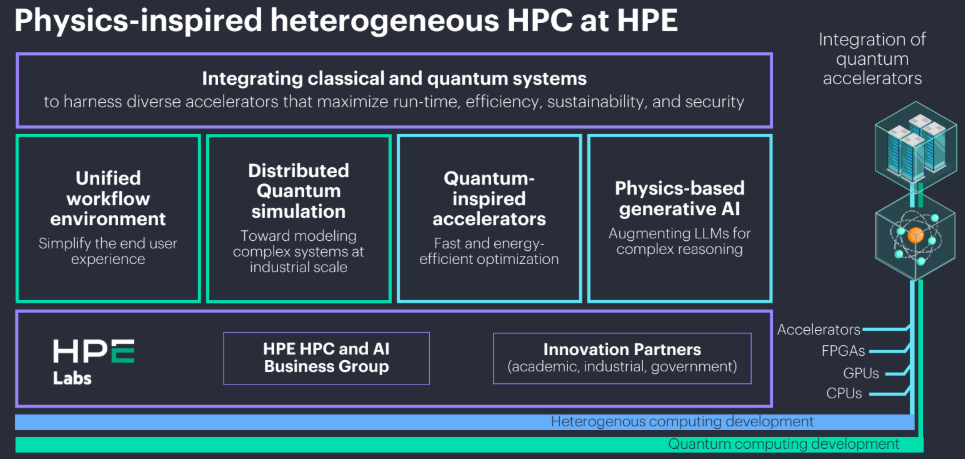

HPE’s quantum strategy has four interrelated parts, all of them reflecting not so much an agnostic approach to constructing a classical-quantum infrastructure as one that could be called all-embracing or, in Bresniker’s term, “inclusive.”

1. Unified Workflow: This is enabling HPE-Cray supercomputers to work alongside quantum systems regardless of processor type, including CPU, GPU, FPGA or QPU (quantum processing unit). The supercomputer and the quantum system should be integrated seamlessly into a working whole.

“I don’t want to have people having to research individual disciplines and have a million tools, a million steps,” Bresniker said. “I want to understand across these very complex modalities, across something that not only has been integrated and has to be calibrated and is in the real time ecosystem. How do I get the most out of the QPU without everyone having to get a graduate degree in quantum mechanics? That’s the challenge.”

This part of the strategy is necessary given the current, immature state of quantum skills.

“We anticipate a future generation of quantum natives,” Bresniker said. “I don’t know if they’re in pre-school or first grade right now, but eventually, in a decade or two, we’ll have people who are probabilistic quantum computing natives. Until then, we need to make progress. We need to make today more productive. And that’s what the unified workflow program is all about.”

2. Distributed Quantum Simulation: HPE wants to use state-of-the-art supercomputing to design not only better qubits but also quantum-classical hybrid systems.

“It can take a problem and break it apart into a digital twin and tell me, ‘OK, this is necessarily a quantum piece, and you should put this half of the problem in a trapped ion. Then you put this half of the problem in a superconducting qubit, and for the rest, we have great classical approximations.’

“We want to understand how we create that hybrid system,” Bresniker said. “The good thing about having that environment is it can tell us when we achieve a combination hybrid quantum-classical system, and what will it take. We want to use the right qubit for each part of the necessarily quantum piece, but where possible I want to use classical approximations whenever they are good enough. I don’t want to waste a qubit on doing arithmetic.”

A benefit of this work is to help reveal whether the classical/quantum combination “is worth it, or should we use the simulation capability to tell the quantum hardware across six figures of merit on this particular type of qubit, that if you tweak this one a little bit … here’s what will open up as far as applications.”
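The routing idea Bresniker describes (send only the "necessarily quantum" pieces to a qubit modality and keep everything else classical when the approximation is good enough) can be sketched in a few lines. This is an illustrative toy, not HPE's software: the `Subproblem` fields, the `route` and `plan` functions, the error estimates and the tolerance are all hypothetical names and values invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Subproblem:
    name: str
    # Hypothetical: estimated error of the best available classical approximation.
    classical_error: float
    # Hypothetical: the qubit modality a simulation judged best for this piece.
    preferred_modality: str


def route(sub: Subproblem, tolerance: float) -> str:
    """Keep a piece classical if its approximation is good enough; otherwise use a QPU."""
    if sub.classical_error <= tolerance:
        return "classical"
    return sub.preferred_modality


def plan(subproblems, tolerance=1e-3):
    """Map each subproblem name to the backend it would be assigned to."""
    return {s.name: route(s, tolerance) for s in subproblems}


pieces = [
    Subproblem("arithmetic", 0.0, "superconducting"),
    Subproblem("strongly_correlated_core", 0.2, "trapped_ion"),
    Subproblem("weakly_coupled_shell", 0.05, "superconducting"),
]
assignment = plan(pieces, tolerance=0.01)
```

In this toy run the arithmetic stays on the classical side, echoing the "I don’t want to waste a qubit on doing arithmetic" remark, while the two pieces whose assumed classical error exceeds the tolerance go to their preferred modality.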

3. Quantum-Inspired Accelerators: The third piece of the strategy is closely related to the first – HPE isn’t itself developing quantum processors, but it is developing classical physics-based accelerators that work with quantum processors.

“I said we weren’t going to blow the dust off” HPE’s QPU-related work from circa 2010 (which focused on nitrogen-vacancy in the diamond lattice), Bresniker said, “we’re letting other people (at quantum companies) who do that work on that.”

The team at HPE Labs is working on data communications technologies between classical systems and quantum, things like large-scale integrated photonics and picojoule-per-bit communication of classical data.

“Now they’re understanding how to re-purpose those same elements, interferometers, the diffraction grating couplers, the microring resonators,” he said. “This technology is right in the middle of where quantum also is, it’s a parallel solve – a parallel solve is quantum physics-based accelerators, classical physics-based accelerators, and then unified workflow.”

The point being: neither HPE nor its customers “…have to make one bet. I get to look over that entire field. Because, frankly, I don’t care if it is a superconducting qubit, a trapped ion qubit, a classical physics-based accelerator or whatever – whatever it takes to get an enterprise outcome out of these systems and begin to prepare the enterprise to capitalize on this technology is what I want.”

4. Physics-Based Generative AI: The fourth part of the strategy relates to solving large-scale logistics problems such as the proverbial traveling salesman problem, a class known as “NP-hard optimization problems,” and HPE’s effort to build in several complementary ways of attacking them, be it with quantum, physics-based large language models or quantum-inspired classical HPC.

When, presumably, the time arrives for HPE supercomputers to work alongside quantum, the best means of solving the problem will be assigned the job in real time.
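To see why the traveling salesman problem is the standard example of a hard logistics problem, consider a minimal brute-force sketch. It is purely illustrative and unrelated to HPE's accelerators: the function name `shortest_tour` and the four-city distance matrix are made up for the example. The exhaustive search checks every ordering of the cities, so the number of candidate tours grows factorially with the number of cities.

```python
from itertools import permutations


def shortest_tour(dist):
    """Exhaustively search round trips that start and end at city 0.

    `dist[a][b]` is the distance from city a to city b.
    Returns (length, tour) for the first shortest tour found.
    """
    n = len(dist)
    best_len, best_tour = float("inf"), None
    for perm in permutations(range(1, n)):  # (n-1)! candidate orderings
        tour = (0, *perm, 0)
        length = sum(dist[a][b] for a, b in zip(tour, tour[1:]))
        if length < best_len:
            best_len, best_tour = length, tour
    return best_len, best_tour


# Hypothetical symmetric distances between four cities.
dist = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]
length, tour = shortest_tour(dist)  # length 18 via the tour (0, 1, 3, 2, 0)
```

Four cities mean only six orderings to check, but 20 cities already mean 19! (about 1.2 × 10^17), which is why alternative ways of attacking such problems are attractive.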

“This is utilizing physics-based accelerators to solve some of the same problems that quantum aspires to,” Bresniker said. “… here’s a gigantic binary equation with all those ‘ands, ors, nots,’ so which of the millions of inputs would have to be set in order for it to be true? …

“You can also begin to use this to evaluate propositional logic, and this is where we’re talking about adding a new faculty. LLMs are fantastic at interpolation, at inductive reasoning… What they aren’t capable of doing is deductive reasoning, they’re not capable of extrapolation. They’re not capable of evaluating a general purpose first order logic equation and give you that satisfiability.

That’s the fourth pillar, “using quantum-inspired classical, and eventually perhaps also classical” (on these problems). “I actually don’t care which one is the right one to solve the job as long as we can connect them to interesting problems for enterprise. And in addition to the logistic problems, we also want to attach them to deep reasoning problems. So adding that ability for deep reasoning, that ability to evaluate deep propositional logic theorems and evaluate which ones are satisfied, which ones are unsatisfiable and which ones are valid, and that’s really that fourth pillar, using the quantum inspired classical for satisfiability problems.”
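The three categories Bresniker mentions (satisfiable, unsatisfiable, valid) can be made concrete with a small truth-table sketch. This is a plain classical brute-force check written for illustration, not a description of HPE's accelerators; the function names `classify` and `satisfying_assignment` are hypothetical. A formula is valid if it is true under every assignment, satisfiable if it is true under at least one, and unsatisfiable if it is true under none.

```python
from itertools import product


def classify(formula, variables):
    """Classify a propositional formula by evaluating every truth assignment.

    `formula` is a function that takes a dict mapping variable names to bools.
    """
    results = [
        formula(dict(zip(variables, values)))
        for values in product([False, True], repeat=len(variables))
    ]
    if all(results):
        return "valid"
    if any(results):
        return "satisfiable"
    return "unsatisfiable"


def satisfying_assignment(formula, variables):
    """Return the first assignment that makes the formula true, or None."""
    for values in product([False, True], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        if formula(assignment):
            return assignment
    return None


def excluded_middle(v):
    return v["a"] or not v["a"]


def contradiction(v):
    return v["a"] and not v["a"]


def small_cnf(v):
    # (a or b) and (not a or c) and (not b or not c)
    return (v["a"] or v["b"]) and (not v["a"] or v["c"]) and (not v["b"] or not v["c"])


kinds = [
    classify(excluded_middle, ["a"]),
    classify(contradiction, ["a"]),
    classify(small_cnf, ["a", "b", "c"]),
]
witness = satisfying_assignment(small_cnf, ["a", "b", "c"])
```

The truth table has 2^n rows for n variables, so this exhaustive approach stops being practical long before the "millions of inputs" mentioned in the quote.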

* QBI participating companies: Alice & Bob – Cambridge, MA, and Paris (superconducting cat qubits); Atlantic Quantum – Cambridge, MA (fluxonium qubits with co-located cryogenic controls); Atom Computing – Boulder (scalable arrays of neutral atoms); Diraq – Sydney with operations in Palo Alto and Boston (silicon CMOS spin qubits); HPE; IBM (quantum computing with modular superconducting processors); IonQ – College Park, MD (trapped-ion quantum computing); Nord Quantique – Sherbrooke, Quebec (superconducting qubits with bosonic error correction); Oxford Ionics – Oxford and Boulder (trapped ions); Photonic Inc. – Vancouver, BC (optically linked silicon spin qubits); Quantinuum – Broomfield, CO (trapped-ion quantum charge-coupled device architecture); Quantum Motion – London (MOS-based silicon spin qubits); QuEra Computing – Boston (neutral atom qubits); Rigetti Computing – Berkeley (superconducting tunable transmon qubits); Silicon Quantum Computing Pty. Ltd. – Sydney (precision atom qubits in silicon); Xanadu – Toronto (photonic quantum computing)