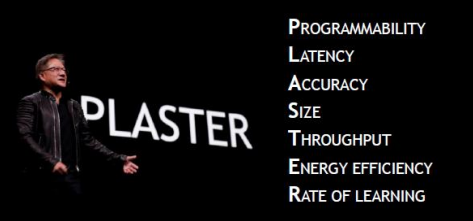

At the recent NVIDIA GPU Technology Conference (GTC) 2018, Jensen Huang, NVIDIA President and CEO, during his presentation focused on a new framework designed to contextualize the key challenges using AI systems and delivering deep learning-based solutions.

Download the full report.

A new white paper, from Tirias Research, and sponsored by NVIDIA, outlines these requirements — coined PLASTER.

The PLASTER framework covers the following seven major challenges for delivering AI-based services.:

- Programmability

- Latency

- Accuracy

- Size of Model

- Throughput

- Energy Efficiency

- Rate of Learning

The new report not only covers these seven challenges, but it also explores them in the context of NVIDIA’s deep learning solutions.

According to the report, anyone interested in developing and deploying AI services should factor in all of these elements to “arrive at a complete view of deep learning performance.”

The framework outlined is especially useful for those developing and delivering the inference engines behind AI-based services.

The paper goes through each measurements for each framework component, as well as offers examples of customers using NVIDIA solutions to tackle problems with machine learning.

For example, NVIDIA explores programmability first, as it is difficult even for experts to understand the model choices involved in machine learning, let alone choose the appropriate model to solve their AI business problems. NVIDIA addresses these training and inference challenges with two tools; for coding, the company has CUDA; and for inference, developers can use TensorRT, NVIDIA’s programmable inference accelerator.

Another framework detail integral to AI covered in the report is latency. Both humans and machines need a response to make decisions and take action, and latency is that time between requesting something and receiving a response. An instance of latency in action is Bing’s new visual search platform. The search giant was looking to deliver a visual search platform that could provide quick results. Using NVIDIA Tesla GPUs, Microsoft reduced their latency to just 40 milliseconds from 2.5 seconds initially.

Download the full report to walk through all seven of the PLASTER framework components.

NVIDIA points out that organizations that consider PLASTER as an organizing principle can achieve these three outcomes:

- Better manage performance of the DL systems

- Make more efficient use of developer time

- Create a DevOps environment in DL to support the products and services customers want

Ultimately, PLASTER is designed to allow organizations to better understand and manage the critical aspects of deep learning performance.

Download the new TIRIAS Research white paper, sponsored by NVIDIA, to explore using a framework to address the opportunities and challenges associate with AI systems.