In this special guest feature, Dan Olds from OrionX.net continues his series of stories on the SC19 Student Cluster Competition. Held as part of the Students@SC program, the competition is designed to introduce the next generation of students to the high-performance computing community.

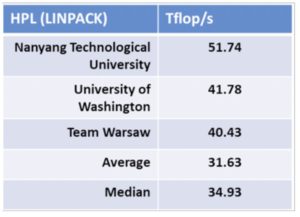

Nanyang Tech took home the Highest LINPACK Award at the recently concluded SC19 Student Cluster Competition. The team, also known as the Pride of Singapore (at least to me), easily topped the rest of the field with their score of 51.74 Tflop/s.

They had a system that was tailor-made to conquer LINPACK. Two nodes with 96 Xeon cores and a whopping 16 NVIDIA V100 GPUs were more than enough to vanquish the rest of the field.

Newcomer University of Washington pulled into second place with another two-node configuration, sporting a Xeon processor with beefier cores. However, the UDub students had only 8 NVIDIA V100 GPUs, which means they must have optimized the hell out of LINPACK to grab second place.

Washington barely edged out Team Warsaw, who finished third. This is the highest placement for a Warsaw team since they started competing back in 2017. They drove their five-node, eight-GPU cluster to a LINPACK score of 40.43 Tflop/s. Great job!

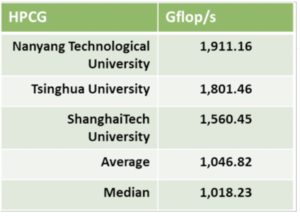

Nanyang also took home the Highest HPCG Award, which is not a real award, but something I’ve been tracking ever since it became part of the cluster competitions several years ago.

HPCG is usually right in Tsinghua's wheelhouse, but they were denied this year, having to settle for second place behind NTU.

ShanghaiTech grabbed a distant third on HPCG, but their score is still way above both the average and median scores of all 16 teams.

These scores are great, but not record-breaking. The highest Student Cluster Competition LINPACK ever is 56.51 Tflop/s, posted last year at SC18. The highest HPCG ever recorded was 2,080 Gflop/s, set by Tsinghua University at ASC18.

A LINPACK or HPCG record doesn't last all that long, usually a competition or two. As hardware advances, records fall, which shouldn't surprise anyone. The only significant hardware change in the last year or so is the advent of 32GB V100 GPUs, and they don't seem to be helping on these benchmarks. The average core count per CPU has risen over the last few years, but clock frequencies have dropped commensurately, so there's no real win there, at least when it comes to LINPACK and HPCG.

Next up, we'll analyze the official results and then do our own deep-dive analysis with our patented algorithms to see who got the most out of their hardware. If you're looking for even more cluster competition coverage, check out www.studentclustercomp.com and revel in the rich history of these competitions.

| Award | Winner |
|---|---|
| Overall | Tsinghua University |
| LINPACK | Nanyang Technological University |
| HPCG | Nanyang Technological University |
| IO500 | Tsinghua University |

Dan Olds is an Industry Analyst at OrionX.net. An authority on technology trends and customer sentiment, Dan Olds is a frequently quoted expert in industry and business publications such as The Wall Street Journal, Bloomberg News, Computerworld, eWeek, CIO, and PCWorld. In addition to server, storage, and network technologies, Dan closely follows the Big Data, Cloud, and HPC markets. He writes the HPC Blog on The Register, co-hosts the popular Radio Free HPC podcast, and is the go-to person for the coverage and analysis of the supercomputing industry’s Student Cluster Challenge.