In this video from the PBS User Group 2013, Jeffrey Denton from Clemson University presents: Using PBS and myHadoop to Schedule Hadoop MapReduce Jobs Accessing a Persistent OrangeFS Installation.

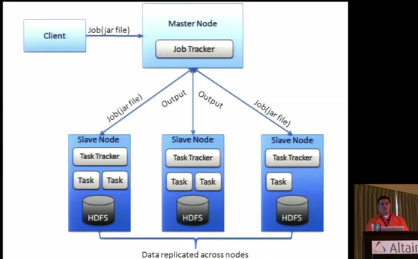

“Using PBS Professional and a customized version of myHadoop has allowed researchers at Clemson University to submit their own Hadoop MapReduce jobs on the ‘Palmetto Cluster.’ myHadoop makes the task of configuring Hadoop to run in a scheduled environment much simpler by automating adjustments to the Hadoop configuration files based upon the PBS job’s resources. Now, researchers at Clemson can run their own dedicated Hadoop daemons in a PBS-scheduled environment as needed. Research conducted at Carnegie Mellon University showed that PVFS (OrangeFS’s precursor), with some additional middleware and Hadoop extensions, could perform as well as Hadoop’s file system (HDFS) in the Hadoop framework. Since Clemson University maintains a large OrangeFS installation for HPC I/O, the OrangeFS Client JNI Shim was developed to enable Hadoop MapReduce to leverage OrangeFS. This means that data written to OrangeFS doesn’t have to be staged into HDFS before a MapReduce job can be run. In fact, when OrangeFS is used as the underlying file system, Hadoop’s HDFS daemons aren’t needed at all. We hope to see an improvement in Hadoop MapReduce performance by scaling to a large number of PBS-scheduled Hadoop MapReduce clients accessing a persistent OrangeFS installation connected via high-speed InfiniBand.”
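To make the idea of deriving Hadoop settings from a PBS job's resources concrete, here is a minimal Python sketch. It is not myHadoop's actual code. It assumes only that PBS exposes the job's allocated hosts through the file named in the `PBS_NODEFILE` environment variable, one hostname per line and possibly repeated once per core. The function names `unique_hosts` and `plan_cluster`, and the choice of the first host as master, are hypothetical and used for illustration only.

```python
import os
from collections import OrderedDict


def unique_hosts(nodefile_text):
    """Return hostnames in first-seen order, dropping per-core repeats."""
    hosts = OrderedDict()
    for line in nodefile_text.splitlines():
        name = line.strip()
        if name:
            hosts[name] = None
    return list(hosts)


def plan_cluster(nodefile_text):
    """Pick a master and a worker list from the job's hosts.

    Assumption for this sketch: the first host acts as master and the
    remaining hosts run the workers. A single-node job uses that one
    host for both roles.
    """
    hosts = unique_hosts(nodefile_text)
    if not hosts:
        raise ValueError("node file lists no hosts")
    return {"master": hosts[0], "workers": hosts[1:] or hosts[:1]}


if __name__ == "__main__":
    # Inside a PBS job, PBS_NODEFILE points at the allocated-host list.
    path = os.environ.get("PBS_NODEFILE")
    if path:
        with open(path) as f:
            print(plan_cluster(f.read()))
```

A real tool would go on to write these hostnames into Hadoop's configuration directory for the job. That step is left out here because the file names and layout depend on the Hadoop version in use.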

You can check out more talks from the show at our PBS Works User Group Video Gallery.