This article is part of the Five Essential Strategies for Successful HPC Clusters series which was written to help managers, administrators, and users deploy and manage HPC cluster growth.

Almost all HPC clusters grow in size and capability after installation. Indeed, most clusters see several stages of growth beyond their original design. Expanding a cluster almost always means new or next-generation processors and/or coprocessors plus accelerators. The original cluster configuration often will not work with new hardware due to the need for updated drivers and OS kernels. Thus, there needs to be some way to manage growth.

In addition to capability, the management scale of clusters often changes when they expand. Many times, home-brew clusters can be victims of their own success. After setting up a successful cluster, users often want more node capacity. The assumption that “more is better” makes sense from an HPC perspective, however, most standard Linux tools are not designed to scale their operation over large numbers of systems. In addition, many custom scripts that previously worked on a handful of nodes may break when the cluster grows. Unfortunately, this lesson is often learned after the new hardware is installed and placed in service. Essentially, the system becomes unmanageable at scale – and may require complete redesign of custom administration tools.

Smaller clusters often overload a single server with multiple services such as file, resource scheduling, plus monitoring/management. While this approach may work for systems with fewer than 100 nodes, these services can overload the cluster network or the single server as the cluster grows. For instance, imagine that a cluster-wide reboot is needed to update the OS kernel with new drivers. If the tools do not scale, there may be nodes that do not reboot, or end up in an unknown state. The next step is to start using an out-of-band IPMI tool to reboot the stuck nodes by hand. Other changes may suffer a similar fate, and fixing the problem may require significant downtime while new solutions are tested.

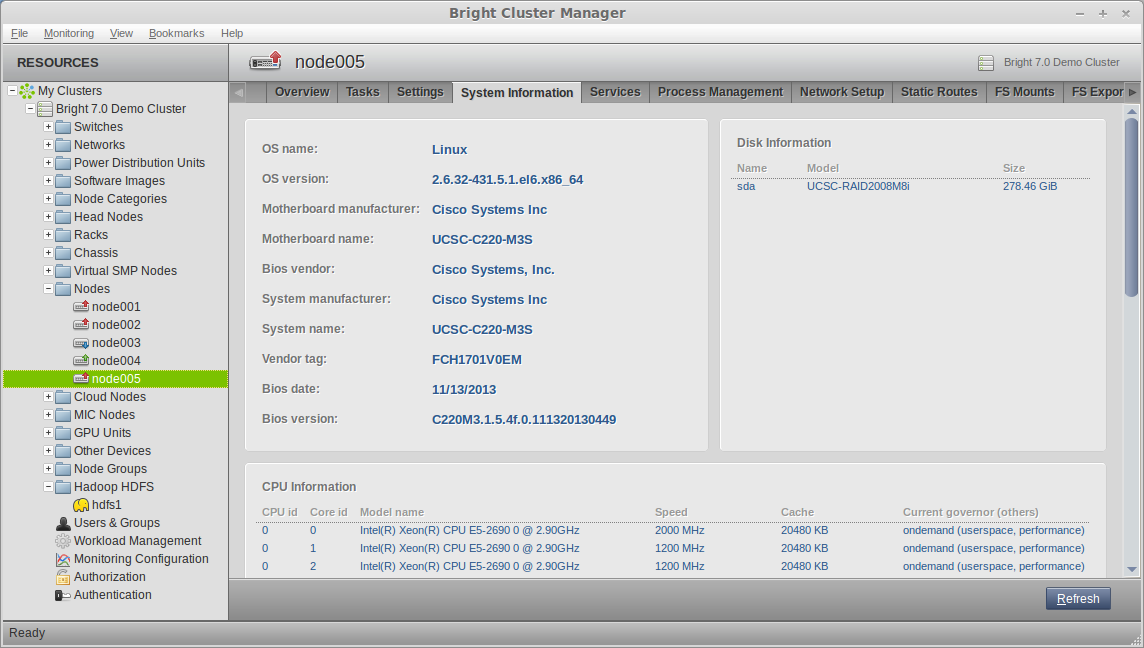

An alternative to managing a production cluster with unproven tools is to employ software that is known to scale. Some of the open source tools work well in this regard, but should be configured and tested prior to going into production mode. A fully integrated system like Bright Cluster Manager offers a tested and scalable approach for any size cluster. Designed to scale to thousands of nodes, Bright Cluster Manager is not dependent on third-party (open source) software that may not support this level of scalability. As shown in Figure 2, enhanced scalability is accomplished by a single management daemon with low CPU and memory overhead on each node, multiple load-balancing provisioning nodes, synchronized cluster management daemons, built-in redundancy, and support for diskless nodes.

Bright Cluster Manager manages heterogeneous-architecture systems and clusters through a single GUI. Here a flexible, multiplexed Cisco UCS rack server provides physical resources for a Hadoop HDFS instance as well as for HPC via Bright.

Recommendations for Managing Cluster Growth

- Assume the cluster will grow in the future and make sure you can accommodate both scalable and non-homogeneous growth. (i.e. expansion with different hardware than the original cluster (see below).

- Though difficult in practice, attempt to test any homegrown tools and scripts for scalability. Many Linux tools were not designed to scale to hundreds of nodes. Expanding your cluster may push these tools past their usable limits.

- In addition, test all open source tools prior to deployment. Remember proper configuration may affect scalability as the cluster grows.

- Make sure you have a scalable way to push package and OS upgrades to nodes without having to rely on system-wide reboots. Make sure the “push” method is based on a scalable tool.

- A proven and unified approach, using a solution such as Bright Cluster Manager, offers a scalable, low overhead method for managing clusters. Changes in the type or scale of hardware will not impact your ability to administer the cluster. Removing the dependence on homegrown tools or hand configured open source tools allows system administrators to focus on higher-level tasks.

Next week’s article will look at How to Manage Heterogeneous Hardware/Software Solutions. If you prefer you can download the entire insideHPC Guide to Successful HPC Clusters, courtesy of Bright Computing, by visiting the insideHPC White Paper Library.