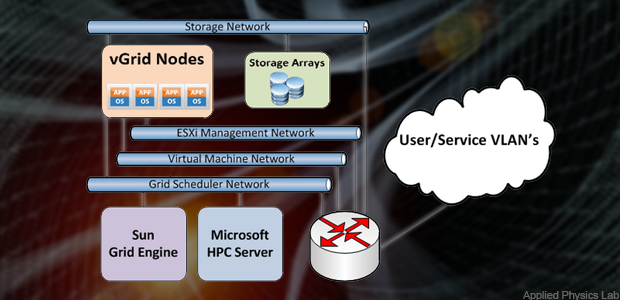

Over at GCN, John Breeden II writes that virtualization is finally starting to see adoption in HPC. He cites an interesting use case at the Johns Hopkins University Applied Physics Laboratory, where Edmond DeMattia migrated independent grids into a fully virtualized environment that reduced idle computing cycles while providing a big jump in throughput when pushing millions of calculations through the system.

“My team fundamentally redesigned how high-performance scientific computing is performed in the Air and Missile Defense Department by utilizing virtualization and distributed storage as the framework for pooling resources across multiple departments,” DeMattia said. “By leveraging the ESXi abstraction layer, multiple stove-piped high-performance computing grids are aggregated into a single 3,728 core vGrid, hosting multiple operating systems and grid scheduling engines. This has allowed our engineers to achieve decreased simulation runtimes by an order of magnitude for many studies.”

Read the Full Story.