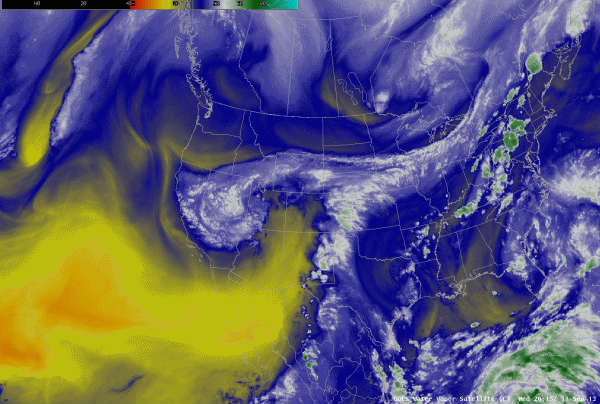

This is an animated loop of water vapor systems over western North America on September 12, 2013, as shown by the GOES-15 and GOES-13 satellites.

Researchers are using supercomputers at LBNL to determine how global climate change has affected the severity of storms and resultant flooding.

In September 2013, severe storms struck Colorado with prolonged, heavy rainfall, resulting in at least nine deaths, 1,800 evacuations and 900 homes destroyed or damaged. The eight-day storm dumped more than 17 inches of rain, causing the Platte River to reach flood levels higher than ever recorded.

The severity of the storms, which also occurred unusually late in the year, attracted the interest of scientists at Lawrence Berkeley National Laboratory who specialize in studying extreme weather. In many instances, their research has shown that such events are made more intense in a warmer climate.

In a paper that appeared online on July 18, 2017, in Weather and Climate Extremes, the team reports that climate change attributed to human activity made the storm much more severe than it otherwise would have been.

“The storm was so strong, so intense, that the standard climate models that do not resolve fine-scale details were unable to characterize the severe precipitation or large-scale meteorological pattern associated with the storm,” said Michael Wehner, a climate scientist in the lab’s Computational Research Division and co-author of the paper.

The researchers then turned to a different framework using the Weather Research and Forecasting regional model to study the event in more detail. The group used the publicly available model, which can be used to forecast future weather, to “hindcast” the conditions that led to the Sept. 9-16, 2013 flooding around Boulder, Colorado. The model allowed them to study the problem in greater detail, breaking the area into 12-kilometer squares.

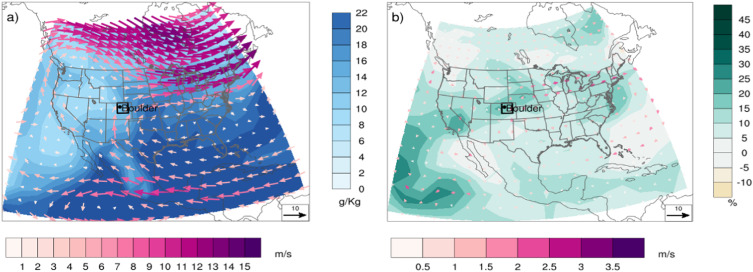

Modelled synoptic situation and change for the rainy week of September 2013. (a) Total 7-day (00Z 9 September – 00Z 16 September 2013) precipitable water (g/kg), and average 7-day 700 hPa winds (m/s) over the WRF model domain. Values are ensemble-averages over an ensemble of 101 WRF model simulations under anthropogenic conditions. (b) Corresponding change (%) relative to the ensemble-average of 101 WRF model simulations under non-anthropogenic conditions. Black box demarcates the northeast Colorado target area, including Boulder.

They ran 101 hindcasts of two versions of the model: one based on realistic current conditions that takes human-induced changes to the atmosphere and the associated climate change into account, and one that removed the portion of observed climate change attributed to human activities. The difference between the results was then attributed to these human activities. The human influence was found to have increased the magnitude of heavy rainfall by 30 percent. The authors found that this increase resulted in part from the ability of a warmer atmosphere to hold more water.
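The comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' analysis code: the rainfall totals are synthetic numbers from a random generator, and the names `percent_change`, `factual` and `counterfactual` are hypothetical. Only the ensemble size of 101 comes from the study.

```python
import random
from statistics import mean


def percent_change(factual, counterfactual):
    """Percent change of the factual ensemble mean relative to the counterfactual mean."""
    base = mean(counterfactual)
    return 100.0 * (mean(factual) - base) / base


# Synthetic stand-ins for per-member rainfall totals (arbitrary units).
# These are NOT model output; they only make the sketch runnable.
rng = random.Random(0)
N_MEMBERS = 101  # ensemble size reported in the article

# "Counterfactual": simulations with the human-attributed change removed.
counterfactual = [rng.gauss(100.0, 10.0) for _ in range(N_MEMBERS)]
# "Factual": simulations under realistic current conditions.
factual = [rng.gauss(130.0, 10.0) for _ in range(N_MEMBERS)]

change = percent_change(factual, counterfactual)
print(f"Ensemble-mean rainfall change: {change:.1f}%")
```

Averaging over many members before taking the difference is what lets a change be attributed to the altered conditions rather than to run-to-run variability in any single simulation.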

“This event was typical in terms of how the storm sent water to the area, but it was unusual in terms of the amount of water and the timing,” said co-author Dáithí Stone, also of Berkeley Lab. “We know that the amount of water air can hold increases by about 6 percent per degree Celsius increase, which led us to expect that rainfall would have been 9-15 percent higher, but instead we found it was 30 percent higher.”
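The arithmetic behind that expectation can be written out as a short sketch. It assumes simple linear scaling at 6 percent per degree Celsius. The warming values of 1.5 and 2.5 °C are not stated in the article; they are inferred here only because they reproduce the quoted 9-15 percent range. The function name `expected_increase` is hypothetical.

```python
RATE_PER_DEG_C = 6.0      # percent more water per degree Celsius, from the quote
MODELED_INCREASE = 30.0   # percent increase in heavy rainfall found by the study


def expected_increase(warming_deg_c, rate=RATE_PER_DEG_C):
    """Expected percent increase in rainfall under linear moisture scaling."""
    return rate * warming_deg_c


# Assumed warming values (not from the article), chosen to match the 9-15% range.
for warming in (1.5, 2.5):
    expected = expected_increase(warming)
    ratio = MODELED_INCREASE / expected
    print(f"{warming} °C warming: expected {expected:.0f}%, "
          f"modeled increase is {ratio:.1f}x that")
```

The gap between the expected and modeled figures is the part of the increase that moisture scaling alone does not account for.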

The results perplexed the team initially as the answers were turning out to be more complicated than they originally postulated – the storm was more violent in terms of both wind and rain.

“We had expected the moist air hitting the mountain range to be ‘pushing’ water out of the air,” said lead author Pardeep Pall. “What we had not realized was that the rain itself would also be ‘pulling’ more air in. Air rises as it is raining, and that in turn pulled in more air from below, which was wet, producing more rain, causing more air to rise, pulling in more air, and so on.”

The greater rainfall in turn led to more flooding and more damage. Photos from the storm showed many cars wrecked or stranded as roads and bridges were washed away. Damage to roadways alone was estimated at $100-150 million.

“The increase in precipitation was greater than the warming alone would have predicted,” Stone said. “Using the local dynamical model, we found that the ‘storm that was’ was more violent than the ‘storm that might have been,’ something we hadn’t hypothesized.”

Christina Patricola, co-author and research scientist in the lab’s Climate and Ecosystem Sciences Division, who was working at Texas A&M when doing the study, said understanding extreme weather is important because the way we experience climate, for instance through weather-related damage, tends to be dominated by extreme weather. However, the nature of such events is also hard to understand because they are so rare. Event attribution studies like the one described in the paper can help lead to improved understanding.

The authors emphasized that the study is not intended to predict such events in the future.

“This was a very rare event and remains so, and we’re not making predictions with this work,” Stone said. “The exact event won’t happen again, but if we get the same sort of weather pattern in a climate that is even warmer than today’s, then we can expect it to dump even more rain.” But beyond the increased amount of precipitation, Wehner adds, “this study more generally increases our understanding of how the various processes in extreme storms can change as the overall climate warms.”

Despite the understanding gained through this study, many questions about extreme weather events remain.

“Our climate modeling framework opens the door to understanding other types of extreme weather events,” said Patricola. “We are now investigating how humans may have influenced tropical cyclones. Advances in supercomputing make it feasible to run simulations that can reveal what is happening inside storm clouds.”

The models were run as part of the Calibrated and Systematic Characterization, Attribution, and Detection of Extremes (CASCADE) project at Berkeley Lab, using supercomputers at the National Energy Research Scientific Computing Center (NERSC), a DOE Office of Science User Facility located at Berkeley Lab.

Other authors of the paper are Christopher Paciorek of the University of California, Berkeley; and CASCADE program head William Collins of Berkeley Lab.

Source: LBNL