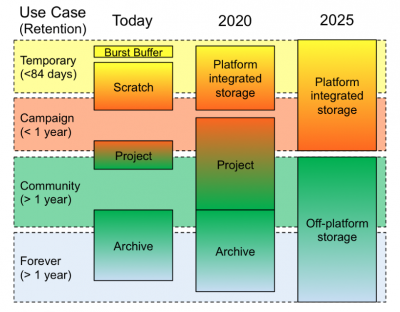

Evolution of the NERSC storage hierarchy between 2017, when the Storage 2020 report was published, and 2025.

NERSC recently unveiled its new Community File System (CFS), a long-term data storage tier developed in collaboration with IBM that is optimized for capacity and manageability.

The CFS replaces NERSC’s Project File System, a data storage mainstay at the center for years that was designed more for performance and input/output than for capacity or workflow management. But as high performance computing edges closer to the exascale era, the data storage and management landscape is changing, especially in the science community, noted Glenn Lockwood, acting group lead of NERSC’s Storage Systems Group. “In the next few years, the explosive growth in data coming from exascale simulations and next-generation experimental detectors will enable new data-driven science across virtually every domain. At the same time, new nonvolatile storage technologies are entering the market in volume and upending long-held principles used to design the storage hierarchy.”

Multi-tiered Approach

In 2017, these emerging challenges prompted NERSC and others in the HPC community to publish the Storage 2020 report, which outlines a new, multi-tiered data storage and management approach designed to better accommodate the next generation of HPC and data analytics. Thus was born the CFS, a disk-based layer between NERSC’s short-term Cori scratch file system and the High Performance Storage System tape archive that supports the storage of, and access to, large scientific datasets over the course of years. It is essentially a global file system that facilitates the sharing of data between users, systems, and the outside world, providing a single, seamless user interface that simplifies the management of these “long-lived” datasets. It serves primarily as a capacity tier as part of NERSC’s larger storage ecosystem, storing data that exceeds temporary needs but should be more readily available than the tape archive tier.

With an initial capacity of 60PB of data (compared to the Project File System’s 11PB, and expanding to 200PB by 2025) and aggregate bandwidth of more than 100GB/s for streaming I/O, “CFS is by far the largest file system NERSC currently has – twice the size of Cori scratch,” said NERSC’s Kristy Kallback-Rose, who is co-leading the CFS project with Lockwood and gave a presentation on the new system at the recent Storage Technology Showcase in Albuquerque, NM.

“The strategy is we have this place where users with large data requirements can store longer term data, where users can share that data, and where community data can live,” Lockwood said. “High capacity, accessibility, and availability were the key drivers for CFS.”

Working with IBM to develop and refine the system has been key to its success, Lockwood and Kallback-Rose emphasized. The CFS is based on IBM’s Spectrum Scale, a high-performance clustered file system designed to manage data at scale and perform archive and analytics in place. Spectrum Scale is the parallel file system at the heart of IBM Elastic Storage Server (ESS), a modern implementation of software-defined storage that combines Spectrum Scale software with IBM POWER8 processor-based I/O-intensive servers and dual-ported storage enclosures. Spectrum Scale increases system throughput as the system grows while still providing a single namespace. This eliminates data silos, simplifies storage management, and delivers high performance within an easily scalable storage architecture.

“IBM is delighted to be partnering with NERSC, our decades-old client, to provide our latest storage solutions that will accelerate their advanced computational research and scientific discoveries,” said Amy Hirst, Director, Spectrum Scale and ESS Software Development. “With this IBM storage infrastructure, NERSC will be able to provide its users with a high performance file system that is highly reliable and will allow for seamless storage growth to meet their future demands.”

End-to-End Data Integrity

CFS has a number of additional features designed to enhance user interaction with their data, Kallback-Rose noted. For example, every NERSC project has an associated Community directory that offers a “snapshot” capability that gives users a seven-day history of their content and any changes made to that content. In addition, the system offers end-to-end data integrity; this means that, from client to disk, there are checks along the way to ensure that, for instance, any bits that are removed are those the user intended to be removed.
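The idea of checking data at each hop between client and disk can be illustrated with a small sketch. This is not how Spectrum Scale or the CFS implements end-to-end integrity; the function names (`send`, `store`, `read`), the use of SHA-256, and the dictionary standing in for a disk are all hypothetical choices made for illustration only.

```python
import hashlib


class IntegrityError(Exception):
    """Raised when data does not match the checksum that travelled with it."""


def checksum(data: bytes) -> str:
    # SHA-256 is an illustrative choice, not the checksum the CFS uses.
    return hashlib.sha256(data).hexdigest()


def send(data: bytes) -> tuple[bytes, str]:
    # Client side: compute the checksum before the data leaves the client.
    return data, checksum(data)


def store(payload: bytes, expected: str, disk: dict, key: str) -> None:
    # Server side: re-verify before writing, so corruption in transit is caught.
    if checksum(payload) != expected:
        raise IntegrityError(f"payload for {key!r} was altered in transit")
    # Keep the checksum next to the data so later reads can be verified too.
    disk[key] = (payload, expected)


def read(disk: dict, key: str) -> bytes:
    # Read path: verify again, so corruption at rest is caught.
    payload, expected = disk[key]
    if checksum(payload) != expected:
        raise IntegrityError(f"stored data for {key!r} no longer matches")
    return payload
```

In this toy model a write succeeds only if the bytes that arrive match the checksum computed on the client, and a read succeeds only if the stored bytes still match the checksum saved with them.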

“This speaks to the goal of making the CFS a very reliable place to store data,” Lockwood said. “There are a lot of features to protect against data corruption and data loss due to service disruption. Your data, once it is in the CFS, it will always be there in the way that you left it.”

Another important aspect of the CFS is that, in terms of data storage, it is designed to outlive NERSC’s supercomputers. “When Cori is decommissioned, all the data in it will be removed,” Lockwood said. “And when Perlmutter arrives, it will have no data in it. But the CFS, and all the data stored there, will be there throughout these and future transitions.”

The data migration from the Project File System to the CFS, which occurred in early January, took only three days, and NERSC users are so far enthusiastic about the new system’s impact on their workflows. For example, being able to adjust quotas on a per-directory basis makes administering shared directory space much easier, according to Heather Maria Kelly, a software developer with SLAC who is involved with the Large Synoptic Survey Telescope (LSST) Dark Energy Science Collaboration. “Previously we had to rely on the honor system to control disk use, and too often users would inadvertently over-use both disk and inode allocations. CFS frees up our limited staff resources,” she said.

In addition, the ability to receive disk allocation awards “made a huge difference in terms of our project’s ability to utilize NERSC for our computational needs,” Kelly said. “The disk space we received will enable our team to perform the simulation and data processing necessary to prepare more fully for LSST’s commissioning and first data release.”

“The Community File System gives our users a much needed boost in data storage and data management capabilities, enabling advanced analytics and AI on large scale online datasets,” said Katie Antypas, Division Deputy and Data Department Head at NERSC. “This is a crucial step for meeting the DOE SC’s mission science goals.”

Source: NERSC