The June 2014 TOP500 list was just announced at ISC’14. For those of us who follow such things, it should look very familiar, as this listing includes the same top nine systems as last time.

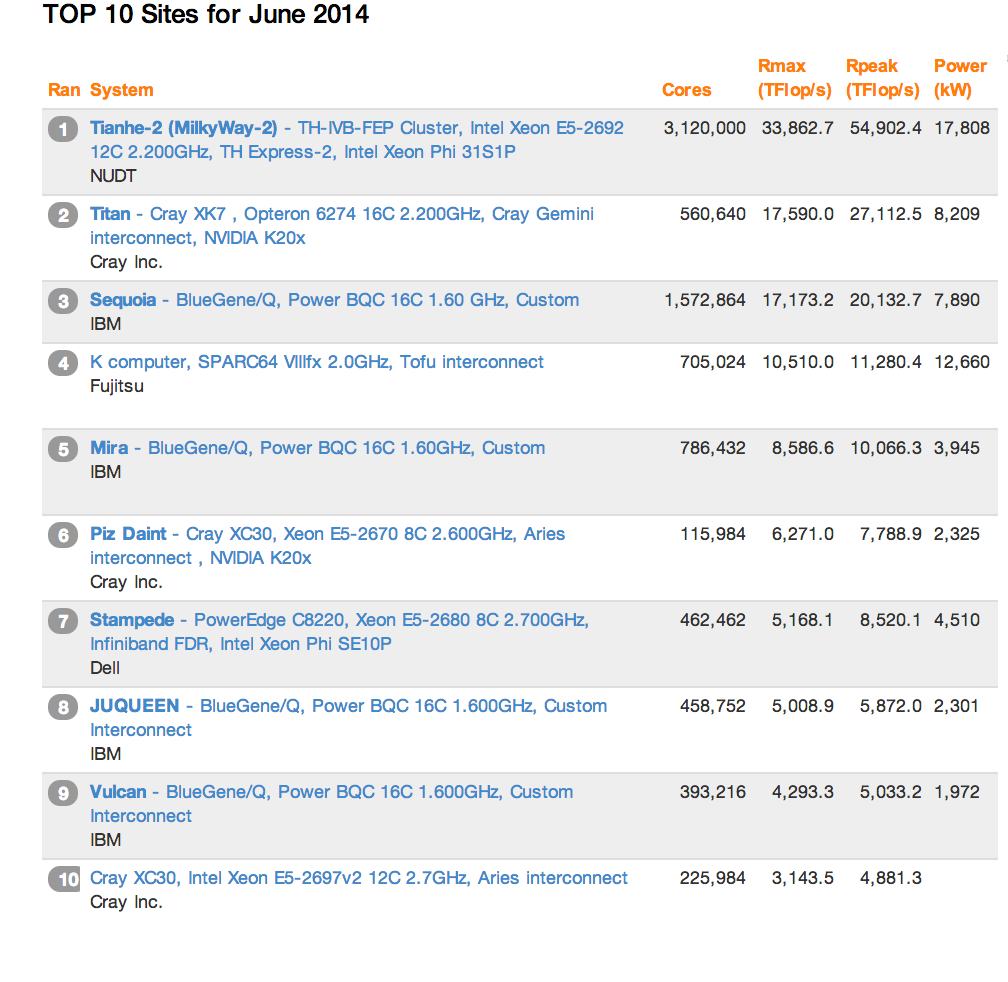

For the third consecutive list, Tianhe-2 remains the world’s No. 1 system with a performance of 33.86 Petaflops on the Linpack benchmark. The US entries, Titan and Sequoia, come in at number two and three, respectively, followed by the formidable K computer in Japan at number four.

To me, what this TOP10 list shows is that it is very lonely at the top if you are looking for new blood. The only new entry was at number 10 — a 3.14 Pflop/s Cray XC30 installed at an undisclosed U.S. government site.

According to TOP500, the overall growth rate of all the systems is at a historical low. Does this mean that Moore’s Law is running out of gas? I would say no, but it is getting harder to get these big clusters to scale without running into power requirements that become impractical. For most sites, the 17 megawatts that it takes to run a top machine like Tianhe-2 is simply out of reach, as that translates into roughly $17 million per year in electricity just to run the thing.
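To see where a figure like that comes from, here is a rough back-of-the-envelope sketch. It only rearranges the two numbers quoted above (17 megawatts and $17 million per year) to show the electricity price they imply. The function names are made up for illustration, and the sketch assumes the machine draws full power around the clock, which a real site may not.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(power_mw: float) -> float:
    """Energy used in one year at a constant draw, in kilowatt-hours."""
    return power_mw * 1000 * HOURS_PER_YEAR


def implied_price_per_kwh(power_mw: float, annual_cost_usd: float) -> float:
    """Electricity price (USD per kWh) implied by a power draw and a yearly bill."""
    return annual_cost_usd / annual_energy_kwh(power_mw)


# Figures quoted in the article: 17 MW and $17 million per year.
energy = annual_energy_kwh(17)
price = implied_price_per_kwh(17, 17_000_000)

print(f"Annual energy: {energy:,.0f} kWh")
print(f"Implied price: ${price:.3f} per kWh")
```

In other words, the article's numbers work out to about 11 cents per kilowatt-hour, or roughly $1 million per megawatt per year. Actual rates vary widely by site and contract, so treat this as a rule of thumb rather than a quote.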

So before we get too frustrated, it is important to mention that yes, bigger systems are in the works. The 150 petaflop CORAL systems will be coming to the labs in 2017, and the next generation of the K computer could best 100 petaflops as well.

Meanwhile, the vendors have exciting technologies in the works that will enable these kinds of leaps at the top while also making Petascale machines much more accessible. The new HP Apollo 8000 systems can do a Petaflop in just four racks (without accelerators), and that kind of configuration is much more within the realm of possibility for your typical datacenter to handle. This notion of “accessible Petascale” is what gets a lot of scientists excited.

In terms of country rankings, the United States remains the top country in overall systems with 233, down from 265 on the November 2013 list. The number of Chinese systems on the list rose from 63 to 76, giving the Asian nation nearly as many supercomputers as the UK (30), France (27), and Germany (23) combined. Japan also increased its showing, up to 30 from 28 on the previous list.

Highlights:

- Total combined performance of all 500 systems has grown to 274 Pflop/s, compared to 250 Pflop/s six months ago and 223 Pflop/s one year ago. This increase in installed performance also exhibits a noticeable slowdown in growth compared to the previous long-term trend.

- There are 37 systems with performance greater than a Pflop/s on the list, up from 31 six months ago.

- The No. 1 system, Tianhe-2, and the No. 7 system, Stampede, use Intel Xeon Phi processors to speed up their computational rate. The No. 2 system, Titan, and the No. 6 system, Piz Daint, use NVIDIA GPUs to accelerate computation.

- A total of 62 systems on the list are using accelerator/co-processor technology, up from 53 in November 2013. Forty-four of these use NVIDIA chips, two use ATI Radeon, and there are now 17 systems with Intel MIC technology (Xeon Phi). The average number of accelerator cores for these 62 systems is 78,127 cores/system.

- Intel continues to provide the processors for the largest share (85.4 percent) of TOP500 systems. The share of IBM Power processors remains at 8 percent, while the AMD Opteron family is used in 6 percent of the systems, down from 9 percent on the previous list.

- Ninety-six percent of the systems use processors with six or more cores and 83 percent use eight or more cores.

- HP has the lead in systems with 182 (36 percent), compared to IBM with 176 (35 percent). Six months ago, HP had 196 systems (39 percent) and IBM had 164 (33 percent). In the system category, Cray remains third with 10 percent (51 systems).
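The slowdown mentioned in the first highlight is easy to check with a little arithmetic. The sketch below is illustrative only: it uses the three combined-performance totals quoted in that highlight (223, 250, and 274 Pflop/s), and `percent_growth` is a made-up helper name, not anything published by TOP500.

```python
def percent_growth(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new - old) / old * 100.0


# Combined performance of all 500 systems in Pflop/s, as quoted in the highlights.
june_2013 = 223.0
november_2013 = 250.0
june_2014 = 274.0

first_half = percent_growth(june_2013, november_2013)
second_half = percent_growth(november_2013, june_2014)
full_year = percent_growth(june_2013, june_2014)

print(f"June 2013 -> November 2013: {first_half:.1f}%")
print(f"November 2013 -> June 2014: {second_half:.1f}%")
print(f"June 2013 -> June 2014:     {full_year:.1f}%")
```

By these figures, the list grew about 12.1 percent in the earlier six-month period but only about 9.6 percent in the most recent one, for roughly 22.9 percent over the full year.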
