Over at the Altair Blog, Bill Nitzberg has started a series of posts looking at the road to Exascale. He starts by looking back at the trends that have led us to this point, concluding that Exascale infrastructure will require advances in four areas: scale, speed, resilience, and power management.

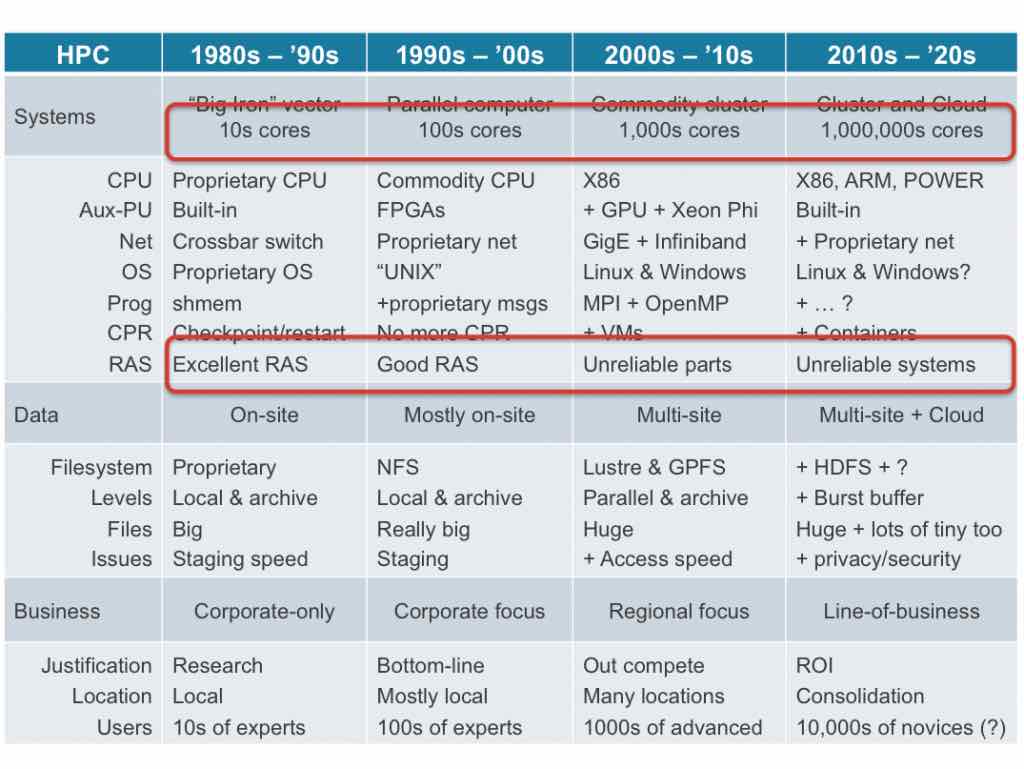

Actually, scale, speed, resilience, and power management are interdependent. With greater scale comes the need for greater software efficiency (speed) and greater power. The resilience connection comes from the need to keep costs affordable: as systems have increased in scale, the industry has moved from using a small set of proprietary (expensive) components to using a very large set of commodity (inexpensive) components. The thing about inexpensive commodity components is that they are also less reliable. (That’s one reason they are less expensive!)

Altair is playing an important role here, both by scaling up our HyperWorks solvers to provide accurate, repeatable results at huge scale, and by providing PBS Works (my main area of focus). In particular, PBS Professional provides job scheduling and workload management – “must have” core capabilities for every HPC system. It ensures HPC goals are met by enforcing site-specific use policies, enabling users to focus on science and engineering rather than IT, and optimizing utilization of hardware, licenses, and power to minimize waste.