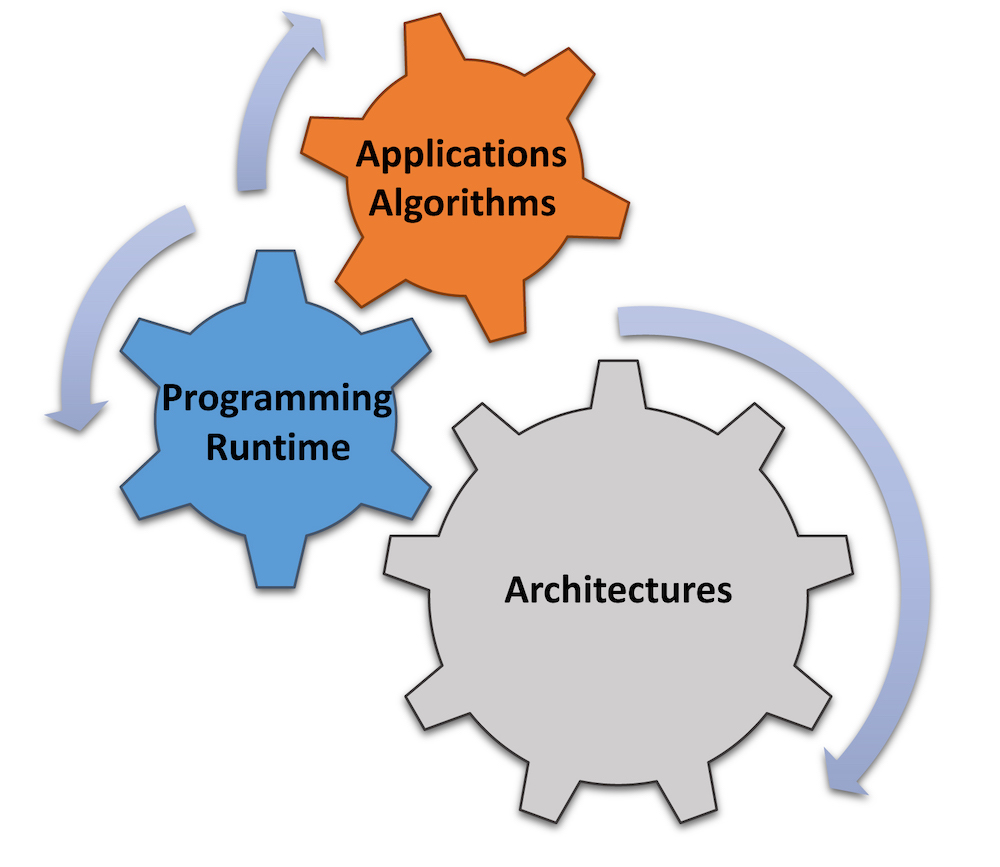

The Artificial Intelligence-focused Architectures and Algorithms concept has applications, algorithms, programming runtime and architectures all working together in the center featuring Sandia National Laboratories, Pacific Northwest National Laboratory and the Georgia Institute of Technology. (Graphic design by Roberto Gioiosa and Siva Rajamanickam)

Sandia National Laboratories, Pacific Northwest National Laboratory (PNNL) and the Georgia Institute of Technology are launching a research center that combines hardware design and software development to improve artificial intelligence technologies that will ultimately benefit the public. The Department of Energy Office of Advanced Scientific Computing Research will provide $5.5 million over three years for the research effort, called Artificial Intelligence-focused Architectures and Algorithms (ARIAA).

“The center will focus on the most challenging basic problems facing the young field, with the intention of speeding advances in cybersecurity, electric grid resilience, physics and chemistry simulations and other DOE priorities,” said Sandia project lead Siva Rajamanickam, an expert in high-performance computing. “A codesign center is a wonderful opportunity because people of diverse backgrounds (hardware designers, theoretical computer scientists, mathematicians and domain scientists) come together to develop solutions to a very challenging problem, the codesign of machine learning accelerators.”

The promise of AI

Artificial intelligence and its subfield, machine learning, allow computer systems like those in self-driving cars to learn automatically from experience without being explicitly programmed. Such technology can perform tasks that formerly only a human could do: see, identify patterns, inform decisions and respond with actions.

Special-purpose computing devices focused on such machine-learning tasks should encourage rapid deployment of these technologies in several fields, says Rajamanickam. Designing these devices, and influencing their design elsewhere, is important to position the United States as a leader in this emerging field, he says.

Not a physical facility but a collaborative environment, the new center is intended to encourage researchers at the three locations, each with their own specialty, to simulate and evaluate artificial intelligence hardware when employed on current or future supercomputers. Researchers also should be able to improve AI and machine-learning methods as they replace or augment more traditional computation methods.

The center will work in close collaboration with DOE’s newly formed Artificial Intelligence and Technology Office, which was created by Secretary of Energy Rick Perry to coordinate the department’s artificial intelligence work and accelerate the research, development and adoption of AI to impact people’s lives in a positive way.

“We welcome the center’s announcement,” said AITO senior adviser Dan Wilmot. “Partnerships between academia and DOE’s national labs will be essential to our success in realizing the unlimited potential of AI to advance our core missions.”

Sandia’s computing history

Sandia has a background in high-performance computing. Its researchers created the first parallel processing supercomputer, the Paragon; the first teraflop computer, ASCI Red; and the supercomputer Red Storm used by the U.S. military to shoot down an errant satellite traveling at 17,000 miles per hour, which was described as “hitting a bullet with a bullet.” The Astra supercomputer recently installed at Sandia is the first and fastest supercomputer to use Arm-based processors, thus widening the supplier field for supercomputer components. Arm processors previously had been used exclusively for low-power mobile computers, including cell phones and tablets.

Now, as part of the ARIAA center, Sandia will develop methods to use emerging machine-learning devices effectively and provide access to computer facilities and testbeds to AI researchers.

“Sandia is extremely well-positioned to address the challenges in the co-design of AI and machine-learning accelerators for DOE’s broad set of applications,” said Jim Stewart, senior manager and Sandia’s immediate connection with DOE’s Advanced Scientific Computing Research. “We’re excited to be partnering with PNNL and Georgia Tech in this new multiyear effort that will enable AI and machine learning for science and engineering at unprecedented scales.”

A focus of the center will be sparse computations, a type of computation that exploits the principle that in real life there might be many possible interactions but only a few that affect the outcome of a problem. For example, there might be millions or even billions of users on a social media site, but a user cares about updates only from a few hundred friends.

“Sparse computations will be a focus of the ARIAA center because the method greatly reduces the number of computations on problems with large amounts of data,” said Rajamanickam. “It is highly desirable to several computational areas of interest to DOE.”
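To make the idea concrete, here is a minimal Python sketch of a sparse matrix-vector product. It is an illustration only and does not represent the center's software or hardware. The tiny "who follows whom" matrix, the function names and the compressed sparse row (CSR) layout are all assumptions chosen for the example. The sparse version stores and multiplies only the nonzero entries, so its work scales with the number of actual connections rather than with every possible pair of users.

```python
def to_csr(dense):
    """Convert a dense row-major matrix into CSR arrays (hypothetical helper)."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        for j, v in enumerate(row):
            if v != 0:
                values.append(v)      # keep only nonzero entries
                col_idx.append(j)     # remember which column each came from
        row_ptr.append(len(values))   # marks where the next row starts
    return values, col_idx, row_ptr


def csr_matvec(values, col_idx, row_ptr, x):
    """Multiply a CSR matrix by vector x, touching nonzeros only."""
    y = [0.0] * (len(row_ptr) - 1)
    for i in range(len(y)):
        for k in range(row_ptr[i], row_ptr[i + 1]):
            y[i] += values[k] * x[col_idx[k]]
    return y


def dense_matvec(dense, x):
    """Reference dense product: one multiplication per matrix entry."""
    return [sum(a * b for a, b in zip(row, x)) for row in dense]


# Illustrative data: entry [i][j] is 1 if user i follows user j.
follows = [
    [0, 1, 0, 0],
    [1, 0, 0, 1],
    [0, 0, 0, 0],
    [0, 0, 1, 0],
]
updates = [1.0, 2.0, 3.0, 4.0]  # made-up number of updates posted by each user

values, col_idx, row_ptr = to_csr(follows)
feed_sparse = csr_matvec(values, col_idx, row_ptr, updates)
feed_dense = dense_matvec(follows, updates)

dense_mults = len(follows) * len(follows[0])  # multiplications in the dense product
sparse_mults = len(values)                    # multiplications in the sparse product
print(feed_sparse, dense_mults, sparse_mults)
```

Both products give the same result, but the dense version performs one multiplication per matrix entry while the sparse version performs one per stored connection. On a matrix with billions of users and a few hundred connections each, that gap is what makes the computation tractable.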

Other contributors

PNNL, the lead lab, with its principal investigator Roberto Gioiosa, has expertise in simulations related to power grids, chemistry and cybersecurity. It has a history of research in computer architecture and programming models and owns a variety of computing resources, including systems for testing emerging architectures.

Georgia Tech, with its principal investigator Tushar Krishna, has experience in developing custom hardware accelerators for machine learning. The institution will focus on using this hardware for sparse linear algebra.

“The foundation of the partnerships reflected in this center were made possible by the strategic collaboration between Sandia and Georgia Tech over the past few years,” Rajamanickam said.