Over at Colfax Research, Andrey Vladimirov, Vadim Karpusenko, and Tony Yoo have posted a new whitepaper titled "File I/O on Intel Xeon Phi Coprocessors: RAM disks, VirtIO, NFS and Lustre."

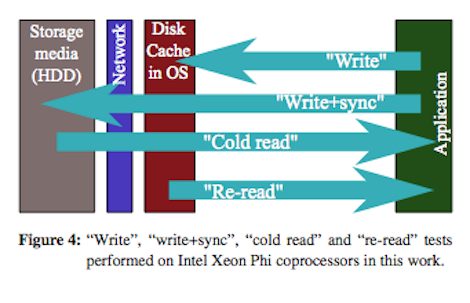

The key innovation brought about by Intel Xeon Phi coprocessors is the possibility of porting most HPC applications to manycore computing accelerators without code modification. One of the reasons this is possible is support for file input/output (I/O) directly from applications running on coprocessors. These facilities allow seamless use of manycore accelerators in common HPC tasks such as application initialization from file data, saving running output, checkpointing and restarting, and data post-processing and visualization. This paper provides the information and benchmarks needed to choose the best file system for a given application from the available options: RAM disks, virtualized local hard drives, and distributed storage shared with NFS or Lustre. We report benchmarks of I/O performance and parallel scalability on Intel Xeon Phi coprocessors, along with the strengths and limitations of each option. In addition, the paper presents the system administration procedures necessary for using each file system on coprocessors, including bridged networking and InfiniBand configuration, software installation, and MPSS image modifications. We also discuss the applicability of each storage option to common HPC tasks.
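For readers who want a rough feel for the kind of comparison the abstract describes, here is a minimal sketch of a sequential write/read throughput probe. It is not the methodology used in the whitepaper; the function name `measure_write_read`, the default sizes, and the use of the system temporary directory are all assumptions made for illustration. Pointing it at different mount points (a RAM disk, an NFS share, and so on) would give a crude first comparison.

```python
import os
import tempfile
import time


def measure_write_read(directory, size_mb=16, block_kb=1024):
    """Return (write_MBps, read_MBps) for one sequential pass in `directory`.

    Hypothetical helper for illustration only; not taken from the whitepaper.
    """
    block = b"\0" * (block_kb * 1024)
    n_blocks = max(1, (size_mb * 1024) // block_kb)
    total_mb = n_blocks * block_kb / 1024.0

    # Create a scratch file inside the file system under test.
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as f:
            for _ in range(n_blocks):
                f.write(block)
            f.flush()
            os.fsync(f.fileno())  # ask the OS to push the data to storage
        write_s = time.perf_counter() - start

        # Note: this read is likely served from the page cache, so it tends
        # to overstate what the underlying storage can deliver.
        start = time.perf_counter()
        with open(path, "rb") as f:
            while f.read(len(block)):
                pass
        read_s = time.perf_counter() - start
    finally:
        os.remove(path)

    return total_mb / write_s, total_mb / read_s


if __name__ == "__main__":
    # The temporary directory is a stand-in; substitute the mount point to test.
    write_rate, read_rate = measure_write_read(tempfile.gettempdir())
    print(f"write: {write_rate:.1f} MB/s, read: {read_rate:.1f} MB/s")
```

A single sequential pass from one process says nothing about parallel scalability, which the paper benchmarks separately, so treat the numbers as a sanity check rather than a result.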