In this special guest feature from Scientific Computing World, Robert Roe investigates the use of collaboration and open source programming models to drive computational modeling of weather and climate research.

The complexity and scale of weather and climate simulation have led weather centers and research groups to turn to their own community, either through direct collaboration or open source software initiatives, to increase performance and usability of these hugely complex models.

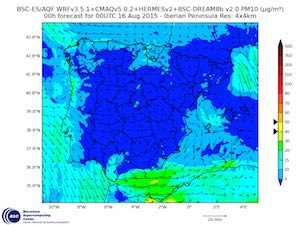

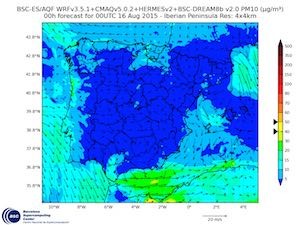

At the Barcelona Supercomputing Centre in Spain, one model, called Caliope, helps researchers forecast air quality using a combination of different models that have all been unified into this single piece of software. Caliope consists of a model for emissions (Hermesv2), a meteorological model (WRF-ARW v3.5.1), a chemical transport model (CMAQ v5.0.2), and a mineral dust atmospheric model (BSC-Dream8bv).

Kim Serradell, co-leader of the Computer Earth Sciences group (CES) at the BSC, stated: ‘All of these systems we are applying in six different domains. We go from the coarse domain of Europe at a resolution of 30 kilometers, and then we are doing nesting from Europe to the Iberian Peninsula. We are very interested in running these models on the European scale at 1 kilometer. We are running at that scale in Barcelona, Madrid, and also the south of the peninsula.’

All of this work creates a huge demand for computational resources, which is why many of these centers need their own HPC cluster. At the end of 2014, the UK’s Met Office announced that it would purchase one such cluster, awarding a four-year contract valued at around £97 million to Cray for its next HPC and storage system. To give an idea of the scale, this was the largest single order that Cray had received outside of the USA.

Per Nyberg, director of business development at Cray, sees such investment as a continuation of a trend towards recognizing the true value of this kind of research. Huge sums of money can be saved through accurate disaster protection, but regular forecasts of weather and air pollution are equally important services provided through these centers.

Nyberg said: “One of the main drivers for all of this spending is the impact on society and economies around the world. I have been working with weather centers for around two and a half decades now; that area of looking at an ROI and looking at it as a business case for these investments – that is something that has really become prominent only in the last few years.”

Resilience and redundancy

Nyberg continued: ‘When you look at the models themselves, or the forecast products or deliverables, maybe you are looking at hyperlocal precipitation to forecast flood warnings, for example. You need to be at a spatial resolution that is very fine, and then you also obviously have a need to deliver that product as quickly as possible.’

Delivering these regular forecasts at increasing resolutions, or with integrated models that provide a more comprehensive view of the physics involved, increases computational demand considerably. For operational centers like the Met Office, this task is made more difficult because they cannot afford even a small amount of downtime. Nyberg explained that, to overcome these challenges, resiliency and redundancy must be built into the initial contract requirements.

Nyberg said: “One critical aspect of this is that it is a multi-year contract, and that is quite typical for the larger weather services. Ultimately, what you are delivering is a weather forecasting capability over time.”

“It is almost standard now, at all of the main weather services, that they have two different and essentially mirrored systems that share a single pool of storage, so that you can switch over very quickly. Some mirroring goes on there also, mainly operational data, in case there is some kind of a failure within the storage infrastructure.”

To overcome the technical and operational challenges of using such large systems, computer scientists must strive to increase the performance of their code, but usability of the software is just as important, according to Francisco Doblas Reyes, earth sciences department director at the Barcelona Supercomputing Centre.

Optimizing run-times across different machines

Reyes explained that much of their work cannot be completed on the BSC supercomputer, because the simulations are just too big for the time that is available. This has led Reyes’ group to look out across Europe for more compute time on other top European HPC systems.

“The model and its underlying code must be optimized so that they can run efficiently on these platforms. The code must be optimized not only for the Mare Nostrum system, therefore; it must run efficiently on any cluster with sufficient computational power,” said Reyes.

Reyes continued: “The fact is, if we want to run these kind of experiments then we have to be ready to run them on different platforms and to port our system as quickly and efficiently as possible. So the scene is very different to the one ten years ago, when each institution would have its own computer and people would optimize their model to that specific platform.”

In order to speed up this process, the BSC team has developed a custom tool that automatically allocates resources from the system to attain optimal performance of the simulation.

Oriol Mula-Valls, computing earth sciences group leader, said: “We have developed a custom tool that is called Autosubmit (Autosubmit 3.0.6) that allows us to make the best usage of the computing resources that we have available.”

Mula-Valls continued: “Previously we were just trying to deploy the model and perform a small performance test in order to assess the number of processors needed for each component, so that we could determine the best performance.”
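The kind of small performance test Mula-Valls describes can be pictured with a short sketch. It is purely illustrative and is not Autosubmit code or the BSC workflow: the timing figures, the 70 per cent efficiency threshold, and every name in it are assumptions invented for this example. The idea is simply to time one model component at a few processor counts and keep the largest count that still scales acceptably.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingSample:
    """One timing from a short test run (hypothetical data)."""
    processors: int
    seconds_per_sim_day: float  # wall-clock seconds per simulated day


def parallel_efficiency(baseline: ScalingSample, sample: ScalingSample) -> float:
    """Measured speedup divided by the ideal (linear) speedup."""
    speedup = baseline.seconds_per_sim_day / sample.seconds_per_sim_day
    ideal = sample.processors / baseline.processors
    return speedup / ideal


def pick_processor_count(samples, min_efficiency=0.7):
    """Return the largest tested processor count that still meets the
    efficiency threshold, relative to the smallest tested count."""
    ordered = sorted(samples, key=lambda s: s.processors)
    baseline = ordered[0]
    best = baseline
    for sample in ordered[1:]:
        if parallel_efficiency(baseline, sample) >= min_efficiency:
            best = sample
    return best.processors


# Invented timings for a single model component.
samples = [
    ScalingSample(64, 400.0),
    ScalingSample(128, 210.0),
    ScalingSample(256, 120.0),
    ScalingSample(512, 90.0),
]

print(pick_processor_count(samples))
```

With these invented timings, the run on 512 processors falls to roughly 56 per cent efficiency, so the sketch settles on 256. A real coupled model would repeat the exercise for each component and then balance the components against one another.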

He went on to explain that the research has progressed to the point that they are now using the BSC performance tools to analyze the behavior of the model itself: ‘We try to tune the model, even modifying the code and reporting back to the developers,’ Mula-Valls concluded.

Reyes said: “This tool that Oriol was referring to, Autosubmit, is trying to make life a bit easier for the scientists. It offers an interface to the user which is uniform; it does not depend on the platform on which they are going to run their experiments. This really simplifies life a lot in this context, where you have resources distributed across many different HPC centers.”
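To make the idea of a uniform, platform-independent interface concrete, here is a minimal sketch. It does not reproduce Autosubmit's actual interface, which the article does not describe: the `JobRequest` class, the `build_submit_command` function, and the script name are hypothetical. The only real-world details assumed are the standard submission commands of two common schedulers, Slurm (`sbatch`) and LSF (`bsub`). The scientist describes the job once, and a thin layer translates it for whichever machine is available.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobRequest:
    """Platform-neutral description of a job (hypothetical structure)."""
    script: str
    processors: int
    wallclock_minutes: int


def _slurm_command(job: JobRequest) -> list:
    # Slurm: --ntasks sets the task count, --time accepts plain minutes.
    return ["sbatch", f"--ntasks={job.processors}",
            f"--time={job.wallclock_minutes}", job.script]


def _lsf_command(job: JobRequest) -> list:
    # LSF: -n sets the number of slots, -W the run limit in minutes.
    return ["bsub", "-n", str(job.processors),
            "-W", str(job.wallclock_minutes), job.script]


# One entry per supported scheduler; a new platform needs only a new entry.
_BUILDERS = {"slurm": _slurm_command, "lsf": _lsf_command}


def build_submit_command(scheduler: str, job: JobRequest) -> list:
    """Translate one job description into a scheduler-specific command.
    The command is only built here, never executed."""
    try:
        return _BUILDERS[scheduler](job)
    except KeyError:
        raise ValueError(f"unsupported scheduler: {scheduler}") from None


job = JobRequest(script="run_model.sh", processors=128, wallclock_minutes=60)
for name in ("slurm", "lsf"):
    print(name, " ".join(build_submit_command(name, job)))
```

The design choice is the point rather than the details: scheduler differences are kept behind a single function, so the experiment definition stays the same when the work moves to another center.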

Reyes said: “This is why we have the Computer Earth Sciences group. It provides computational and data support to all the other groups, and tries to provide solutions to some of the problems that are slowing down progress; not just in the department but also in the whole community working on weather, air quality, and climate research.”

Usability is a key factor for weather simulation and HPC in general, because software must be able to scale effectively for simulations of this size. The more developers can do to alleviate these challenges through performance tools, the more quickly a simulation can be completed.

Usability was a key aspect of the Met Office requirements for its new system from Cray, as Nyberg explained: “From a usability perspective, scalability is absolutely critical and one of the things that they need to be doing is implementing new science. The faster they can implement new science, the more immediate that return on investment.”

Nyberg continued: “Ultimately the benefit is improved forecast accuracy. One of the big trends especially at the larger weather services, and the Met Office is a leader in this, is this move towards a suite of seamless forecasting services – everything from emergency response, all the way through to mitigation policy and climate vulnerability assessments.”

Predicting storm surges

Regular forecasting relies heavily on the constant availability of systems and the usability of software, but easy-to-use, scalable software is equally important in disaster prevention, when a forecast must be prepared in advance to warn local communities.

A team of researchers at Louisiana State University (LSU) focuses on a very specific aspect of weather – simulating storm surges – but faces the same sorts of challenges associated with delivering more general weather forecasts: a short space of time and a very high resolution.

Dr Jim Chen, professor in coastal engineering from the department of civil and environmental engineering at Louisiana State University, said: ‘Here in Louisiana, we have seen a lot of hurricanes, including Hurricane Katrina. What we have been doing here at LSU is using models to forecast storm surges, but also waves on top of the surge that impact the coastal structure and coastal communities.’

The primary models they use are the Advanced Circulation model (ADCIRC) and SWAN, a wave model developed by the Delft University of Technology. Chen explained that these are open source models, which is crucial to LSU’s studies because it allows the team to update the models, integrating new physics or improving them by interacting with the community. Chen said: ‘A crucial point here is that we use those models, but we also improve those models because they are open source – that is the reason that we use them.’

“These models are very computationally intensive; it requires HPC resources to complete these kinds of runs, especially an approaching hurricane,” said Chen.

He went on to explain that it is not just the waves themselves that must be simulated but also a wave’s impact on the coast. Sediment transport and coastal erosion caused by a hurricane must also be simulated.

Chen concluded: “For impact studies, we also have sediment transport and water quality models. Hurricanes can cause erosion to the coast, but they can also transport sediment and there can be some sediment deposition on the wetlands; so you must look at the overall impacts, which requires large computational resources.”

Finding these computational resources is not always easy. Chen said that LSU had been very lucky: it had got involved with HPC more than a decade ago, and currently has three large HPC clusters in addition to several smaller systems.

“We have a number of clusters here at LSU: our latest are SuperMIKE2 and SuperMIC, which is a Xeon Phi cluster; we also have QB2, which is a statewide resource.”

Collaboration across different disciplines

At the BSC, Reyes faces similar challenges. Although the BSC has one of the largest supercomputers in Europe, his team still cannot get enough core hours to complete some of the largest simulations. Reyes said: ‘If we want to run them at very high resolution, we are talking – just for a single simulation – resources in the region of 10-20 million hours, which is not something you get from one day to the next.’
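A back-of-the-envelope calculation shows why such a request is hard to satisfy. The sketch below assumes that the 10-20 million hours are core hours, as the surrounding text suggests, and that the job runs continuously at perfect efficiency. The allocation of 2,048 cores is a hypothetical figure chosen for illustration, not one quoted by the BSC.

```python
def wallclock_days(core_hours: float, cores: int) -> float:
    """Days of uninterrupted running needed to consume a core-hour budget,
    assuming every core stays busy for the whole run."""
    return core_hours / cores / 24.0


# Hypothetical allocation size; the two budgets are the ends of the
# range quoted in the article.
cores = 2048
for budget in (10_000_000, 20_000_000):
    days = wallclock_days(budget, cores)
    print(f"{budget:,} core hours on {cores} cores: {days:.0f} days")
```

Under those assumptions, even the lower figure keeps 2,048 cores fully occupied for more than six months.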

Reyes was keen to stress that it is not just in the quest for computational resources that he must collaborate with European partners. They must also collaborate just to get their models to run efficiently, because there are so many different disciplines involved in this area of science, from physical scientists to computer scientists to climate experts.

Reyes highlighted that it can be a tough job to get all these different experts together, but the BSC has worked hard to generate a community of researchers from different disciplines that can work effectively on a common goal. He said: ‘Getting all those experts next to the climate modelers is something unique. It is not something that you find in other weather research or climate research groups in Europe. It is not easy to bring together people with such a different range of profiles.’

These intricate software models cannot be developed without collaboration, not only between scientists of different backgrounds but also between institutions, whether through direct collaboration or by building software through open source initiatives.

Open source aids collaboration

Chen from LSU has found that open source models were the key to being able to integrate the different physical models needed to develop a better understanding of storm surge physics and its impact on the coastal communities.

Chen said: “One example is that most models do not consider the effect of wetlands, of vegetation, on the storm surge and waves. Most places have sandy beaches and only a small area has a lot of wetlands. Louisiana is different.”

Chen continued: “The waves that are near the coast are non-linear; it is very hard to predict the way that they interact with structures, with coastal landscapes, forest and vegetation, so we have to develop a model called Cafunwave, which has the capability to simulate the waves near the coast. However, at the same time it can simulate the storm surge conditions, combining them into one model.”

He went on to explain that Louisiana has a fairly unusual geography due to the presence of the Mississippi River delta, with expansive wetlands and vegetation that have an impact in the event of a hurricane.

Chen concluded: “We can improve the physics; we can collaborate with other universities on combining certain aspects of other physics to design a model that provides a more realistic representation of the coastal systems.”

Legacy software

One thing that Mula-Valls stressed was that these collaborations mean that not everyone involved with the project has a wealth of HPC experience, and many projects deal with code that may be 20-30 years old.

Mula-Valls said: “You can find routines in the code from the 1980s or the 1990s so, in some cases, it is really ancient code that one has to maintain. It can be difficult to work with these models. But bringing all these experts here can provide another point of view, and it can make the code development a lot easier.”

Reyes said: “What I want to convey is that this is a community effort, and in that community we play a double role. One is to improve the science that goes into the models, but also we increasingly play the role of being one of the few places that can help these scientists, the physical scientists, to improve the performance of their codes and to teach them what they are doing wrong.”

At LSU, Chen has found that a similar model works for his research. Chen said: “A typical model will have around ten students, some of whom are from engineering disciplines and then two or three from computer science. We work together as a team, but actually it is the students that are gaining that experience and collaborating to get a lot of things done quickly.”

Reyes said: “I think one of the problems that has been overlooked in the past is how the users are going to interact with these very complex machines, and how they are going to run their experiments in an efficient way without wasting months trying to learn how to access that machine and how to get the data out of that machine.”

He concluded: “It is something where we really want to spend a lot of effort, not just making our models 10 per cent faster on a specific architecture, but also how to make the work of the physical scientists much faster because this means money. We need solutions that are not just applicable to the BSC but applicable all across Europe.”

This story appears here as part of a cross-publishing agreement with Scientific Computing World.