In this guest post, experts from Asetek discuss how to successfully implement liquid cooling in your HPC facility or data center.

As seen at ISC18 in Frankfurt, Germany, this month, HPC facilities are under increasing pressure to incorporate ever-higher-wattage nodes into their sites.

Providing high-reliability cooling for high-wattage nodes without reducing rack density is no easy task.

Reducing rack density in an air-cooled HPC cluster can mean longer interconnect distances and, as a result, greater latency. This makes liquid cooling of high-density CPU/GPU nodes appealing, but adopting it can be difficult for traditional air-cooled sites.

Because many liquid cooling approaches are one-size-fits-all solutions, it can be difficult to move to liquid cooling on an as-needed basis. What is required is an architecture flexible enough to accommodate a variety of heat rejection scenarios.

Asetek’s direct-to-chip liquid cooling provides a distributed cooling architecture to address the full range of heat rejection scenarios. It is based on low-pressure, redundant pumps and sealed-liquid-path cooling within each server node.

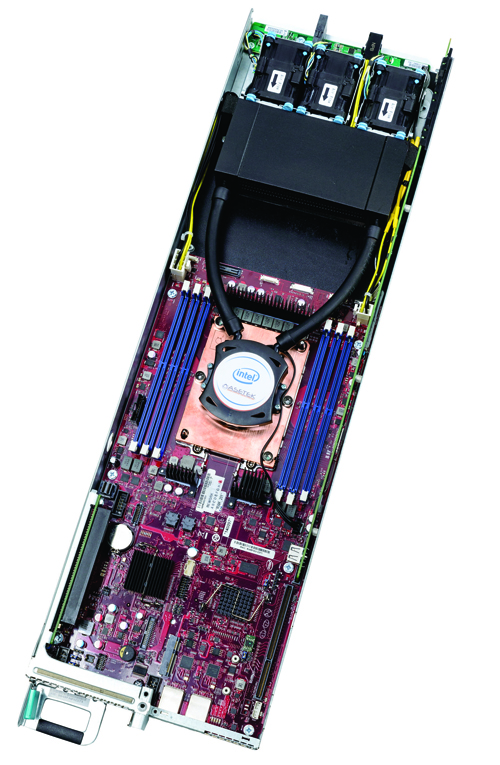

ServerLSL™

Unlike centralized pumping systems, this approach places coolers (integrated pumps/cold plates) within the server or blade nodes, where they replace the CPU/GPU air heat sinks and remove heat with hot water. This distributed pumping is the foundation for flexible heat capture, because the same in-node coolers can be applied to different heat rejection requirements.

The heat rejection options in the Asetek architecture adapt to both existing air-cooled data centers and liquid-cooled facilities.

Adding liquid cooling with no impact on data center infrastructure can be done with Asetek’s ServerLSL™, a server-level Liquid Assisted Air Cooling (LAAC) solution. With ServerLSL, the redundant liquid pump/cold plates are paired with a HEX (radiator) that is also in the node. Via the HEX, the captured heat is exhausted into the data center, where existing HVAC systems handle it. ServerLSL allows incorporation of the highest-wattage CPUs/GPUs, and racks can contain a mix of liquid-cooled and air-cooled nodes.

InRackLAAC™

Available in 2018, InRackLAAC™ places a shared HEX in a 2U “box” that is connected to a “block” of up to 12 servers. Because the HEX is removed from the individual nodes, greater component density is possible.
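As a rough sizing sketch for the block arrangement described above, the following Python snippet estimates how many shared HEX boxes a group of servers would need and how much rack space those boxes would occupy. The 12-server block size and the 2U box height come from the text; the function names are hypothetical, and the assumption that every box serves a full block whenever possible is ours, not Asetek's.

```python
import math

SERVERS_PER_BLOCK = 12  # "up to 12 servers" per shared HEX, per the article
HEX_BOX_HEIGHT_U = 2    # the shared HEX sits in a 2U box, per the article


def inrack_laac_boxes(server_count: int) -> int:
    """Minimum number of shared HEX boxes for the given number of servers.

    Hypothetical helper: assumes blocks are filled before a new box is added.
    """
    if server_count < 0:
        raise ValueError("server_count must not be negative")
    return math.ceil(server_count / SERVERS_PER_BLOCK)


def hex_rack_units(server_count: int) -> int:
    """Rack units taken up by the shared HEX boxes alone."""
    return inrack_laac_boxes(server_count) * HEX_BOX_HEIGHT_U


if __name__ == "__main__":
    for servers in (12, 13, 30):
        print(
            f"{servers} servers: {inrack_laac_boxes(servers)} HEX box(es), "
            f"{hex_rack_units(servers)}U of rack space"
        )
```

Actual layouts depend on server height, rack size and cabling, none of which this sketch models.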

When facilities water is routed to the racks, Asetek’s RackCDU D2C (Direct-to-Chip) can capture 60 to 80 percent of server heat into liquid, reducing data center cooling costs by over 50 percent and allowing 2.5x-5x increases in data center server density. Because hot water (up to 40°C) is used as the coolant, the system does not require expensive HVAC systems and can use inexpensive dry coolers.
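To make the 60 to 80 percent capture range concrete, here is a minimal Python sketch that splits a rack's heat load between the liquid loop and the room air. The 30 kW rack load is a hypothetical figure chosen only for illustration, and the function name is ours; only the capture fractions come from the text.

```python
def split_rack_heat(rack_power_kw: float, capture_fraction: float) -> tuple[float, float]:
    """Return (heat_to_liquid_kw, heat_to_air_kw) for one rack.

    capture_fraction is the share of server heat captured into liquid,
    e.g. 0.60 to 0.80 for the range quoted in the article.
    """
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture_fraction must be between 0 and 1")
    liquid_kw = rack_power_kw * capture_fraction
    air_kw = rack_power_kw - liquid_kw  # remainder still goes to room air
    return liquid_kw, air_kw


if __name__ == "__main__":
    RACK_POWER_KW = 30.0  # hypothetical rack load, not a figure from the article
    for fraction in (0.60, 0.80):
        liquid_kw, air_kw = split_rack_heat(RACK_POWER_KW, fraction)
        print(
            f"capture {fraction:.0%}: {liquid_kw:.1f} kW to liquid, "
            f"{air_kw:.1f} kW left for air cooling"
        )
```

The remaining air-side load is what the existing HVAC system would still have to handle. This sketch does not attempt to model the cost or density figures quoted above.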

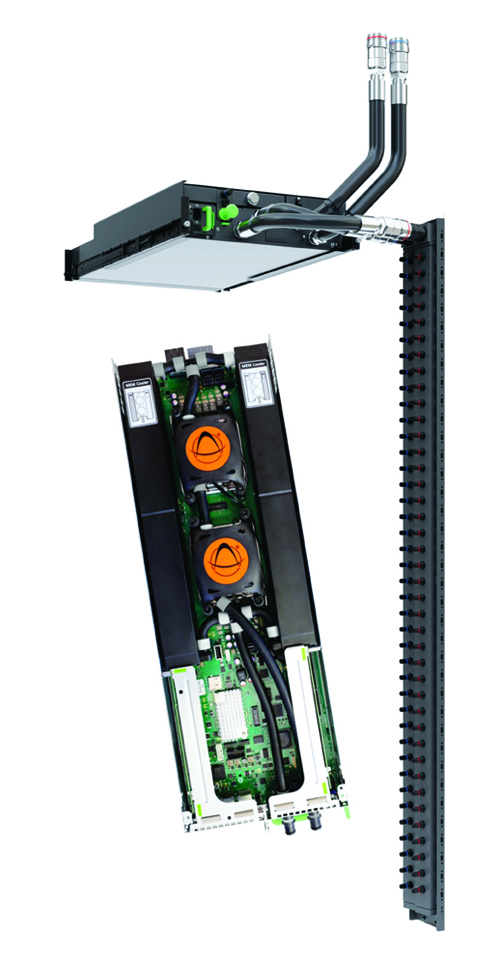

InRackCDU™

With RackCDU, the collected heat is moved via a sealed liquid path to heat exchangers, which transfer it into facilities water. RackCDUs come in two types to give data center operators additional flexibility.

InRackCDU™ is mounted in the rack along with the servers. Occupying 4U, it connects to nodes via Zero-U, PDU-style manifolds in the rack. Alternatively, VerticalRackCDU™ consists of a Zero-U rack-level CDU (Cooling Distribution Unit) mounted as a 10.5” extension at the rear of the rack.

The distributed pumping architecture at the server, rack, cluster and site levels delivers flexibility in the areas of heat capture, coolant distribution and heat rejection that other approaches do not.

Visit Asetek.com to learn more.