Sponsored Post

Innovative organizations that implement and use Artificial Intelligence (AI) and Machine Learning (ML) software are requiring their suppliers and vendors to “up the game” in the design and delivery of state-of-the-art servers. Inspur Electronic Information Industry Co., LTD is delivering new servers that address the most demanding performance requirements of companies that are bringing AI and ML into their workflows. Reducing the Total Cost of Ownership (TCO) while increasing the productivity of their teams is critical for CIOs and Line of Business leadership.

Inspur is a leading supplier of highly optimized servers that address a wide range of use cases. When designing new systems, it is critically important to incorporate the latest, state-of-the-art components. Inspur is committed to delivering a full line of servers built around NVIDIA A100 GPUs, positioning them as the most powerful AI servers in the industry. According to Inspur, it was the first supplier in the industry to launch a comprehensive line of AI servers supporting NVIDIA A100 GPUs. Customers can also be assured that they will be the earliest in the industry to receive shipments of these servers. The newly announced NF5488M5-D and NF5488A5 have taken the lead in shipments.

The NF5488M5-D and the NF5488A5 models are ideal for organizations that are integrating AI into their workflows. Both servers are designed for the most demanding applications, those that need extremely high performance in a compact and energy-efficient enclosure. This “extreme” design gives users the choice of the latest Intel Xeon Scalable Processors or the AMD EPYC Rome CPUs. The innovative mechanical design of these systems allows for up to eight NVIDIA A100 Tensor Core 40GB GPUs, each with 6912 CUDA cores, in a compact 4U system. Compared with the DGX A100, which contains eight of the GPUs in a 6U form factor, the Inspur NF5488M5-D and NF5488A5 use a compact 4U chassis that exhibits an excellent performance-to-density ratio.
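The 4U versus 6U comparison above can be made concrete with a little arithmetic. The following is a minimal sketch that uses only the GPU counts and chassis heights quoted in the text; the function name `gpus_per_rack_unit` is a hypothetical helper introduced for illustration, and the calculation says nothing about actual performance, only about physical density.

```python
from fractions import Fraction


def gpus_per_rack_unit(gpu_count: int, rack_units: int) -> Fraction:
    """Return GPU density as GPUs per rack unit (U)."""
    return Fraction(gpu_count, rack_units)


# Figures quoted in the text: eight A100 GPUs in either chassis.
inspur_density = gpus_per_rack_unit(8, 4)  # NF5488M5-D / NF5488A5, 4U
dgx_density = gpus_per_rack_unit(8, 6)     # DGX A100, 6U

# Ratio of the two densities (how many times denser the 4U chassis is).
density_gain = inspur_density / dgx_density

print(f"4U chassis: {float(inspur_density):.2f} GPUs per U")
print(f"6U chassis: {float(dgx_density):.2f} GPUs per U")
print(f"Relative density: {float(density_gain):.2f}x")
```

Under these figures the 4U chassis holds 1.5 times as many GPUs per rack unit, which is where the space savings would come from.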

High-density servers save both space and operating costs. Modern CPUs can contain up to 64 cores each (as in the AMD EPYC Rome family), and each core can operate independently of the others. In addition, new training algorithms can take advantage of multiple GPUs, aggregating their performance. When multiple GPUs are installed in a system, the interconnect performance between the GPUs is critical to overall performance. Bottlenecks between individual GPUs can lead to sub-optimal performance and slower completion of the task.

The NF5488M5-D and the NF5488A5 are designed to minimize the number of hops between the CPUs and GPUs, resulting in faster application performance. When so much computing power is packed into one enclosure that will be used for mission-critical applications, the power supplies and cooling systems need careful attention and must be designed along with the other components. The use of a 54V power supply scheme can improve power conversion efficiency to as much as 95%. By using high-efficiency redundant power supplies and an innovative mechanical design to dissipate heat, these servers are not only reliable under maximum loads but also reduce Operating Expenses (OPEX).
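To see why conversion efficiency matters for OPEX, it can help to look at how much input power is lost for a given load. The sketch below is illustrative only: the 5,000 W load and the 90% comparison point are hypothetical values chosen for the example, not figures from Inspur, and the helper names are invented here. Only the 95% efficiency comes from the text.

```python
def input_power_watts(load_watts: float, efficiency: float) -> float:
    """Power drawn at the input to deliver load_watts at a given efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return load_watts / efficiency


def conversion_loss_watts(load_watts: float, efficiency: float) -> float:
    """Power lost in conversion (input power minus delivered power)."""
    return input_power_watts(load_watts, efficiency) - load_watts


# Hypothetical load for illustration; not a published server specification.
HYPOTHETICAL_LOAD_W = 5000.0

# 0.95 is the efficiency quoted in the text; 0.90 is a hypothetical baseline.
for efficiency in (0.90, 0.95):
    loss = conversion_loss_watts(HYPOTHETICAL_LOAD_W, efficiency)
    print(f"{efficiency:.0%} efficient: {loss:.0f} W lost in conversion")
```

Because the loss scales with load, a few points of efficiency translate into a proportionally larger saving on a densely packed, heavily loaded server, and the lost power also has to be removed by the cooling system.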

Within this family, the NF5488M5-D, which contains up to two Intel Xeon Scalable Processors, is optimal for application scenarios that include image, video, and voice recognition. The NF5488M5-D is equipped with the latest Intel Cascade Lake CPUs and integrates Intel DL Boost, AVX-512, MKL, and other tools to provide users with optimized and tested configurations. This lets users with demanding AI training and inference needs increase their capabilities on commonly used hardware, enhancing AI deployment efficiency and helping enterprise customers reduce deployment time and TCO. Additional workloads for this server include financial analysis and intelligent customer service.

Inspur has also designed the NF5488A5 server with the highest data transmission efficiency in mind. This system incorporates two AMD EPYC processors with PCIe Gen4, which speeds data movement between the CPU and the GPU through both lower latency and higher bandwidth, resulting in higher overall performance for AI workloads. The system also allows for direct communication between the GPU and main memory, bypassing the CPU, which allows for even faster data transfer. It has been designed for application scenarios that include smart cities, 5G telecommunications, natural language processing, financial analysis, and intelligent customer service. The server will benefit AI users who are building an underlying computing platform with the highest data communication efficiency, thus accelerating improvements in application performance.

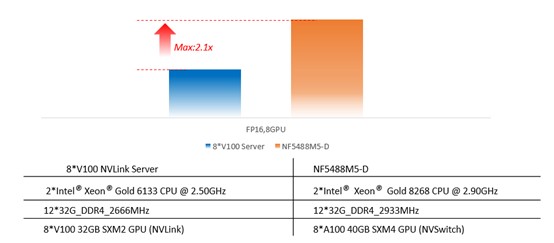

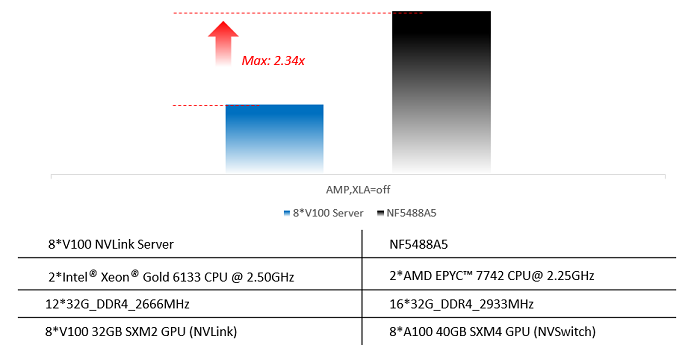

As the figures show, the NF5488M5-D and NF5488A5 have demonstrated a severalfold performance increase over the previous generation.

In the BERT training benchmark, the NF5488M5-D outperforms the previous generation of servers.

Figure 1 – On the BERT model, the performance of the NF5488M5-D is improved by up to 210% compared with the previous-generation 8*V100 server.

In the ResNet50 model benchmark, the NF5488A5 again shows its performance advantage.

Figure 2 – In ResNet50 training, the GPU utilization rate is higher (93%), and performance improves by up to 234% compared with the previous-generation 8*V100 server.

Overall, the performance of the NF5488M5-D and NF5488A5 increases dramatically compared with the previous generation, delivering up to 156 TFLOPS of FP64 Tensor Core performance. The new Inspur AI servers with NVIDIA A100 GPUs can significantly improve computing performance and are strong choices for customers.
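The 156 TFLOPS figure is consistent with simply summing the peak of eight GPUs. The sketch below assumes NVIDIA's published per-GPU peak of 19.5 TFLOPS for FP64 Tensor Core operations on the A100; that per-GPU number is not stated in this article and is brought in as an assumption, and the helper name is hypothetical. The result is a theoretical peak, not a measured benchmark.

```python
# Assumption: per-GPU FP64 Tensor Core peak from NVIDIA's A100 specifications.
A100_FP64_TENSOR_TFLOPS = 19.5

# From the text: up to eight A100 GPUs per server.
GPUS_PER_SERVER = 8


def aggregate_peak_tflops(per_gpu_tflops: float, gpu_count: int) -> float:
    """Theoretical peak for a server, assuming perfect scaling across GPUs."""
    return per_gpu_tflops * gpu_count


server_peak = aggregate_peak_tflops(A100_FP64_TENSOR_TFLOPS, GPUS_PER_SERVER)
print(f"Theoretical FP64 Tensor Core peak: {server_peak:.0f} TFLOPS")
```

Real workloads will land below this ceiling, since the sum assumes every GPU runs at peak with no communication overhead.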

Inspur Electronic Information Industry Co., LTD is a leading global data center and cloud computing solutions provider. Inspur delivers and deploys robust, performance-optimized, purpose-built solutions to major data centers around the globe to address important emerging fields and applications. These recently introduced servers will integrate easily into existing data centers and will speed up applications that need the latest technologies to lead the way with AI and ML.

Visit www.inspursystems.com for more information.