Over at Science Node, Lance Farrell writes that researchers are using XSEDE supercomputer resources to solve the mysteries of the Old Faithful geyser.

Once Lijun Liu saw the proximity of subduction zones to supervolcanoes, the game was afoot. His NSF-funded research project is a harbinger of the age of numerical exploration.

The Old Faithful geyser at Yellowstone National Park has thrilled park visitors for over a century, but it wasn’t until this year that scientists figured out the geophysical factors powering it.

With over 2 million visitors annually, Yellowstone remains one of the most popular nature destinations in the US. Spanning an area of almost 3,500 square miles, the park sits atop the Yellowstone Caldera. This caldera is the largest supervolcano in North America, and is responsible for the park’s geothermal activity.

Until last week, most geologists had explained this activity with the so-called mantle plume hypothesis. This elegant theory proposed an idealized situation where hot columns of mantle rock rose from the core-mantle boundary all the way to the surface, fueling the supervolcano and the geothermal geysers.

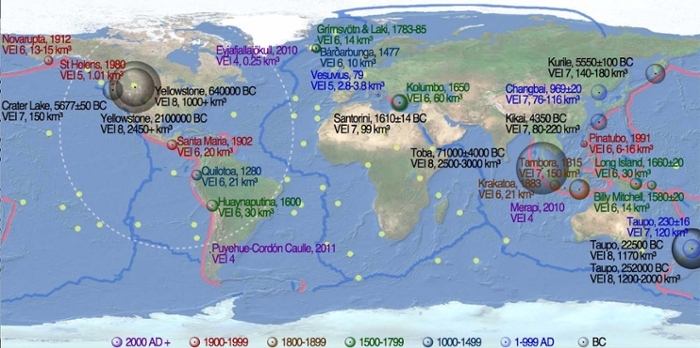

Neighborhood watch. A map of the worldwide distribution of supervolcanoes. The light reddish lines are subduction zones, the thick blue lines are mid-ocean ridges, and the size of the circles scales with the magnitude of supervolcanoes. Courtesy Lijun Liu.

This theory didn’t sit well, however, with Lijun Liu, assistant professor in the Department of Geology at the University of Illinois. “If you look at the distribution of supervolcanoes globally, you’ll find something very interesting. You will see that most if not all of them are sitting close to a subduction zone,” Liu observes. “This close vicinity made me wonder if there were any internal relations between them, and I thought it was necessary and intriguing to further investigate this.”

Solving the mystery

To investigate the formation of Yellowstone volcanism, Liu and co-author Tiffany Leonard turned to the supercomputers at the Texas Advanced Computing Center (TACC) and the National Center for Supercomputing Applications (NCSA). Using Stampede for benchmarking work in 2014 and Blue Waters for modeling in 2015, Liu and Leonard ran 100 models, each requiring a few hundred core hours. The models weren’t too computationally intensive, using only 256 cores and generating only about 10 terabytes of data. In subsequent research, Blue Waters, which is better suited to extreme-scale calculations, has allowed Liu to scale experiments up to 10,000 cores.

Lijun Liu, University of Illinois Department of Geology

To make the Yellowstone discovery, Liu received valuable technical assistance from the Extreme Science and Engineering Discovery Environment (XSEDE). “We have been using XSEDE machines from very early on, Lonestar, Ranger, and, more lately, Stampede. We got a lot of assistance from XSEDE on installing the code, so by the time we got to this particular project we were pretty fluent using the code.”

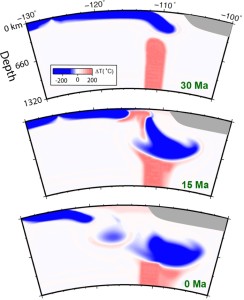

Liu and Leonard’s models, recently published by the American Geophysical Union, simulated 40 million years of North American geological activity. By using the most widely accepted history of surface plate motion and matching the complex mantle structure that geophysical imaging techniques reveal today, Liu’s team imposed two powerful constraints to make sure their models didn’t deviate from reality. The models left little doubt that the flows of mantle beneath Yellowstone are actually modulated by moving plates rather than a single mantle plume.

Analytical evolution

According to Liu, prior to high-performance computing (HPC), debates about Yellowstone volcanic activity were like the proverbial blind men touching and describing the elephant. Without HPC, scientists lacked the geophysical data or imaging techniques to see under the surface. Most of the models of that time relied heavily on surface records only.

“Numerical simulations are so important, especially now we are moving away from simple explanations and analytical solutions,” Liu admits. “We are definitely in the numerical era now. Most of these problems we couldn’t have solved a few years ago.”

Blue Waters douses Yellowstone. Simulating 40 million years of North American tectonic movement, Liu’s geodynamic models determined that subducting plates are always in the way of a rising mantle plume. Plumes can reach the surface only through breaks in the slab. Courtesy Lijun Liu.

But with the advent of HPC and seismic tomography about 10 years ago, geologists were finally able to peer into the subsurface. By 2010, the scientific landscape had shifted dramatically, when Earthscope, a nationwide seismic experiment funded by the US National Science Foundation (NSF), unearthed an unprecedented amount of data and correspondingly good imagery of the underlying mantle.

From these images, geologists could see not only localized slow seismic structures, the putative plumes, but also widespread fast anomalies, often called slabs or subducting oceanic plates. This breakthrough has raised more questions, spawning even more models and hypotheses. Because of the complexity of the system, this is a situation ripe for HPC, Liu reasons.

Understanding the volcanism powering Yellowstone is important because if this supervolcano erupts, it will affect a large area of the US. “That’s a real threat and a real natural hazard,” Liu quips. “But more seriously, if a mantle plume is powering the Yellowstone flows, then in theory its distribution could be more random — it can form almost anywhere. So it is possible that people in the Midwest who never worry about volcanoes are sitting right above a mantle plume.”

But if the subduction process is more responsible for Yellowstone, and most of us sit further away from the subduction zone, we can rest a little bit easier.

Liu’s research was made possible by funding from the NSF, support that procured not only supercomputing time but also student assistance. Providing an educational advantage is the more important benefit of NSF support, Liu says.

In sum, Liu is convinced of the importance of HPC to the future of geological analysis. “HPC and models with a multidisciplinary theme should be the trend and should be encouraged for future research because this is really the way to solve complex natural systems like Yellowstone.”

This story comes to us courtesy of Science Node under the Creative Commons license.