In this video, researchers from the Texas Advanced Computing Center describe how Intel data-centric technologies power the Frontera supercomputer, which is currently under installation.

The Texas Advanced Computing Center (TACC) will use Intel Xeon Platinum 8200 processors and Intel Optane DC persistent memory for its Frontera system. The system will give researchers the computing capabilities needed to grapple with some of science’s largest challenges. Frontera will provide greater processing and memory capacity than TACC has ever had, accelerating existing research and enabling new projects that would not have been possible with previous systems.

Frontera Configuration:

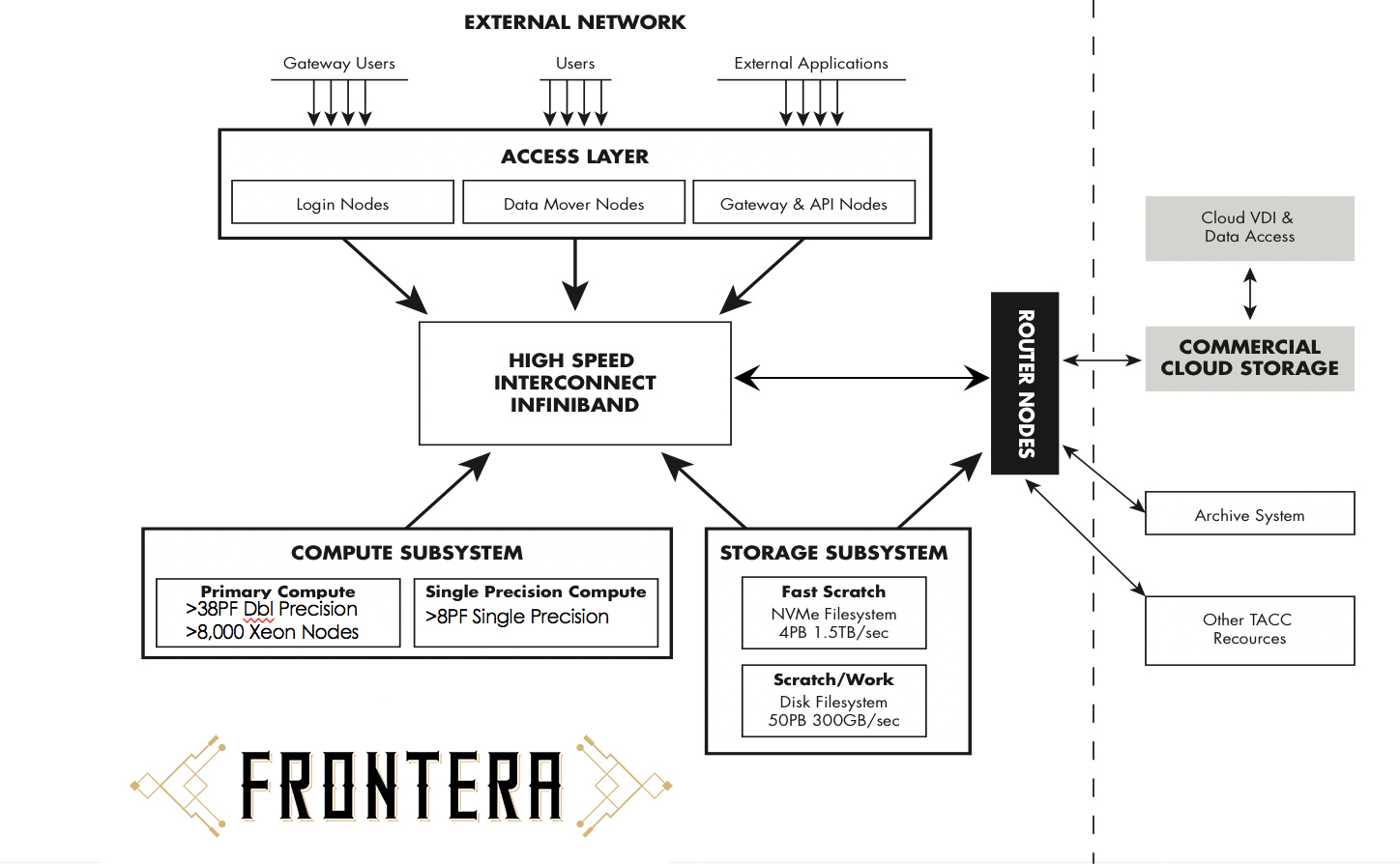

- Primary Compute System. The primary computing system will be provided by Dell/EMC and powered by Intel processors, connected by a Mellanox InfiniBand HDR and HDR-100 interconnect. The initial configuration of the system will have 8,008 available compute nodes.

- System Interconnect. Frontera compute nodes will be connected with HDR-100 links to each node, and HDR (200 Gb/s) links between leaf and core switches. The interconnect will be configured in a fat-tree topology with a small oversubscription factor of 11:9.

- Storage. Frontera will have multiple file systems. In addition to a home directory, users will be assigned to one of several scratch filesystems on a rotating basis. Each scratch filesystem will have a usable disk capacity of approximately 15 PB, and each individual filesystem is expected to sustain a bandwidth of more than 100 GB/s. Total scratch capacity will exceed 50 PB. Users with very high bandwidth or IOPS requirements will be able to request an allocation on an all-NVMe filesystem with an approximate capacity of 3 PB and a bandwidth of about 1.2 TB/s. We intend to limit the number of simultaneous users on the “solid state” scratch component. Users may also request an allocation on the “/work” filesystem, a medium-term filesystem (retained longer than scratch, but not as long as home or archive) that is shared among all TACC platforms. Work is intended for migrating data between systems and for “semi-persistent” storage (e.g., a copy of reference genomes that is infrequently updated but used by compute jobs over 1-2 years). Storage system hardware is provided by DataDirect Networks.
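
To put the interconnect and storage figures above in perspective, here is a minimal Python sketch of the arithmetic behind them. The link rates (100 Gb/s for HDR-100, 200 Gb/s for HDR), the 11:9 factor, and the capacity and bandwidth numbers come from the configuration list. The per-leaf-switch counts of 44 nodes and 18 uplinks are an illustrative assumption chosen because they reproduce the stated ratio, and the function names are hypothetical. Storage units are treated as decimal (1 PB = 1,000,000 GB), which is also an assumption.

```python
from fractions import Fraction

HDR100_GBPS = 100  # per-node link rate (HDR-100)
HDR_GBPS = 200     # leaf-to-core link rate (HDR), as stated in the list


def oversubscription(nodes_per_leaf: int, uplinks_per_leaf: int) -> Fraction:
    """Ratio of downlink to uplink bandwidth on one leaf switch."""
    down = nodes_per_leaf * HDR100_GBPS
    up = uplinks_per_leaf * HDR_GBPS
    return Fraction(down, up)


def seconds_to_transfer(capacity_pb: float, bandwidth_gb_per_s: float) -> float:
    """Time to stream a full filesystem at its peak rate (decimal units)."""
    return capacity_pb * 1_000_000 / bandwidth_gb_per_s


# Assumed leaf layout: 44 HDR-100 nodes down, 18 HDR links up.
ratio = oversubscription(nodes_per_leaf=44, uplinks_per_leaf=18)
print(f"oversubscription = {ratio.numerator}:{ratio.denominator}")

# One ~15 PB scratch filesystem at 100 GB/s, and the ~3 PB NVMe tier at 1.2 TB/s.
scratch_s = seconds_to_transfer(15, 100)
nvme_s = seconds_to_transfer(3, 1200)
print(f"scratch: {scratch_s / 3600:.1f} h, NVMe: {nvme_s / 60:.1f} min")
```

Under these assumptions, a leaf switch offers 4,400 Gb/s toward the nodes against 3,600 Gb/s toward the core, which reduces to 11:9. Streaming an entire scratch filesystem at 100 GB/s would take on the order of 40 hours, while the NVMe tier could in principle be read end to end in well under an hour.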