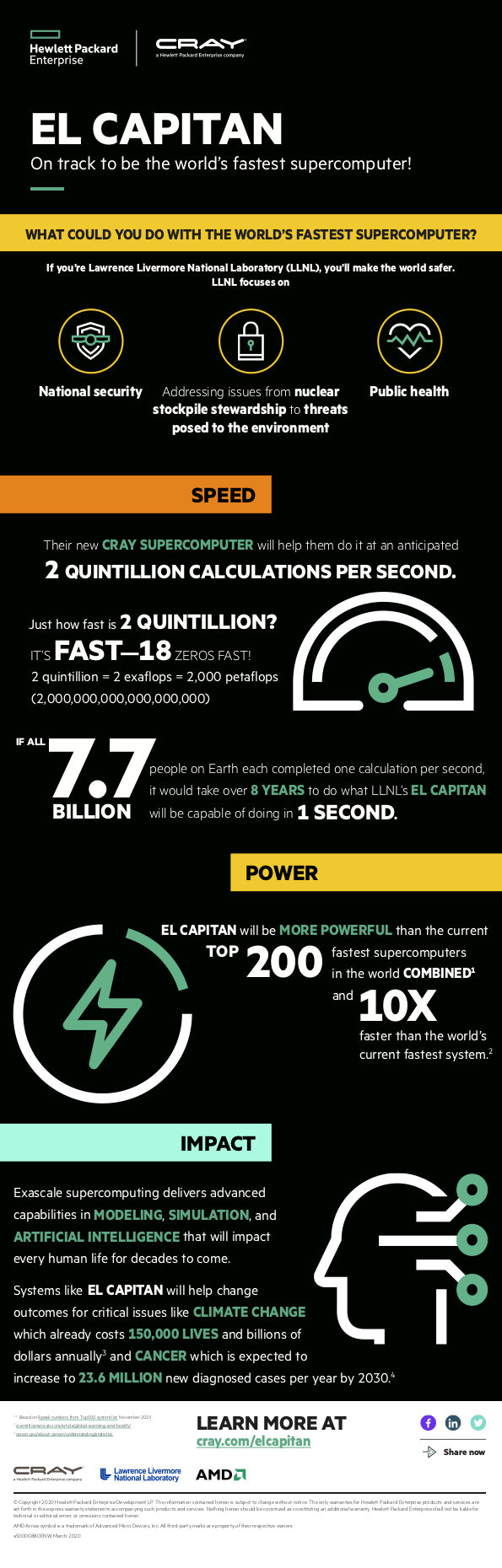

Today HPE announced that it will deliver the world’s fastest exascale-class supercomputer for NNSA at a record-breaking speed of 2 exaflops – 10X faster than today’s most powerful supercomputer. The new system, which the DOE’s Lawrence Livermore National Laboratory (LLNL) has named El Capitan, is expected to be delivered in early 2023 and will be managed and hosted by LLNL for use by the three NNSA national laboratories: LLNL, Sandia National Laboratories and Los Alamos National Laboratory. The system will enable advanced simulation and modeling to support the U.S. nuclear stockpile and ensure its reliability and security.

“We are pleased to continue our longstanding journey with HPE in co-developing innovative technologies for a range of solutions, and now, for the world’s most powerful, exascale-class supercomputer,” said Forrest Norrod, senior vice president and general manager of the Datacenter and Embedded Solutions Business Group, AMD. “We look forward to continuing our collaboration with HPE to bring together next-generation AMD EPYC CPUs and Radeon Instinct GPUs with HPE’s Cray Shasta system to power complex, data-intensive HPC and AI workloads for El Capitan that today’s systems cannot manage.”

HPE is optimizing the DOE’s El Capitan to power complex and time-consuming 3D exploratory simulations for NNSA missions that today’s state-of-the-art supercomputers cannot successfully manage. El Capitan will provide opportunities for researchers to explore new applications using emerging, data-intensive workloads such as modeling, simulation, analytics, and AI to support future NNSA missions.

The DOE’s El Capitan will use next-generation AMD EPYC™ processors, codenamed “Genoa” and featuring the “Zen 4” processor core; next-generation AMD Radeon™ Instinct GPUs, based on a new compute-optimized architecture; and the 3rd Generation AMD Infinity Architecture, which will provide a high-bandwidth, low-latency connection between the CPUs and GPUs.

Strengthening Nation’s Nuclear Stockpile, Security and Defense with Exascale Technologies

“As an industry and as a nation, we have achieved a major milestone in computing. HPE is honored to support the U.S. Department of Energy and Lawrence Livermore National Laboratory in a critical strategic mission to advance the United States’ position in security and defense,” said Peter Ungaro, senior vice president and general manager, HPC and Mission Critical Solutions (MCS), at HPE. “The computing power and capabilities of this system represent a new era of innovation that will unlock solutions to society’s most complex issues and answer questions we never thought were possible.”

HPE’s Cray Shasta technologies, which were built from the ground up to support a diverse set of processor and accelerator technologies to meet new levels of performance and scalability, will enable the DOE’s El Capitan to meet NNSA requirements. These include the NNSA’s Life Extension Program (LEP), a critical part of stockpile stewardship that aims to modernize aging weapons in the U.S. nuclear stockpile so that they remain safe, secure, and effective.

LLNL is managing the new system for the NNSA and has developed emerging techniques that allow researchers to create faster, more accurate models for primary missions across stockpile modernization and inertial confinement fusion (ICF), a key aspect of stockpile stewardship.

LLNL researchers will use the system to explore new applications that integrate AI and machine learning into HPC workloads. The laboratory is already applying HPE supercomputing and AI solutions to make breakthroughs in medical and drug research initiatives, including:

- Accelerating cancer drug discovery from six years to one year through the ATOM consortium, a partnership with GlaxoSmithKline (GSK), a multinational pharmaceutical company; the National Cancer Institute; and other DOE national laboratories.

- Understanding the dynamics and mutations of RAS proteins, which are linked to 30% of human cancers, by collaborating with the National Cancer Institute and other partner institutions.

Breaking Speed Barrier with 2 Exaflops

Systems like the DOE’s El Capitan are ushering in a new class of supercomputing with exascale-class systems that are 1,000X faster than the previous generation petascale systems first introduced 12 years ago.

The new performance record of 2 exaflops (2,000 petaflops) will be more powerful than the Top 200 fastest supercomputers in the world combined and is an increase of more than 30% from initially projected estimates calculated seven months ago. This was made possible by a new partnership between HPE, AMD and the U.S. DOE to combine HPE’s Cray Shasta system and Slingshot interconnect, a specialized HPC networking solution, with next-generation AMD EPYC™ processors and next-generation AMD Radeon Instinct GPUs. The decision to choose this architecture was based on NNSA’s strategic, mission critical requirements.

“The exceptional computing power promised by El Capitan, based on HPE’s Cray Shasta architecture, will ensure the NNSA laboratories can continue to excel at their national security missions and make it possible for the U.S. to remain competitive on the global stage in high performance computing for many years to come,” said Bill Goldstein, director, Lawrence Livermore National Laboratory. “We look forward to continuing our work with HPE and AMD to usher in this new exascale era with the most capable hardware on the planet.”

Enhanced Performance for DOE’s El Capitan

HPE and AMD jointly designed new technologies to support critical HPC and AI workloads with the following enhancements:

- Streamlined communication between HPE’s Cray Slingshot interconnect, a specialized HPC networking solution, and next-generation AMD Radeon Instinct GPUs that are based on a new compute-optimized architecture for workloads including HPC and AI

- High density compute blades powered by next-generation AMD EPYC™ processors, codenamed “Genoa” featuring the “Zen 4” processor core

- New approach using accelerator-centric compute blades (in a 4:1 GPU to CPU ratio, connected by the 3rd Gen AMD Infinity Architecture for high-bandwidth, low latency connections) to increase performance for data-intensive AI, machine learning and analytics needs by offloading processing from the CPU to the GPU.

Other performance enhancements include unique storage and software capabilities that are integrated with HPE’s Cray Shasta architecture. Additional use of flash-based local storage systems, designed specifically for the new system’s performance needs, will provide a buffer to balance existing on-board memory and data-tiering, which is monitored by Cray Shasta’s intelligent software solutions to automate data movement for optimal storing and timely access.

New LLNL Partnership to Demonstrate Optics in DOE’s El Capitan

HPE is expanding its partnership with LLNL to actively explore HPE optics technologies, a computing solution that uses light to transmit data, to feature in the DOE’s El Capitan. HPE’s optics technologies stem from R&D efforts related to PathForward, a program backed by U.S. DOE’s Exascale Computing Project. HPE developed and demonstrated breakthrough optics prototypes that integrate electrical-to-optical interfaces to enable broad use in future classes of system interconnects.

Together, HPE and LLNL are exploring ways to integrate these optics technologies with HPE’s Cray Slingshot for DOE’s El Capitan to transmit more data, more efficiently. This approach aims to improve power efficiency, reliability, and the ability to cost-effectively increase global system bandwidth.

In addition to the DOE’s El Capitan, HPE will deliver the other two U.S. DOE exascale systems announced in 2019, Aurora and Frontier.

Over time, HPE will integrate its exascale technologies into its broader HPC product portfolio to deliver supercomputers of any size for every data center, and democratize Exascale Era technologies for broader market uses.