“Los Alamos has lots of supercomputing power, and we do lots of simulations that are well supported here. But we’ve found that Big Data analysis projects need to use different frameworks, which often have dependencies that differ from what we already have on the supercomputer. So, we’ve developed a lightweight ‘container’ approach that lets users package their own user-defined software stack in isolation from the host operating system.”

ISC 2017 Workshop Preview: Optimizing Linux Containers for HPC & Big Data Workloads

Christian Kniep is hosting a half-day Linux Container Workshop on Optimizing IT Infrastructure and High-Performance Workloads on June 23 in Frankfurt. “Docker, as the dominant flavor of Linux Containers, continues to gain momentum within datacenters all over the world. It is able to benefit legacy infrastructure by leveraging the lower overhead compared to traditional, hypervisor-based virtualization. But there is more to Linux Containers, and Docker in particular, which this workshop will explore.”

HPC Workflows Using Containers

“In this talk we will discuss a workflow for building and testing Docker containers and their deployment on an HPC system using Shifter. Docker is widely used by developers as a powerful tool for standardizing the packaging of applications across multiple environments, which greatly eases the porting efforts. On the other hand, Shifter provides a container runtime that has been specifically built to fit the needs of HPC. We will briefly introduce these tools while discussing the advantages of using these technologies to fulfill the needs of specific workflows for HPC, e.g., security, high-performance, portability and parallel scalability.”

New Univa Grid Engine Release Doubles Performance over Previous Versions

“Grid Engine 8.5’s significant performance improvement for submitting jobs and reduced scheduling times will have a profound impact on our customers’ bottom line as they can now get more work done in the same amount of time. By reducing the wait time for end-users, our customers can save significant costs. Managing the purchase of more servers and getting higher throughput means deadlines can be met with confidence and on budget. Our goal was to increase the value Univa provides over previous versions of Grid Engine, including the popular open source version 6.2U5,” said Bill Bryce, Vice President of Products at Univa.

Designing HPC & Deep Learning Middleware for Exascale Systems

DK Panda from Ohio State University presented this deck at the 2017 HPC Advisory Council Stanford Conference. “This talk will focus on challenges in designing runtime environments for exascale systems with millions of processors and accelerators to support various programming models. We will focus on MPI, PGAS (OpenSHMEM, CAF, UPC and UPC++) and Hybrid MPI+PGAS programming models by taking into account support for multi-core, high-performance networks, accelerators (GPGPUs and Intel MIC), virtualization technologies (KVM, Docker, and Singularity), and energy-awareness. Features and sample performance numbers from the MVAPICH2 libraries will be presented.”

Video: Singularity – Containers for Science, Reproducibility, and HPC

“Explore how Singularity liberates non-privileged users and host resources (such as interconnects, resource managers, file systems, accelerators …), allowing users to take full control to set up and run in their native environments. This talk explores how Singularity combines software packaging models with minimalistic containers to create very lightweight application bundles which can be simply executed and contained completely within their environment or be used to interact directly with the host file systems at native speeds. A Singularity application bundle can be as simple as containing a single binary application or as complicated as containing an entire workflow and is as flexible as you will need.”

Microsoft Releases Batch Shipyard on GitHub for Docker on Azure

“Available on GitHub as Open Source, the Batch Shipyard toolkit enables easy deployment of batch-style Dockerized workloads to Azure Batch compute pools. Azure Batch enables you to run parallel jobs in the cloud without having to manage the infrastructure. It’s ideal for parametric sweeps, Deep Learning training with NVIDIA GPUs, and simulations using MPI and InfiniBand.”

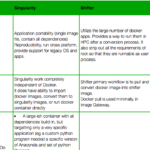

New Site Compares Docker, Singularity, Shifter, and Univa Grid Engine Container Edition

A new site developed by Tin H compares the HPC virtualization capabilities of Docker, Singularity, Shifter, and Univa Grid Engine Container Edition. “They bring the benefits of containers to the HPC world and some provide very similar features. The subtleties are in their implementation approach. MPI may be the place with the biggest difference.”

Nimbix Cloud Adds Docker Integration to JARVICE

“PushToCompute is the easiest and most advanced DevOps pipeline for high performance applications available today,” said Nimbix CTO Leo Reiter. “It seamlessly enables serverless computing of even the most complex workflows, greatly simplifying application deployment at scale, and eliminating the need for any platform orchestration or user interface work. Developers simply focus on their specific functionality, rather than on building cloud capabilities into their applications.”

Putting HPC into the Hands of Every Engineer and Scientist

In this special guest feature from Scientific Computing World, Wolfgang Gentzsch explains the role of HPC container technology in providing ubiquitous access to HPC. “The advent of lightweight, pervasive, packageable, portable, scalable, interactive, easy to access and use HPC application containers based on Docker technology, running seamlessly on workstations, servers, and clouds, is bringing us ever closer to the democratization of HPC.”