Dec. 6, 2022 — NeuReality, an AI hardware startup specializing in AI inferencing platforms, announced a $35M Series A funding round led by Samsung Ventures, Cardumen Capital, Varana Capital, OurCrowd and XT Hitech. SK Hynix, Cleveland Avenue, Korean Investment Partners, StoneBridge, and Glory Ventures also participated in the round. The round brings NeuReality’s total funding to […]

Tenstorrent Ships Grayskull High Performance AI Processor

Canadian startup Tenstorrent has launched its new Grayskull high-performance AI processor. According to the company, Grayskull provides a substantial performance and power-efficiency advantage over existing inference solutions and enables cost-effective machine learning platforms that offer multiple levels of inference performance. “Grayskull delivers significant baseline performance improvements on today’s most widely used machine learning models, like BERT, ResNet-50 and others, while opening up orders of magnitude gains using conditional execution on both current models and future ones optimized for this approach.”

Research Findings: HPC-Enabled AI

Steve Conway from Hyperion Research gave this talk at the HPC User Forum. “AI adds fourth branch to the scientific method, inferencing. Inferencing complements theory, experiments and established simulation methods. Essentially, inferencing is the ability to guess, based on incomplete information. At the same time, simulation is becoming much more data intensive with the rise of iterative methods. When inferencing is applied to data intensive simulation, the result is intelligent simulation.”

Podcast: HPC & AI Convergence Enables AI Workload Innovation

In this Conversations in the Cloud podcast, Esther Baldwin from Intel describes how the convergence of HPC and AI is driving innovation. “On the topic of HPC & AI converged clusters, there’s a perception that if you want to do AI, you must stand up a separate cluster, which Esther notes is not true. Existing HPC customers can do AI on their existing infrastructure with solutions like HPC & AI converged clusters.”

‘AI on the Fly’: Moving AI Compute and Storage to the Data Source

The impact of AI is just starting to be realized across a broad spectrum of industries. Tim Miller, Vice President Strategic Development at One Stop Systems (OSS), highlights a new approach — ‘AI on the Fly’ — where specialized high-performance accelerated computing resources for deep learning training move to the field near the data source. Moving AI computation to the data is another important step in realizing the full potential of AI.

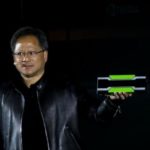

Nvidia Unveils World’s First GPU Design for Inferencing

Nvidia’s GPU platforms have been widely used on the training side of the Deep Learning equation for some time now. Today the company announced a new Pascal-based GPU tailor-made for the inferencing side of Deep Learning workloads. “With the Tesla P100 and now Tesla P4 and P40, NVIDIA offers the only end-to-end deep learning platform for the data center, unlocking the enormous power of AI for a broad range of industries,” said Ian Buck, general manager of accelerated computing at NVIDIA.