The OpenMP community has issued its Call for Submissions for OpenMPCon 2019 and IWOMP 2019. The events take place September 9-13 in Auckland, New Zealand. “OpenMPCon is the annual conference for OpenMP developers to discuss all aspects of parallel programming with OpenMP. The International Workshop on OpenMP (IWOMP) is an annual workshop dedicated to the promotion and advancement of all aspects of parallel programming with OpenMP.”

Celebrating 20 Years of the OpenMP API

“The first version of the OpenMP application programming interface (API) was published in October 1997. In the 20 years since then, the OpenMP API and the slightly older MPI have become the two stable programming models that high-performance parallel codes rely on. MPI handles the message passing aspects and allows code to scale out to significant numbers of nodes, while the OpenMP API allows programmers to write portable code to exploit the multiple cores and accelerators in modern machines.”

Dispelling the Myth “OpenMP Does Not Scale”

Ruud van der Pas from Oracle presented this talk at OpenMPCon. “Unfortunately it is a very widespread myth that OpenMP Does Not Scale – a myth we intend to dispel in this talk. Every parallel system has its strengths and weaknesses. This is true for clustered systems, but also for shared memory parallel computers. While nobody in their right mind would consider sending one zillion single byte messages to a single node in a cluster, people do the equivalent in OpenMP and then blame the programming model. Also, shared memory parallel systems have some specific features that one needs to be aware of. Few do though. In this talk we use real-life case studies based on actual applications to show why an application did not scale and what was done to change this. More often than not, a relatively simple modification, or even a system level setting, makes all the difference.”
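
To make the "zillion single byte messages" analogy concrete, here is a minimal sketch in C of one common way such overhead can arise in OpenMP. It is not taken from the talk: the function names `sum_fine_grained` and `sum_coarse_grained` and the row-major matrix layout are assumptions made purely for illustration. The first version opens a parallel region for every row, so the fork/join cost is paid many times for very little work; the second opens a single region and hands each thread whole rows.

```c
#include <stddef.h>

/* Hypothetical example, not from the talk.
   Fine-grained version: a parallel region is opened once per row, so the
   thread start-up cost and the implicit barrier are paid `rows` times
   while each region does only `cols` additions. */
double sum_fine_grained(const double *a, int rows, int cols)
{
    double total = 0.0;
    for (int i = 0; i < rows; ++i) {
        double row_sum = 0.0;
        #pragma omp parallel for reduction(+:row_sum)
        for (int j = 0; j < cols; ++j)
            row_sum += a[(size_t)i * cols + j];
        total += row_sum;
    }
    return total;
}

/* Coarse-grained version: one parallel region for the whole matrix.
   Each thread sums complete rows and the partial totals are combined
   once by the reduction clause. */
double sum_coarse_grained(const double *a, int rows, int cols)
{
    double total = 0.0;
    #pragma omp parallel for reduction(+:total)
    for (int i = 0; i < rows; ++i) {
        double row_sum = 0.0;
        for (int j = 0; j < cols; ++j)
            row_sum += a[(size_t)i * cols + j];
        total += row_sum;
    }
    return total;
}
```

Both functions return the same sum up to floating-point rounding; only the amount of parallel overhead differs. With GCC or Clang the pragmas take effect when compiling with `-fopenmp`; without that flag they are ignored and the code runs serially. How much the two versions differ in practice depends on the matrix shape, the compiler, and the machine, so measure before drawing conclusions.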

Video: Enabling Application Portability across HPC Platforms

“In this presentation, we will discuss several important goals and requirements of portable standards in the context of OpenMP. We will also encourage audience participation as we discuss and formulate the current state-of-the-art in this area and our hopes and goals for the future. We will start by describing the current and next generation architectures at NERSC and OLCF and explain how the differences require different general programming paradigms to facilitate high-performance implementations.”

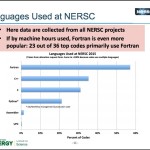

Video: Using OpenMP at NERSC

“This presentation will describe how OpenMP is used at NERSC. NERSC is the primary supercomputing facility for the Office of Science in the US Department of Energy (DOE). Our next production system will be an Intel Xeon Phi Knights Landing (KNL) system, with 60+ cores per node and 4 hardware threads per core. The recommended programming model is hybrid MPI/OpenMP, which also promotes portability across different system architectures.”

OpenMPCon Lists Abstracts and Tutorials

Today the OpenMPCon Developer Conference published its abstracts of papers and tutorials.

Speaker Lineup Announced for OpenMPCon Developer Conference

Today the OpenMP Architecture Review Board (ARB) announced the full line-up of world-class keynote speakers, technical presentations, tutorials and panel sessions to be hosted at the inaugural OpenMPCon Developer Conference, which will take place Sept. 28-30 in Aachen, Germany.