OLCF and Stony Brook: Using HPC Simulations to Learn How Cicada Wings Kill Bacteria

Over the past decade, teams of engineers, chemists and biologists have analyzed the physical and chemical properties of cicada wings, hoping to unlock the secret of their ability to kill microbes on contact. If this function of nature can be replicated by science, it may lead to products with inherently antibacterial surfaces that are more […]

New TOP500 HPC List: Frontier Extends Lead with Performance Upgrade

After a flurry of new competitors in 2022 at the top of the TOP500 list of the world’s most powerful supercomputers, the first list of 2023 – issued here in Hamburg this morning at the ISC conference – reveals the same top 10 systems in the same order. Still, the HPC community will no doubt […]

Orion: Frontier’s Massive File System

We’re accustomed to the massive scale of everything associated with exascale supercomputing. Now we’re getting details on the file system that will support Frontier, the world’s first exascale-certified system housed at Oak Ridge Leadership Computing Facility’s High-Performance Computing Storage and Archive Group at Oak Ridge National Laboratory. The file system, called Orion, consists of 50 cabinets […]

Oak Ridge Lab and Veterans Affairs Use Summit Supercomputer Security Framework for Health Research

Dec. 1, 2022 — A team from Oak Ridge National Laboratory (ORNL) and the US Department of Veterans Affairs (VA) used the CITADEL security framework to securely transfer and analyze veterans’ health records on ORNL’s Summit, an IBM AC922 supercomputer housed at the Oak Ridge Leadership Computing Facility, a US Department of Energy (DOE) Office […]

Scientists Using Frontier Supercomputer Win 2022 Gordon Bell Prize, Another Frontier Team Named Prize Finalist

[SPONSORED CONTENT] How many researchers can say they’ve not only run their scientific job on the AMD-powered Frontier supercomputer, the world’s no. 1 ranked HPC system and the first exascale-class machine, but also on Fugaku, Summit and Perlmutter, the world’s second-, fifth- and eighth-ranked HPC systems, respectively? But that’s the case with an international group of researchers working on particle-in-cell simulations who have developed code that won….

Research Team Uses Summit to Earn Gordon Bell Prize Nomination for Simulating Carbon in Extreme Conditions

A research team used machine-learned descriptions of interatomic interactions on the 200-petaflop Summit supercomputer at the US Department of Energy’s (DOE’s) Oak Ridge National Laboratory (ORNL) to model more than a billion carbon atoms at quantum accuracy and observe how diamonds behave under some of the most extreme pressures and temperatures imaginable. The team was led by scientists […]

ORNL Study on COVID-19 Earns Gordon Bell Prize Nomination

As the coronavirus pandemic entered its second year, a team of scientists from the U.S. Department of Energy’s Oak Ridge National Laboratory used the nation’s fastest supercomputer to streamline the search for potential treatments. “The approach we used to search for promising molecules resembles natural selection in fast forward,” said Andrew Blanchard, one of the […]

Toolkit Delivers 4D Visualization, Addresses Data Volume Challenges in Exascale

The Exascale Computing Project (ECP) has announced that a team of researchers has developed the Feature Tracking Kit (FTK), which uses simplicial spacetime meshing to simplify, scale, and deliver novel feature-tracking algorithms for in situ data analysis and scientific visualization. FTK delivers feature-tracking tools, scales feature-tracking algorithms in distributed and parallel environments, and simplifies development […]

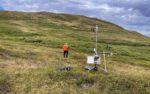

ORNL Researchers, Summit HPC Support IPCC Climate Change Report

As part of the Next-Generation Ecosystem Experiments Arctic project, scientists are gathering and incorporating new data about the Alaskan tundra into global models that predict the future of our planet. Credit: ORNL/U.S. Dept. of Energy

Improved data, models and analyses from Oak Ridge National Laboratory scientists and many other researchers in the latest global climate […]

Summit Supercomputer Helps Crack Code to Signature Superconductor ‘Kink’

May 19, 2021 — Scientists have long been trying to understand the behavior of superconductors, materials that have zero electrical resistance when they reach sufficiently low temperatures. Superconductors might be useful for technologies such as magnets for MRIs, fusion devices, and particle accelerators. To understand superconductors, one concept is pivotal: phonons, which are quantum waves […]