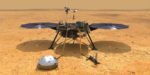

NASA supercomputers have helped several Mars landers survive the nerve-racking seven minutes of terror. During this hair-raising interval, a lander enters the Martian atmosphere and needs to automatically execute many crucial actions in precise order….

Exascale Software and NASA’s ‘Nerve-Racking 7 Minutes’ with Mars Landers

Exascale Computing Project: Leveraging HPC and Neural Networks for Cancer Research

What happens when Department of Energy (DOE) researchers join forces with chemists and biologists at the National Cancer Institute (NCI)? They use the most advanced high-performance computers to study cancer at the molecular, cellular and population levels.

Exascale: Bringing Engineering and Scientific Acceleration to Industry

At SC23, held in Denver, Colorado, last November, members of ECP’s Industry and Agency Council, composed of U.S. business executives, government agencies, and independent software vendors, reflected on how ECP and the move to exascale are impacting current and planned use of HPC….

@HPCpodcast: Views and Insights on the New TOP500 List

The big news on the Monday of each annual Supercomputing Conference is the release of the new TOP500 ranking of the world’s most powerful supercomputers. At this year’s SC23 here in Denver, the big news is that the top 10 of this year’s list has more changes than any list in recent memory….

Aurora Exascale Install Update: Cautious Optimism

The twice-annual TOP500 list of the world’s most powerful supercomputers is not universally loved; arguments persist over whether the LINPACK benchmark is an optimal way to assess HPC system performance. But few would dispute that it serves a valuable purpose: for those installing leadership-class supercomputers, the TOP500 poses a challenge and a looming deadline that “concentrates the mind wonderfully.”

@HPCpodcast: Paul Messina and the Journey to Exascale

From the early days of supercomputing through the success of exascale supercomputing, few HPC luminaries have played as important and integral a leadership role in HPC as Dr. Paul Messina. So as we observe Exascale Day today, we are delighted to discuss the exascale journey with someone instrumental to the 10 orders of magnitude of improvement in ….

Building a Capable Computing Ecosystem for Exascale

With ECP, working together was a prerequisite for participation. “From the beginning, the teams had this so-called ‘shared fate,’” says Siegel. When incorporating new capabilities, applications teams had to consider relevant software tools developed by others that could help meet their performance targets, and if they didn’t choose to use them….

LLNL: 9,000 Exascale Nodes for Power Grid Optimization

Ensuring the nation’s electrical power grid can function with limited disruptions in the event of a natural disaster, catastrophic weather or a manmade attack is a key national security challenge. Compounding the challenge of grid management is the increasing number of renewable energy sources such as solar and wind that are continually added to the […]

Members of ECP’s Industry Council in Panel at SC23 on Exascale Computing’s Impact on Industry

July 31, 2023 — At SC23, there will be a panel discussion titled “The Impact of Exascale and the Exascale Computing Project on Industry” featuring members of the Exascale Computing Project’s Industry and Agency Council (IAC), who will discuss how the ECP and the move to exascale computing are impacting industry’s current and planned use of […]

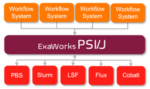

ExaWorks: Tested Component for HPC Workflows

ExaWorks is an Exascale Computing Project (ECP)–funded project that provides access to hardened and tested workflow components through a software development kit (SDK). Developers use this SDK and associated APIs to build and deploy production-grade, exascale-capable workflows on US Department of Energy (DOE) and other computers. The prestigious Gordon Bell Prize competition highlighted the success of the ExaWorks SDK when the winner and two of three finalists in the 2020 Association for Computing Machinery (ACM) Gordon Bell Special Prize for High Performance Computing–Based COVID-19 Research competition leveraged ExaWorks technologies.